Calendar

Dimitri Lezcano, M.S.E.

PhD Candidate

Laboratory for Computational Sensing and Robotics

Title: Shape-Sensing, Shape-Prediction and Sensor Location Optimization of FBG-Sensorized Needles

Abstract:

Needle insertion is typical for surgical interventions including biopsy, cryoablation, and injection. To reduce tissue damage and to avoid vital organs and obstacles, asymmetric bevel-tipped flexible needles are commonly used for needle insertion. The challenges of accurate needle placement in minimally invasive surgeries require modalities for determining the needle position during insertion. Current modalities for 3D needle positioning, like magnetic resonance imaging, computed tomography, and ultrasound, are too slow, require large amounts of radiation over sustained periods, and/or are not precise enough. Embedding fiber Bragg grating (FBG) sensors in flexible bevel-tipped needles enables shape-sensing for accurate, real-time needle localization during needle insertion. Our shape-sensing model leverages Lie group theory and curvature sensing not only to provide accurate 3D shape-sensing, but also to perform shape-prediction during needle insertion. The conducted experiments will demonstrate our model’s effectiveness in determining and predicting needle shape in isotropic phantom tissue using a novel hybrid deep learning and model-based approach. Furthermore, a presentation of constructive optimization of FBG sensor placement, along with the framework for stochastically modeling needle shape-sensing, will be included.

Bio:

Dimitri Lezcano is a fifth-year PhD candidate in LCSR’s Advanced Medical Instrumentation and Robotics Laboratory at Johns Hopkins University working with Professor Iulian Iordachita and Professor Jin Seob Kim. He received a B.A. in Physics and Mathematics from McDaniel College (2015) and an M.S.E. in Robotics (2020) from Johns Hopkins University. His research focuses on the instrumentation and application of flexible, shape-sensing needles in minimally invasive surgical interventions.

Wangqu Liu

Ph.D. Candidate, Gracias Lab

Department of Chemical and Biomolecular Engineering

Title: Autonomous Untethered Microinjectors for Gastrointestinal Delivery of Insulin

Abstract:

Delivering macromolecular drugs like insulin via the gastrointestinal (GI) tract is challenging due to the low stability of these drugs and their poor absorption through the tight GI epithelium. An innovative approach has been developed using untethered microscopic robots to break the epithelial barrier and improve the delivery of these drugs.

In this talk, I will present our research on autonomous untethered micro-robotic injectors for the gastrointestinal delivery of insulin. These submillimeter-sized microinjectors utilize thermally activated, prestressed thin films that function like micro-spring-loaded latches. Once triggered by body temperature, the arms of microinjectors self-fold. The shape-changing motion allows the injection tip to penetrate the GI epithelium, efficiently delivering insulin with bioavailability in line with intravenous injection. Due to their small size, tunability in sizing and dosing, wafer-scale fabrication, and parallel, autonomous operation, we anticipate these microinjectors will significantly advance drug delivery across the GI tract mucosa to the systemic circulation safely. We will conclude the talk by discussing the future development of ingestible active drug delivery systems incorporating microinjectors.

Bio:

Wangqu is a Ph.D. candidate in the Chemical and Biomolecular Engineering department, advised by Prof. David Gracias. His work mainly focuses on the development of shape-morphing microdevices and related systems for biomedical applications such as drug delivery, minimally invasive surgery, and biopsy. He received a B.Eng. in Chemical Engineering from Beijing Forestry University (2017), where he focused on developing biomass-based sustainable nanomaterials for water treatment. He later joined Johns Hopkins University for his M.Sc. (2019), where he worked on 3D printing of functional hydrogel materials and shape-changing structures.

Abstract:

Complex and unstructured environments pose several challenges for traditional rigid robot technologies.

Inspired by biological systems, soft robots offer a promising alternative to their rigid counterparts and demonstrate increased resilience and adaptation, resulting in machines that can safely interact with natural environments.

Mimicking how biological systems use their soft and dexterous body to interact with and exploit their surroundings entails addressing multiple fundamental challenges related to the design, manufacturing, and control of soft robots.

In this talk, I will present our research on developing new manufacturing methods to enable the fabrication of multi-degrees-of-freedom soft robots with distributed actuation and multiscale features.

I will also discuss opportunities and challenges arising in deploying multi-degrees-of-freedom soft robots in the real world. Specifically, I will introduce our work on methods to embed control and computational capabilities onboard soft robots to increase their autonomy, focusing on our efforts towards enabling electronic control of multi-DoF fluidic soft robots.

Finally, I will present our work on the application of soft robotic technologies in minimally invasive surgery. I will discuss various applications, including atraumatic manipulation of large abdominal organs and accurate and effective manipulation of delicate structures inside the beating heart.

Bio:

Tom Ranzani received a Bachelor’s and Master’s degree in Biomedical Engineering from the University of Pisa, Italy. He did his Ph.D. at the BioRobotics Institute of the Sant’Anna School of Advanced Studies. In 2014, he joined the Wyss Institute for Biologically Inspired Engineering at the Harvard John A. Paulson School of Engineering and Applied Sciences as a postdoctoral fellow.

He is currently an Assistant Professor in the Departments of Mechanical Engineering and Biomedical Engineering and in the Division of Materials Science and Engineering at Boston University, where he established the Morphable Biorobotics Lab in 2018.

In 2020 he was awarded the NIH Trailblazer Award for New and Early Stage Investigators.

His research focuses on soft and bioinspired robotics with applications ranging from underwater exploration to surgical and wearable devices. He is interested in expanding the potential of soft robots across different scales to develop novel reconfigurable soft-bodied robots capable of operating in environments where traditional robots cannot.

Recent technological advances in the field of surgical robotics have resulted in the development of a range of new techniques and technologies that have reduced patient trauma, shortened hospitalization, and improved diagnostic accuracy and therapeutic outcome. Despite the many appreciated benefits of robot-assisted minimally invasive surgery (MIS), there are still significant drawbacks associated with these technologies, including the dexterity, intelligence, and autonomy of the developed robotic devices and the prognosis design of medical devices and implants.

The dexterity limitation is associated with the poor accessibility to the areas of interest and insufficient instrument control and ergonomics caused by rigidity of the conventional instruments and implants. In other words, the ability to adequately access different target anatomy is still the main challenge of MIS and endoscopic procedures, demanding specialized instrumentation, sensing, and control paradigms.

To enhance the safety of robot-assisted procedures, current robotics research is also exploring new ways of providing synergistic intelligent semi/autonomous control between the surgeon and the robot. In this context, the robot can perform certain surgical tasks autonomously under the supervision of the surgeon. However, such autonomy not only requires understanding the robot’s perception and adaptation to dynamically changing environments of the tissue, but also requires understanding the mental workload and decision-making state of the surgeon as the decision-maker and key component of this system. This demands Surgeon-Centric Brain-In-the-Loop Autonomous Control techniques.

To address these challenges, this talk covers our efforts towards engineering of surgery (surgineering) and bringing dexterity and autonomy to various robot-assisted minimally invasive surgical procedures. Particularly, I will discuss our efforts towards enhancing the existing paradigm in spinal fixation, colorectal cancer diagnosis, and bioprinting of volumetric muscle loss injuries using continuum manipulators, soft sensors, flexible implants, and semi/autonomous intelligent surgical robotic systems.

Bio:

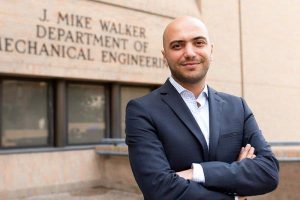

Dr. Farshid Alambeigi has been an Assistant Professor at the Walker Department of Mechanical Engineering at the University of Texas at Austin since August 2019. He is also one of the core faculty members of Texas Robotics. Dr. Alambeigi received his Ph.D. in Mechanical Engineering from Johns Hopkins University in 2019. He also holds an M.Sc. degree (2017) in Robotics from Johns Hopkins University. In summer of 2018, Dr. Alambeigi received the 2019 SIEBEL Scholarship for academic excellence and demonstrated leadership. In 2020, Dr. Alambeigi received the NIH NIBIB Trailblazer Career Award to develop novel flexible implants and robots for minimally invasive spinal fixation surgery. He has also received the prestigious 2022 NIH Director’s New Innovator Award to develop an in vivo bioprinting surgical robotic system for treatment of volumetric muscle loss.

At The University of Texas at Austin, Dr. Alambeigi directs the Advanced Robotic Technologies for Surgery (ARTS) Lab. Dr. Alambeigi’s research focuses on developing high-dexterity and situationally aware continuum manipulators, soft robots, and appropriate instruments and sensors designed for less/minimally invasive treatment of various medical applications. Utilizing these novel surgical instruments together with intelligent control algorithms, the ARTS Lab, in collaboration with the UT Dell Medical School, will work toward engineering of the surgery (Surgineering) and partnering dexterous intelligent robots with surgeons. Ultimately, our goal is to augment clinicians’ skills and the quality of surgery to further improve patient safety and outcomes.

“Variable Topology Truss: A Novel Approach to Modular Self-Reconfigurable Robots”

Abstract:

Conventional robotic systems are most effective in structured environments with well-defined tasks. The next frontier of robotics is to create systems that can operate in challenging environments while autonomously adapting to changing and uncertain task requirements. In the field of modular self-reconfigurable robotics, we approach this challenge by designing a set of robotic building blocks that can be combined to form a variety of robot morphologies. By autonomously rearranging these modules, the system can change its shape to complete a wider variety of tasks than is possible with a fixed morphology.

In this talk, I will present my research on a new modular robot, the Variable Topology Truss (VTT). Most existing modular self-reconfigurable robots use cube-shaped modules that connect together on a lattice or as a serial string of joints. These architectures are convenient when it comes to designing reconfiguration algorithms, but they face serious practical challenges when it comes to scaling the system up to solve larger tasks. Instead, VTT uses a truss-based architecture. Individual modules are beams which can extend or retract using a novel high-extension-ratio linear actuator: the Spiral Zipper. By connecting the beam modules together like a truss, we can create large, lightweight structures with much greater structural efficiency than conventional modular architectures. Furthermore, the length-changing ability of the Spiral Zipper allows the system to more flexibly adapt its scale and geometry without needing to use as many modules. However, the truss architecture poses new challenges when it comes to motion and reconfiguration planning. I will discuss the hardware design of the VTT system as well as my research on collision-free motion and reconfiguration planning for this novel system.

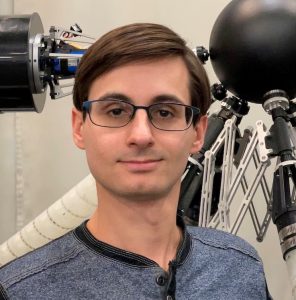

Bio:

Alexander Spinos received his Bachelor’s degree in Mechanical Engineering from Johns Hopkins University. He then joined the Modlab in GRASP at the University of Pennsylvania, where he received his PhD in Mechanical Engineering and Applied Mechanics. His dissertation centered around the mechanical design and self-reconfiguration planning of the Variable Topology Truss, a modular self-reconfigurable parallel robot. He is now a robotics researcher at the JHU Applied Physics Lab, where he works on multi-robot planning and the design of novel robot hardware.

Title: Decision Making with Internet-Scale Knowledge

Abstract: Machine learning models pretrained on internet data have acquired broad knowledge about the world but struggle to solve complex tasks that require extended reasoning and planning. Sequential decision making, on the other hand, has empowered AlphaGo’s superhuman performance, but lacks visual, language, and physical knowledge about the world. In this talk, I will present my research towards enabling decision making with internet-scale knowledge. First, I will illustrate how language models and video generation are unified interfaces that can integrate internet knowledge and represent diverse tasks, enabling the creation of a generative simulator to support real-world decision-making. Second, I will discuss my work on designing decision making algorithms that can take advantage of generative language and video models as agents and environments. Combining pretrained models with decision making algorithms can effectively enable a wide range of applications such as developing chatbots, learning robot policies, and discovering novel materials.

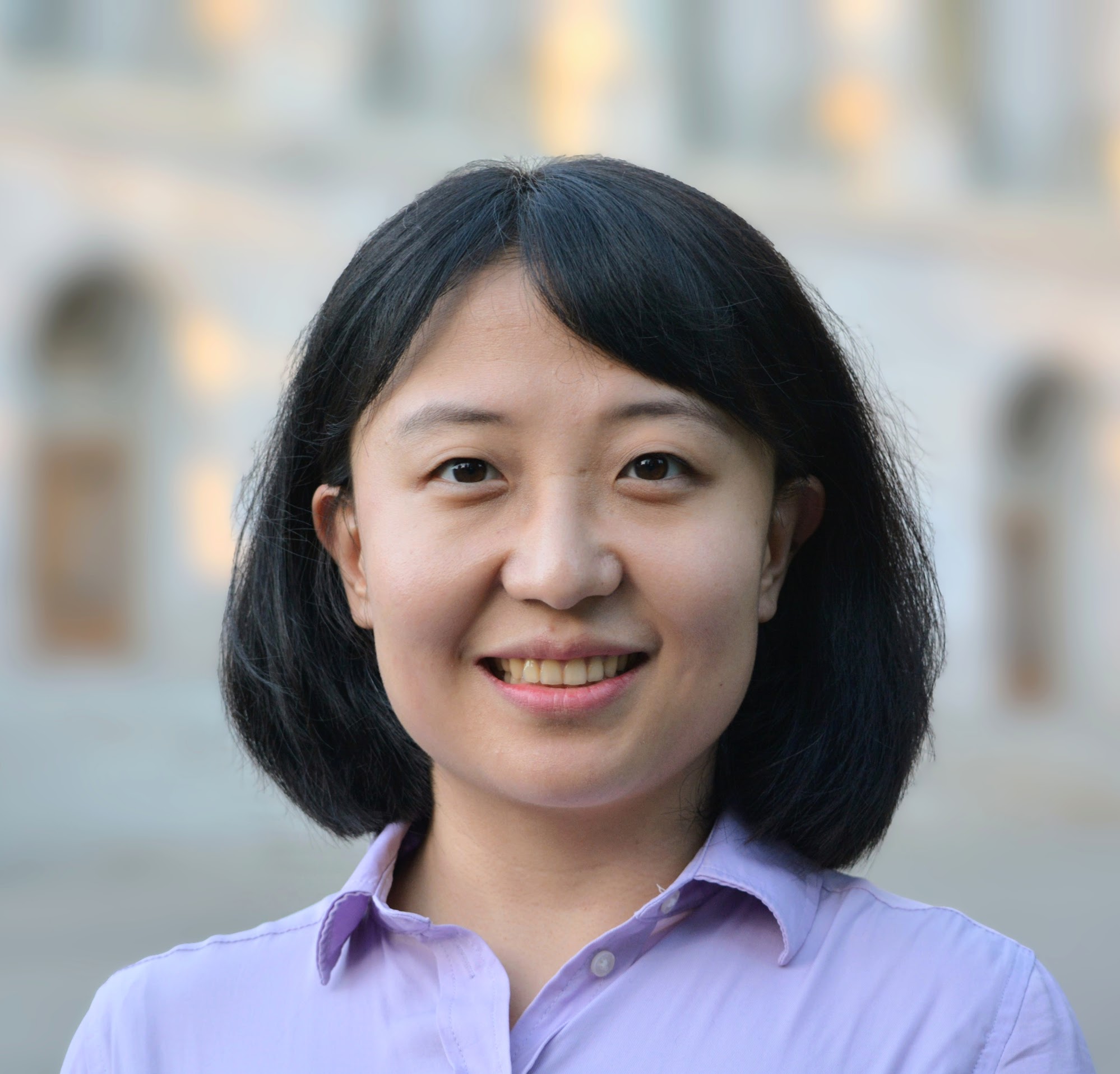

Bio: Sherry is a final year PhD student at UC Berkeley advised by Pieter Abbeel and a senior research scientist at Google DeepMind. Her research aims to develop machine learning models with internet-scale knowledge to make better-than-human decisions. To this end, she has developed techniques for generative modeling and representation learning from large-scale vision, language, and structured data, coupled with developing algorithms for sequential decision making such as imitation learning, planning, and reinforcement learning. Sherry initiated and led the Foundation Models for Decision Making workshop at NeurIPS 2022 and 2023, bringing together research communities in vision, language, planning, and reinforcement learning to solve complex decision making tasks at scale. Before her current role, Sherry received her Bachelor’s degree and Master’s degree from MIT advised by Patrick Winston and Julian Shun.

Abstract:

Humans can effortlessly construct rich mental representations of the 3D world from sparse input, such as a single image. This is a core aspect of intelligence that helps us understand and interact with our surroundings and with each other. My research aims to build similar computational models: artificial intelligence methods that can perceive properties of the 3D structured world from images and videos. Despite remarkable progress in 2D computer vision, 3D perception remains an open problem due to some unique challenges, such as limited 3D training data and uncertainties in reconstruction.

In this talk, I will discuss these challenges and explain how my research addresses them by posing vision as an inverse problem, and by designing machine learning models with physics-inspired inductive biases. I will demonstrate techniques for reconstructing 3D faces and objects, and for reasoning about uncertainties in scene reconstruction using generative models. I will then discuss how these efforts advance us toward scalable and generalizable visual perception and how they advance application domains such as robotics and computer graphics.

Bio:

Ayush Tewari is a postdoctoral researcher at MIT CSAIL with William Freeman, Vincent Sitzmann, and Joshua Tenenbaum. He previously completed his Ph.D. at the Max Planck Institute for Informatics, advised by Christian Theobalt. His research interests lie at the intersection of computer vision, computer graphics, and machine learning, focusing on 3D perception and its applications. Ayush was awarded the Otto Hahn medal from the Max Planck Society for his scientific contributions as a Ph.D. student.

Abstract: Decision-making in robotics domains is complicated by continuous state and action spaces, long horizons, and sparse feedback. One way to address these challenges is to perform bilevel planning, where decision-making is decomposed into reasoning about “what to do” (task planning) and “how to do it” (continuous optimization). Bilevel planning is powerful, but it requires multiple types of domain-specific abstractions that are often difficult to design by hand. In this talk, I will give an overview of my work on learning these abstractions from data. This work represents the first unified system for learning all the abstractions needed for bilevel planning. In addition to learning to plan, I will also discuss planning to learn, where the robot uses planning to collect additional data that it can use to improve its abstractions. My long-term goal is to create a virtuous cycle where learning improves planning and planning improves learning, leading to a very general library of abstractions and a broadly competent robot.

Bio: Tom Silver is a final year PhD student at MIT EECS advised by Leslie Kaelbling and Josh Tenenbaum. His research is at the intersection of machine learning and planning with applications to robotics and often uses techniques from task and motion planning, program synthesis, and neuro-symbolic learning. Before graduate school, he was a researcher at Vicarious AI and received his B.A. from Harvard with highest honors in computer science and mathematics in 2016. He has also interned at Google Research (Brain Robotics) and currently splits his time between MIT and the Boston Dynamics AI Institute. His work is supported by an NSF fellowship and an MIT presidential fellowship.

Title: “Assured robotic super-autonomy”

Abstract: In recent years, we have observed the rise of robotic super-autonomy, where computational control and machine learning methods enable agile robots to far exceed the performance of conventional autonomous systems. However, as powerful as these methods are, they often fail to provide the robustness and performance guarantees required for real-world deployment. In this talk, I will present some of the research I am leading at the Johns Hopkins University Applied Physics Laboratory (JHU/APL) to develop dramatically more capable robots that can operate at the very edge of physical and computational limits. In particular, I will present on our efforts to achieve agile autonomous flight with fixed-wing aerial vehicles for precision perching, landing, and high-speed navigation in constrained urban environments. I will also present our approaches for computing performance guarantees for these complex systems and control paradigms in the presence of environmental uncertainty and model mismatch. Finally, I will discuss future potential research directions for safe learning-based control, risk-aware multi-robot coordination, and assured control for nonlinear hybrid dynamical systems.

Bio: Dr. Joseph Moore is the Chief Scientist for Robotics in the Research and Exploratory Development Department at the Johns Hopkins University Applied Physics Laboratory (JHU/APL) and an Assistant Research Professor in the Mechanical Engineering Department at the JHU Whiting School of Engineering. Dr. Moore received his Ph.D. in Mechanical Engineering from the Massachusetts Institute of Technology, where he focused on control algorithms for robust post-stall perching with autonomous fixed-wing aerial vehicles. During his time at JHU/APL, Dr. Moore has developed control and planning strategies for hybrid unmanned aerial-aquatic vehicles, heterogeneous multi-robot teams, and aerobatic fixed-wing vehicles. He has served as the Principal Investigator for both Office of Naval Research (ONR) and Defense Advanced Research Projects Agency (DARPA) programs that have developed flight controllers for aggressive post-stall maneuvering with fixed-wing aerial vehicles to enable precision landing and high-speed navigation in constrained environments. He is also a Principal Investigator for the Army Research Lab (ARL) Tactical Behaviors for Autonomous Maneuver program, which seeks to enable tactical coordination of multi-robot teams in complex environments and terrains.

Host: Anton Dahbura

Zoom: Meeting ID 955 8366 7779; Passcode 530803

https://wse.zoom.us/j/95583667779

Model-Based Methods in Today’s Data-Driven Robotics Landscape

Seth Hutchinson, Georgia Tech

Abstract:

Data-driven machine learning methods are making advances in many long-standing problems in robotics, including grasping, legged locomotion, perception, and more. There are, however, robotics applications for which data-driven methods are less effective. Data acquisition can be expensive, time consuming, or dangerous — to the surrounding workspace, humans in the workspace, or the robot itself. In such cases, generating data via simulation might seem a natural recourse, but simulation methods come with their own limitations, particularly when nondeterministic effects are significant, or when complex dynamics are at play, requiring heavy computation and exposing the so-called sim2real gap. Another alternative is to rely on a set of demonstrations, limiting the amount of required data by careful curation of the training examples; however, these methods fail when confronted with problems that were not represented in the training examples (so-called out-of-distribution problems), and this precludes the possibility of providing provable performance guarantees.

In this talk, I will describe recent work on robotics problems that do not readily admit data-driven solutions, including flapping flight by a bat-like robot, vision-based control of soft continuum robots, a cable-driven graffiti-painting robot, and ensuring safe operation of mobile manipulators in HRI scenarios. I will describe some specific difficulties that confront data-driven methods for these problems, and describe how model-based approaches can provide workable solutions. Along the way, I will also discuss how judicious incorporation of data-driven machine learning tools can enhance performance of these methods.

Bio:

Seth Hutchinson is the Executive Director of the Institute for Robotics and Intelligent Machines at the Georgia Institute of Technology, where he is also Professor and KUKA Chair for Robotics in the School of Interactive Computing. Hutchinson received his Ph.D. from Purdue University in 1988, and in 1990 joined the University of Illinois in Urbana-Champaign (UIUC), where he was a Professor of Electrical and Computer Engineering (ECE) until 2017, serving as Associate Department Head of ECE from 2001 to 2007.

Hutchinson served as president of the IEEE Robotics and Automation Society (RAS) in 2020-21. He has previously served as a member of the RAS Administrative Committee, as the Editor-in-Chief for the “IEEE Transactions on Robotics,” and as the founding Editor-in-Chief of the RAS Conference Editorial Board. He has served on the organizing committees for more than 100 conferences, has more than 300 publications on the topics of robotics and computer vision, and is coauthor of the books “Robot Modeling and Control,” published by Wiley, “Principles of Robot Motion: Theory, Algorithms, and Implementations,” published by MIT Press, and the forthcoming “Introduction to Robotics and Perception,” to be published by Cambridge University Press. He is a Fellow of the IEEE.