Calendar

Continuum robots change their shape through elastic deformation rather than mechanical joints, and they often deform elastically under the forces typical of their applications. They have advantages in environments where the geometry may be complex and not well known in advance of operations, which is a common feature of many applications outside of factory settings. Continuum robots leverage contact and deformation to complete tasks, relying on passive mechanical behaviors in addition to software-based intelligence and traditional control systems. For example, robots with slender, snake-like, elastic bodies can navigate tortuous human anatomy such as the colon or the esophagus to perform surgery, or they can navigate through challenging industrial environments such as pipelines and machinery to perform “minimally invasive” inspection and maintenance. However, slender bodies and mechanical softness come with distinct engineering challenges. Many slender-bodied soft robots have adopted remote actuation approaches that suffer from exponentially worsening friction as they bend. Additionally, many approaches to actuation result in an undesirable coupling between actuators. In this seminar, I will describe our recent research that has focused on improving the understanding of continuum mechanism manipulator designs, models, and applications. Ongoing studies are aimed at (i) improving the design of electromechanically driven continuum robots; (ii) investigating methods to mitigate friction in long, slender devices; and (iii) improving modeling approaches for continuum robots.

Bio:

Hunter B. Gilbert received the B.S. degree in mechanical engineering in 2010 from Rice University (Houston, Texas), and the Ph.D. degree in mechanical engineering in 2016 from Vanderbilt University (Nashville, Tennessee). From 2016 to 2019, he was a postdoctoral fellow in the Physical Intelligence Department of the Max Planck Institute for Intelligent Systems (Stuttgart, Germany), supported by an Alexander von Humboldt Stiftung postdoctoral fellowship. He is currently an Associate Professor of Mechanical Engineering at Louisiana State University, where he is co-director of the Innovation in Control and Robotics Engineering (iCORE) research laboratory. He is an Associate Editor for the IEEE Robotics and Automation Letters and for Frontiers in Robotics and AI. His research interests are centered on several themes within applied mechanics and dynamic systems: mechanically “soft” or deformable robots, systems and technologies focused on human health and safety, and modeling of complex dynamic systems.

Eric Diller, Associate Professor, Department of Mechanical and Industrial Engineering, Robotics Institute, Institute of Biomedical Engineering (cross-appointed); University of Toronto

Abstract: Micro-scale mobile robots can physically access small spaces in a versatile and non-invasive manner. Such microrobots under several mm in size have potential unique applications for surgery, sensing and drug delivery in healthcare, microfactories and as scientific tools. These devices are powered and controlled remotely using externally-applied magnetic fields for motion in 3D. This talk will introduce how we design and produce these tiny machines, as well as how we create magnetic fields that can move them as functional robots inside the body. Moving microrobots for swimming, crawling and grasping powered by these magnetic fields will be shown, along with our progress towards medical applications for diagnosis in the gut, and in neurosurgery.

Eric Diller received the B.S. and M.S. degrees in mechanical engineering from Case Western Reserve University in 2010 and the Ph.D. degree in mechanical engineering from Carnegie Mellon University in 2013. He is currently Associate Professor in the Department of Mechanical and Industrial Engineering and the Robotics Institute at the University of Toronto, where he is director of the Microrobotics Laboratory. His research interests include micro-scale robotics, with a focus on fabrication and control for the remote actuation of micro-scale devices using magnetic fields, micro-scale robotic manipulation, and smart materials. He is an Associate Editor of the Journal of Micro-Bio Robotics, and received the IEEE Robotics & Automation Society 2020 Early Career Award. He has also received the 2018 Ontario Early Researcher Award, the University of Toronto Innovation Award, and the Canadian Society of Mechanical Engineering’s 2018 I.W. Smith Award for research contributions in medical microrobotics. He envisions an accessible future of medicine free of invasive colonoscopies, open surgery and long recoveries.

Lab website: http://microrobotics.mie.utoronto.ca/

Abstract:

As robotics increasingly integrates into our social and professional spheres, the question of how humans perceive and trust robots has gained prominence. Are robots regarded as utilitarian tools, designed to fulfill tasks efficiently, or are they embraced as teammates, eliciting human-like trust? Some argue that humans interact with robots in a way that resembles social interactions with other humans, a viewpoint aligned with the ‘computers are social actors’ (CASA) concept. Conversely, proponents of the robot as a tool view contend that humans perceive robots as non-human tools, promoting the use of human-to-automation theories and trust measures. In this presentation, we delve into these arguments and propose an empirical study aimed at shedding light on this debate.

Bio:

Dr. Robert is a Professor in the School of Information at the University of Michigan and holds a number of distinguished memberships, including AIS Distinguished Member Cum Laude and IEEE Senior Member. He obtained his Ph.D. in Information Systems from Indiana University, where he was a BAT Fellow and KPMG Scholar. Currently, he is the director of the Michigan Autonomous Vehicle Research Intergroup Collaboration (MAVRIC) and affiliated with various institutions, including the University of Michigan Robotics Institute, the National Center for Institutional Diversity at the University of Michigan, and the Center for Computer-Mediated Communication at Indiana University. Additionally, he is a member of the AAAS Community Advisory Board. Dr. Robert’s research interests revolve around human collaboration with technology, which is reflected in his published works in leading information systems and information science journals as well as notable computer and robotics conferences. His research has garnered numerous accolades, including best paper awards/nominations from the Journal of the Association of Information Systems, the ACM Conference on Computer-Supported Cooperative Work, SAE International, and the ACM/IEEE International Conference on Human–Robot Interaction. Dr. Robert has received research funding from various sources, such as the AAA Foundation, Automotive Research Center/U.S. Army, Army Research Laboratory, Toyota Research Institute, MCity, Lieberthal-Rogel Center for Chinese Studies, and the National Science Foundation. He has also been featured in print, radio, and television for major media outlets like ABC, CBS, CNN, CNBC, Michigan Radio, Inc., New York Times, and the Associated Press.

Abstract:

Improving the capabilities of robots in medicine requires innovation in both robot design and computational methods. In this talk, I will discuss recent research from my lab on both topics. I will present new continuum robot designs at both meso- and micro-scales intended for procedures in delicate tissues such as the brain and lungs. I will also present data-driven and model-driven algorithmic methods we have developed to model, control, and plan motions for continuum, deformable robots and deformable tissue in the human body.

Bio:

Alan Kuntz is an assistant professor in the Robotics Center and the Kahlert School of Computing at the University of Utah. He leads a highly interdisciplinary research lab consisting of computer scientists, mechanical engineers, electrical and computer engineers, and applied mathematicians. His research focuses on the design of automation and machine learning methods for robots and on the mechanical design and control of novel robotic systems with healthcare applications.

Prior to joining the University of Utah, he was a postdoctoral research scholar at Vanderbilt University in the Vanderbilt Institute for Surgery and Engineering and the Department of Mechanical Engineering. He holds a BS in Computer Science from the University of New Mexico and an MS and PhD in Computer Science from the University of North Carolina at Chapel Hill.

Abstract:

Soft and continuum robots have immense potential to assist humans with tasks that require navigation and manipulation in unstructured environments. In this talk, I present my group’s research on the design, modeling, and control of a variety of soft and continuum robots. I begin by discussing soft vine-inspired robots, which move through their environment by extending from their tip and are well suited for navigation and manipulation within confined spaces. In particular, I discuss our research on vine robot field deployment, shape sensing, force sensing, and collapse modeling. I then present our research on two other bioinspired robots: spider monkey tail-inspired robots for grasping objects, and amoeba-inspired robots for navigation in confined spaces. Finally, I discuss our research on soft wearable robots for replacing or assisting the motion of the upper limbs. This research helps make robots more capable of assisting humans in the unstructured environments of everyday life.

Bio:

Margaret Coad joined the faculty at the University of Notre Dame in the fall of 2021, and she is currently an Assistant Professor of Aerospace and Mechanical Engineering. She leads the Innovative Robotics and Interactive Systems (IRIS) Lab, which explores the design, modeling, and control of innovative robotic systems to improve human health, safety, and productivity; she also teaches courses in robotics and soft robotics. Prior to joining Notre Dame, she completed her Ph.D. degree in 2021 and M.S. degree in 2017 in Mechanical Engineering at Stanford University under the direction of Professor Allison Okamura, and her B.S. degree in 2015 in Mechanical Engineering at MIT. She won the Robotics and Automation Magazine Best Paper Award for 2020 for her work on vine robots, and she has been a finalist for several Best Paper Awards at international robotics conferences. Outside of academics, she plays ultimate frisbee and sings in choir.

Abstract:

This seminar will provide PhD students and postdocs with some information on how to navigate the academic job market. The seminar will touch on 1) benefits and possible challenges of the academic career path, 2) the many aspects of the academic job search (such as timing, required documents, interview schedule, …), and 3) the essential tasks junior faculty (and people aspiring to be) must master quickly.

Abstract:

The da Vinci Research Kit (dVRK) is an open research platform that couples open-source control electronics and software with the mechanical components of the da Vinci surgical robot. This presentation will describe the dVRK system architecture, followed by selected research enabled by this system, including mixed reality for the first assistant, autonomous camera motion, and force estimation for bilateral teleoperation. The presentation will conclude with an overview of the AccelNet Surgical Robotics Challenge, which includes both simulated and physical environments.

Bio:

Peter Kazanzides received the Ph.D. degree in electrical engineering from Brown University in 1988. He began work on surgical robotics in March 1989 as a postdoctoral researcher with Russell Taylor at the IBM T.J. Watson Research Center and co-founded Integrated Surgical Systems (ISS) in November 1990. As Director of Robotics and Software at ISS, he was responsible for the design, implementation, validation and support of the ROBODOC System, which has been used for more than 20,000 hip and knee replacement surgeries. Dr. Kazanzides joined Johns Hopkins University in December 2002 and currently holds an appointment as a Research Professor of Computer Science. His research focuses on computer-integrated surgery, space robotics and mixed reality.

Dimitri Lezcano, M.S.E.

PhD Candidate

Laboratory for Computational Sensing and Robotics

Title: Shape-Sensing, Shape-Prediction and Sensor Location Optimization of FBG-Sensorized Needles

Abstract:

Needle insertion is common in surgical interventions including biopsy, cryoablation, and injection. To reduce tissue damage and avoid vital organs, asymmetric bevel-tipped flexible needles are commonly used for needle insertion. Accurate needle placement in minimally invasive surgeries requires modalities for determining the needle position during insertion. Current modalities for 3D needle positioning, such as magnetic resonance imaging, computed tomography, and ultrasound, are too slow, require large amounts of radiation over sustained periods, and/or are not precise enough. Embedding fiber Bragg grating (FBG) sensors in flexible bevel-tipped needles enables shape sensing for accurate, real-time needle localization during insertion. Our shape-sensing model leverages Lie group theory and curvature sensing not only to provide accurate 3D shape sensing, but also to perform shape prediction during needle insertion. The experiments presented will demonstrate our model’s effectiveness in determining and predicting needle shape in isotropic phantom tissue using a novel hybrid deep learning and model-based approach. The talk will also cover constructive optimization of FBG sensor placement, along with a framework for stochastically modeling needle shape sensing.

Bio:

Dimitri Lezcano is a fifth-year PhD candidate in LCSR’s Advanced Medical Instrumentation and Robotics Laboratory at Johns Hopkins University, working with Professor Iulian Iordachita and Professor Jin Seob Kim. He received a B.A. in Physics and Mathematics from McDaniel College (2015) and an M.S.E. in Robotics (2020) from Johns Hopkins University. His research focuses on the instrumentation and application of flexible, shape-sensing needles in minimally invasive surgical interventions.

Wangqu Liu

Ph.D. Candidate, Gracias Lab

Department of Chemical and Biomolecular Engineering

Title: Autonomous Untethered Microinjectors for Gastrointestinal Delivery of Insulin

Abstract:

Delivering macromolecular drugs like insulin via the gastrointestinal (GI) tract is challenging due to the low stability of these drugs and their poor absorption through the tight GI epithelium. An innovative approach has been developed using untethered microscopic robots to break the epithelial barrier and improve the delivery of these drugs.

In this talk, I will present our research on autonomous untethered micro-robotic injectors for the gastrointestinal delivery of insulin. These submillimeter-sized microinjectors utilize thermally activated, prestressed thin films that function like micro-spring-loaded latches. Once triggered by body temperature, the arms of microinjectors self-fold. The shape-changing motion allows the injection tip to penetrate the GI epithelium, efficiently delivering insulin with bioavailability in line with intravenous injection. Due to their small size, tunability in sizing and dosing, wafer-scale fabrication, and parallel, autonomous operation, we anticipate these microinjectors will significantly advance drug delivery across the GI tract mucosa to the systemic circulation safely. We will conclude the talk by discussing the future development of ingestible active drug delivery systems incorporating microinjectors.

Bio:

Wangqu is a Ph.D. candidate in the Chemical and Biomolecular Engineering department, advised by Prof. David Gracias. His work mainly focuses on the development of shape-morphing microdevices and related systems for biomedical applications such as drug delivery, minimally invasive surgery, and biopsy. He received a B.Eng. in Chemical Engineering from Beijing Forestry University (2017), where he focused on developing biomass-based sustainable nanomaterials for water treatment. He later joined the Johns Hopkins University for his M.Sc. (2019), where he worked on developing 3D printing functional hydrogel materials and shape-changing structures.

Abstract:

Complex and unstructured environments pose several challenges for traditional rigid robot technologies.

Inspired by biological systems, soft robots offer a promising alternative with respect to their rigid counterparts and demonstrate increased resilience and adaptation, resulting in machines that can safely interact with natural environments.

Mimicking how biological systems use their soft and dexterous body to interact with and exploit their surroundings entails addressing multiple fundamental challenges related to the design, manufacturing, and control of soft robots.

In this talk, I will present our research on developing new manufacturing methods to enable the fabrication of multi-degrees-of-freedom soft robots with distributed actuation and multiscale features.

I will also discuss opportunities and challenges arising in deploying multi-degrees-of-freedom soft robots in the real world. Specifically, I will introduce our work on methods to embed control and computational capabilities onboard soft robots to increase their autonomy, focusing on our efforts towards enabling electronic control of multi-DoF fluidic soft robots.

Finally, I will present our work on the application of soft robotic technologies in minimally invasive surgery. I will discuss various applications, including atraumatic manipulation of large abdominal organs and accurate and effective manipulation of delicate structures inside the beating heart.

Bio:

Tom Ranzani received a Bachelor’s and Master’s degree in Biomedical Engineering from the University of Pisa, Italy. He did his Ph.D. at the BioRobotics Institute of the Sant’Anna School of Advanced Studies. In 2014, he joined the Wyss Institute for Biologically Inspired Engineering at the Harvard John A. Paulson School of Engineering and Applied Sciences as a postdoctoral fellow.

He is currently an Assistant Professor in the Departments of Mechanical Engineering and Biomedical Engineering and in the Division of Materials Science and Engineering at Boston University, where he established the Morphable Biorobotics Lab in 2018.

In 2020 he was awarded the NIH Trailblazer Award for New and Early Stage Investigators.

His research focuses on soft and bioinspired robotics with applications ranging from underwater exploration to surgical and wearable devices. He is interested in expanding the potential of soft robots across different scales to develop novel reconfigurable soft-bodied robots capable of operating in environments where traditional robots cannot.

Recent technological advances in the field of surgical robotics have resulted in the development of a range of new techniques and technologies that have reduced patient trauma, shortened hospitalization, and improved diagnostic accuracy and therapeutic outcome. Despite the many appreciated benefits of robot-assisted minimally invasive surgery (MIS), there are still significant drawbacks associated with these technologies, including limitations in the dexterity, intelligence, and autonomy of the developed robotic devices and in the prognosis design of medical devices and implants.

The dexterity limitation is associated with poor accessibility to the areas of interest and with insufficient instrument control and ergonomics caused by the rigidity of conventional instruments and implants. In other words, the ability to adequately access different target anatomy is still the main challenge of MIS and endoscopic procedures, demanding specialized instrumentation, sensing, and control paradigms.

To enhance the safety of robot-assisted procedures, current robotics research is also exploring new ways of providing synergistic intelligent semi/autonomous control between the surgeon and the robot. In this context, the robot can perform certain surgical tasks autonomously under the supervision of the surgeon. However, such autonomy requires understanding not only the robot’s perception and adaptation to the dynamically changing environment of the tissue, but also the mental workload and decision-making state of the surgeon as the decision-maker and key component of this system. This demands Surgeon-Centric Brain-In-the-Loop Autonomous Control techniques.

To address these challenges, this talk covers our efforts towards engineering of surgery (surgineering) and bringing dexterity and autonomy to various robot-assisted minimally invasive surgical procedures. In particular, I will discuss our efforts towards enhancing the existing paradigm in spinal fixation, colorectal cancer diagnosis, and bioprinting of volumetric muscle loss injuries using continuum manipulators, soft sensors, flexible implants, and semi/autonomous intelligent surgical robotic systems.

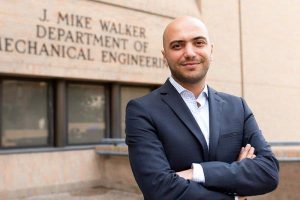

Bio:

Dr. Farshid Alambeigi has been an Assistant Professor in the Walker Department of Mechanical Engineering at the University of Texas at Austin since August 2019. He is also a core faculty member of Texas Robotics. Dr. Alambeigi received his Ph.D. in Mechanical Engineering from the Johns Hopkins University in 2019. He also holds an M.Sc. degree (2017) in Robotics from the Johns Hopkins University. In the summer of 2018, Dr. Alambeigi received the 2019 SIEBEL Scholarship for academic excellence and demonstrated leadership. In 2020, Dr. Alambeigi received the NIH NIBIB Trailblazer Career Award to develop novel flexible implants and robots for minimally invasive spinal fixation surgery. He has also received the prestigious 2022 NIH Director’s New Innovator Award to develop an in vivo bioprinting surgical robotic system for treatment of volumetric muscle loss.

At The University of Texas at Austin, Dr. Alambeigi directs the Advanced Robotic Technologies for Surgery (ARTS) Lab. Dr. Alambeigi’s research focuses on developing high-dexterity and situationally aware continuum manipulators, soft robots, and appropriate instruments and sensors designed for less/minimally invasive treatment in various medical applications. Utilizing these novel surgical instruments together with intelligent control algorithms, the ARTS Lab, in collaboration with the UT Dell Medical School, will work toward engineering of the surgery (Surgineering) and partnering dexterous intelligent robots with surgeons. Ultimately, our goal is to augment clinicians’ skills and the quality of surgery to further improve patient safety and outcomes.