Calendar

Participants from the public and private sectors are invited to attend the JHU Robotics Industry Day, hosted by the Laboratory for Computational Sensing and Robotics, to see cutting-edge robotics research and explore university-corporate partnerships.

Meet robotics experts at Hopkins and discuss furthering robotics research, education and commercialization in healthcare, manufacturing, defense, space exploration, environmental science and transportation.

Agenda

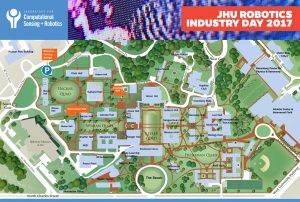

- 08:00 AM Registration/continental breakfast – Mason Hall, Homewood Campus

- 08:30 AM Welcome by Ed Schlesinger, Dean, Whiting School of Engineering

- 08:40 AM Introduction to LCSR – Russell Taylor, Director

- 09:10 AM Overview and Updates of LCSR Robotics Research

  - Medical Robotics & Computer Assisted Surgery – Russell Taylor

  - Human Machine Collaborative Systems – Gregory Hager

  - Robots for Extreme Environments – Louis Whitcomb

  - Robotics and Biological Systems – Noah Cowan

- 10:30 AM Break

- 10:45 AM LCSR Highlight Talks

- 11:30 AM LCSR Education Programs

- 11:40 AM LCSR Spin Off Companies

- 12:00 Noon JHU/LCSR Industry Programs

  - Partner Program

  - Fellowship Program

  - Funding Mechanisms for University/Industry Collaborations

- 12:30 PM Lunch, Poster and Demo Session, Lab Tours, and Meet Students and Faculty – Hackerman B08

The Laboratory for Computational Sensing and Robotics will highlight its elite robotics students and showcase cutting-edge research projects in areas that include Medical Robotics, Extreme Environments Robotics, Human-Machine Systems for Manufacturing, BioRobotics and more. JHU Robotics Industry Day will take place from 8 a.m. to 3 p.m. in Hackerman Hall on the Homewood Campus at Johns Hopkins University.

Robotics Industry Day will provide top companies and organizations in the private and public sectors with access to the LCSR’s forward-thinking, solution-driven students. The event will also serve as an informal opportunity to explore university-industry partnerships.

You will experience dynamic presentations and discussions, observe live demonstrations, and participate in speed networking sessions that give you the opportunity to meet Johns Hopkins' most talented robotics students before they graduate.

Please contact Rose Chase if you have any questions.

Schedule of Events

(times are subject to change)

LEVERING GREAT HALL

8:00 Registration and Continental Breakfast

8:30 Welcome: Larry Nagahara, Associate Dean for Research, JHU

8:35 Introduction to LCSR: Director Russell H. Taylor

8:55 Research and Commercialization Highlights

9:00 Louis Whitcomb, LCSR

9:10 Noah Cowan & Erin Sutton, LCSR

9:20 Marin Kobilarov, LCSR

9:30 Philipp Stolka, Clear Guide Medical

9:40 Mehran Armand, APL and LCSR

9:50 Stephen L. Hoffman, Sanaria, Inc.

10:00 COFFEE BREAK

10:10 Bernhard Fuerst, LCSR

10:20 Bruce Lichorowic, Galen Robotics

10:30 David Narrow, Sonavex, Inc.

10:40 Kelleher Guerin & Benjamin Gibbs, READY Robotics

10:50 Promit Roy, Max and Haley LLC

11:00 John Krakauer, Malone Center for Engineering in Healthcare Update, JHU

11:10 New Faculty Talks

11:10 – Muyinatu Bell

11:30 – Jeremy D. Brown

HACKERMAN HALL B17 LOBBY

12:00-1:15 LUNCH

HACKERMAN HALL ROBOTORIUM

12:00-1:15 Poster + Demo Sessions

HACKERMAN HALL B17

1:15-3:00 Student and Industry Speed Networking

Abstract

This is the Fall 2017 Kick-Off Seminar, presenting an overview of LCSR, useful information, and an introduction to the faculty and labs.

Abstract

Robots hold promise in assisting people in a variety of domains including healthcare services, household chores, collaborative manufacturing, and educational learning. In supporting these activities, robots need to engage with humans in cooperative interactions in which they work together toward a common goal in a socially intuitive manner. Such interactions require robots to coordinate actions, predict task intent, direct attention, and convey relevant information to human partners. In this talk, I will present how techniques in human-computer interaction, artificial intelligence, and robotics can be applied in a principled manner to create and study intuitive interactions between humans and robots. I will demonstrate social, cognitive, and task benefits of effective human-robot teams in various application contexts. I will discuss broader impacts of my research, as well as future directions of my research focusing on intuitive computing.

Bio

Chien-Ming Huang is an Assistant Professor of Computer Science in the Whiting School of Engineering at The Johns Hopkins University. His research seeks to enable intuitive interactions between humans and machines to augment human capabilities. Dr. Huang received his Ph.D. in Computer Science at the University of Wisconsin–Madison in 2015, his M.S. in Computer Science at the Georgia Institute of Technology in 2010, and his B.S. in Computer Science at National Chiao Tung University in Taiwan in 2006. His research has been awarded a Best Paper Runner-Up at Robotics: Science and Systems (RSS) 2013 and has received media coverage from MIT Technology Review, Tech Insider, and Science Nation.

Abstract

SimpleITK is a simplified, open source, multi-language interface to the National Library of Medicine’s Insight Segmentation and Registration Toolkit (ITK), a C++ open source image analysis toolkit that is widely used in academia and industry. SimpleITK is available in multiple programming languages, including Python, R, Java, C#, C++, Lua, Ruby, and Tcl. Binary versions of the toolkit are available for the GNU/Linux, Apple OS X, and Microsoft Windows operating systems. For researchers, the toolkit facilitates rapid prototyping and evaluation of image-analysis workflows with minimal effort using their programming language of choice. For educators and students, the toolkit’s concise interface and support of scripting languages facilitate experimentation with well-known algorithms, allowing them to focus on algorithmic understanding rather than low-level programming skills.

The toolkit development process follows best software engineering practices, including code reviews and continuous integration testing, with results displayed online so that everyone can gauge the status of the current code and of any code under consideration for incorporation into the toolkit. User support is available through a dedicated mailing list, the project’s Wiki, and on GitHub. The source code is freely available on GitHub under an Apache-2.0 license (github.com/SimpleITK/SimpleITK). In addition, we provide a development environment that supports collaborative research and educational activities in the Python and R programming languages using the Jupyter notebook web application. It too is freely available on GitHub under an Apache-2.0 license (github.com/InsightSoftwareConsortium/SimpleITK-Notebooks).

The first part of the presentation will describe the motivation underlying the development of SimpleITK, its development process and its current state. The second part of the presentation will be a live demonstration illustrating the capabilities of SimpleITK as a tool for reproducible research.

Bio

Dr. Ziv Yaniv is a senior computer scientist with the Office of High Performance Computing and Communications at the National Library of Medicine, and at TAJ Technologies Inc. He obtained his Ph.D. in computer science from The Hebrew University of Jerusalem, Jerusalem, Israel. Previously he was an assistant professor in the Department of Radiology, Georgetown University, and a principal investigator at Children’s National Hospital in Washington, DC. He was chair of SPIE Medical Imaging: Image-Guided Procedures, Robotic Interventions, and Modeling (2013-2016) and program chair for the Information Processing in Computer Assisted Interventions (IPCAI) 2016 conference.

He believes in the curative power of open research and has been actively involved in the development of several free, open source toolkits, including the Image-Guided Surgery Toolkit (IGSTK), the Insight Segmentation and Registration Toolkit (ITK), and SimpleITK.