Calendar

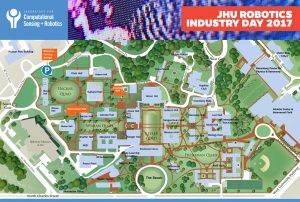

The Laboratory for Computational Sensing and Robotics will highlight its elite robotics students and showcase cutting-edge research projects in areas that include Medical Robotics, Extreme Environments Robotics, Human-Machine Systems for Manufacturing, BioRobotics and more. JHU Robotics Industry Day will take place from 8 a.m. to 3 p.m. in Hackerman Hall on the Homewood Campus at Johns Hopkins University.

Robotics Industry Day will provide top companies and organizations in the private and public sectors with access to the LCSR’s forward-thinking, solution-driven students. The event will also serve as an informal opportunity to explore university-industry partnerships.

You will experience dynamic presentations and discussions, observe live demonstrations, and participate in speed networking sessions that afford you the opportunity to meet Johns Hopkins’ most talented robotics students before they graduate.

Please contact Rose Chase if you have any questions.

Schedule of Events

(times are subject to change)

LEVERING GREAT HALL

8:00 Registration and Continental Breakfast

8:30 Welcome: Larry Nagahara, Associate Dean for Research, JHU

8:35 Introduction to LCSR: Director Russell H. Taylor

8:55 Research and Commercialization Highlights

9:00 Louis Whitcomb, LCSR

9:10 Noah Cowan & Erin Sutton, LCSR

9:20 Marin Kobilarov, LCSR

9:30 Philipp Stolka, Clear Guide Medical

9:40 Mehran Armand, APL and LCSR

9:50 Stephen L. Hoffman, Sanaria, Inc.

10:00 COFFEE BREAK

10:10 Bernhard Fuerst, LCSR

10:20 Bruce Lichorowic, Galen Robotics

10:30 David Narrow, Sonavex, Inc.

10:40 Kelleher Guerin & Benjamin Gibbs, READY Robotics

10:50 Promit Roy, Max and Haley LLC

11:00 John Krakauer, Malone Center for Engineering in Healthcare Update, JHU

11:10 New Faculty Talks

11:10 – Muyinatu Bell

11:30 – Jeremy D. Brown

HACKERMAN HALL B17 LOBBY

12:00-1:15 LUNCH

HACKERMAN HALL ROBOTORIUM

12:00-1:15 Poster + Demo Sessions

HACKERMAN HALL B17

1:15-3:00 Student and Industry Speed Networking


Spring Break – No Seminar

Abstract

Coming Soon

Bio

Coming Soon

Abstract

Robotic platforms now deliver vast amounts of sensor data from large unstructured environments. In attempting to process and interpret this data there are many unique challenges in bridging the gap between prerecorded datasets and the field. This talk will present recent work addressing the application of deep learning techniques to robotic perception. Deep learning has pushed successes in many computer vision tasks through the use of standardized datasets. We focus on solutions to several novel problems that arise when attempting to deploy such techniques on fielded robotic systems. The themes of the talk are twofold: 1) How can we integrate such learning techniques into the traditional probabilistic tools that are well known in robotics? and 2) Are there ways of avoiding the labor-intensive human labeling required for supervised learning? These questions give rise to several lines of research based around dimensionality reduction, adversarial learning, and simulation. We will show this work applied to three domains: self-driving cars, acoustic localization, and optical underwater reconstruction. This talk will show results on field data from the monitoring of Australia’s Coral Reefs, the archeological mapping of a 5,000-year-old submerged city, and the operation of a level-4 self-driving car in urban environments.

Bio

Matthew Johnson-Roberson is Assistant Professor of Engineering in the Department of Naval Architecture & Marine Engineering and the Department of Electrical Engineering and Computer Science at the University of Michigan. He received a PhD from the University of Sydney in 2010. There he worked on Autonomous Underwater Vehicles for long-term environment monitoring. Upon joining the University of Michigan faculty in 2013, he created the DROP (Deep Robot Optical Perception) Lab, which researches a wide variety of perception problems in robotics including SLAM, 3D reconstruction, scene understanding, data mining, and visualization. He has held prior postdoctoral appointments with the Centre for Autonomous Systems – CAS at KTH Royal Institute of Technology in Stockholm and the Australian Centre for Field Robotics at the University of Sydney. He is a recipient of the NSF CAREER award (2015).

Abstract

Deep networks are very successful at many visual tasks, but their performance still falls far short of human visual abilities. Humans can learn from a few examples with very weak supervision, adapt to unknown factors like occlusion, and generalize from objects we know to objects we do not. This talk will describe some state-of-the-art work on deep networks and discuss some of their limitations.

Bio

Alan Yuille received his B.A. in mathematics from the University of Cambridge in 1976, and completed his Ph.D. in theoretical physics at Cambridge in 1980. He then held a postdoctoral position with the Physics Department, University of Texas at Austin, and the Institute for Theoretical Physics, Santa Barbara. He then became a research scientist at the Artificial Intelligence Laboratory at MIT (1982-1986) and followed this with a faculty position in the Division of Applied Sciences at Harvard (1986-1995), rising to the position of associate professor. From 1995-2002 he worked as a senior scientist at the Smith-Kettlewell Eye Research Institute in San Francisco. From 2002-2016 he was a full professor in the Department of Statistics at UCLA with joint appointments in Psychology, Computer Science, and Psychiatry. In 2016 he became a Bloomberg Distinguished Professor in Cognitive Science and Computer Science at Johns Hopkins University. He has won a Marr Prize and a Helmholtz Prize, and he is a Fellow of IEEE.

Abstract

The sophistication of Unmanned Aerial Vehicles (UAVs), otherwise known as drones, is increasing while their cost is decreasing and quickly approaching consumer prices. This technology, like most others, adds tremendous value to humanity but also poses challenges. This dichotomy has motivated our research since the early 90s to develop more capable platforms and, more recently, to explore technologies that can mitigate the risks associated with drone proliferation. We will present examples of our work on both sides of this spectrum. One particular area with tremendous potential impact is the sensor and processing (payload) side. We have been thought leaders on computational sensors and are on a path to reaching size, weight, and power constraints commensurate with or exceeding those of biological equivalents. This revolution in integrated sensing and computing is likely to enable a new class of autonomous and very capable systems. In particular, we are exploring the interface between biological and engineered systems. Biological creatures are highly efficient, autonomous, and mobile with minimal sensory requirements. Their endurance and mobility remain unmatched, especially as size decreases, and this is the subject of intense research. We believe that solutions that build on the best of both worlds may produce better performance than either on its own, and our focus is on the optimal integration of engineered payloads with natural hosts. Another complementary area of our research is small robotics, with a recent focus on endoscopic medical procedures. In particular, we are developing a self-propelled aiding endoscope based on biomimetic peristaltic locomotion, and potential solutions may reside in what is becoming known as soft robotics.

Bio

Dr. Rizk is currently an Associate Research Professor for JHU ECE, a lecturer for JHU ME, a Science and Technology (S&T) and Innovation consultant for JHU APL, local industries, and government leadership, and an entrepreneur. Prior to November 2016, he was a Principal Staff, Systems/Lead Engineer, S&T Advisor, Innovation Lead, member of the S&T committee, and member of the Innovation Steering Group for the Air and Missile Defense Sector at APL. He has had 15 intellectual property filings since 2014 and received 9 internal and external achievement awards. He has been recognized as a top innovator, thought leader, and successful Principal Investigator, and has demonstrated an effective model for R&D that yielded multiple innovative and far-reaching concepts and technologies. He was a pioneer in UAV technology and led a small team that developed and demonstrated the first four-rotor (quadcopter) UAV system in the early 90s. More recently, he has been the forerunner in developing a new multi-mode / multi-mission sensor architecture that is low C-SWaP and likely to revolutionize the associated missions/applications space and platforms. In addition, he is currently developing a new vision for future unmanned systems. Dr. Rizk has been teaching the Mechatronics courses at JHU since Spring 2015 and is developing a new design course to be offered in Fall 2017, for which he was awarded a teaching innovation grant. During his APL tenure, he also provided systems engineering and S&T support to senior DOD leadership and large acquisition programs. In addition to providing effective technical, innovative, and mentoring leadership and management, Dr. Rizk has demonstrated a collaborative spirit, successfully working with various FFRDCs, government labs, academia, and industry of various sizes. He also made key contributions during his time at Rockwell Aerospace, McDonnell Douglas, and Boeing. He is a senior member of IEEE and AIAA and a member of AUVSI.

Abstract

Image-guided therapy is a clinical procedure performed under 2-D or 3-D image guidance, such as MRI or CT, to accurately deliver surgical devices to diseased or cancerous tissue. This emerging field is interdisciplinary, combining robotics, computer science, engineering and medicine. Image-guided therapy allows faster, safer and more accurate minimally invasive surgery and diagnosis. In this talk, Dr. Tse will present the technological challenges in the field, followed by his research in MRI-guided therapy for brachytherapy, ablation and stem cell treatment in the prostate, the heart and the spine. These procedures draw on the latest imaging and robotic technology in minimally invasive therapy.

Bio

Dr. Zion Tse is an Assistant Professor in the College of Engineering and the Principal Investigator of the Medical Robotics Lab at the University of Georgia. Formerly, he was a visiting scientist in the Center for Interventional Oncology at the National Institutes of Health, and a research fellow in the Radiology Department at Harvard Medical School, Brigham and Women’s Hospital. He received his PhD in Medical Robotics from Imperial College London, UK. His academic and professional experience relates to mechatronics, medical devices and surgical robotics. Dr. Tse has designed and prototyped a broad range of novel clinical devices, most of which have been tested in animal and human trials.

Abstract

Human-controlled robotic systems can greatly improve healthcare by synthesizing information, sharing knowledge with the human operator, and assisting with the delivery of care. This talk will highlight projects related to new technology for surgical simulation and training, as well as a more in-depth discussion of a novel teleoperated robotic system that enables complex needle-based medical procedures that are currently not possible. Central to this work is understanding how to integrate the human with the physical system in an intuitive and natural way, and how to leverage the relative strengths of the human and the mechatronic system to improve outcomes.

Bio

Ann Majewicz completed B.S. degrees in Mechanical Engineering and Electrical Engineering at the University of St. Thomas, the M.S.E. degree in Mechanical Engineering at Johns Hopkins University, and the Ph.D. degree in Mechanical Engineering at Stanford University. Dr. Majewicz joined the Department of Mechanical Engineering as an Assistant Professor in August 2014, where she directs the Human-Enabled Robotic Technology Laboratory. She holds a courtesy appointment in the Department of Surgery at UT Southwestern Medical Center. Her research interests focus on the interface between humans and robotic systems, with an emphasis on improving the delivery of surgical and interventional care, both for the patient and the provider.

Abstract

This is the Fall 2017 Kick-Off Seminar, presenting an overview of LCSR, useful information, and an introduction to the faculty and labs.

Abstract

Robots hold promise in assisting people in a variety of domains including healthcare services, household chores, collaborative manufacturing, and educational learning. In supporting these activities, robots need to engage with humans in cooperative interactions in which they work together toward a common goal in a socially intuitive manner. Such interactions require robots to coordinate actions, predict task intent, direct attention, and convey relevant information to human partners. In this talk, I will present how techniques in human-computer interaction, artificial intelligence, and robotics can be applied in a principled manner to create and study intuitive interactions between humans and robots. I will demonstrate social, cognitive, and task benefits of effective human-robot teams in various application contexts. I will also discuss the broader impacts of my research and its future directions, which focus on intuitive computing.

Bio

Chien-Ming Huang is an Assistant Professor of Computer Science in the Whiting School of Engineering at The Johns Hopkins University. His research seeks to enable intuitive interactions between humans and machines to augment human capabilities. Dr. Huang received his Ph.D. in Computer Science at the University of Wisconsin–Madison in 2015, his M.S. in Computer Science at the Georgia Institute of Technology in 2010, and his B.S. in Computer Science at National Chiao Tung University in Taiwan in 2006. His research has been awarded a Best Paper Runner-Up at Robotics: Science and Systems (RSS) 2013 and has received media coverage from MIT Technology Review, Tech Insider, and Science Nation.