Calendar

Abstract

Coming Soon

Bio

Coming Soon

Abstract

Catheters play a key role in diagnosing and treating cardiac arrhythmia. Intracardiac echo (ICE) catheters enable real-time 2D ultrasound image acquisition from within the heart; however, manually steering ICE catheters inside a beating heart is a complex and time-consuming task. The clinical use of ICE catheters is therefore limited to only a few critical tasks, such as septal puncture. At the Harvard BioRobotics Lab, we built a robotic system that can automatically steer four-degree-of-freedom catheters, enabling real-time tracking of instruments within the heart and 3D visualization of cardiac tissue. In this talk, I will walk you through the design process in preparing our system for in vivo trials and present results from our latest live animal experiment. I will describe the control strategies we employed to accurately steer these flexible manipulators in the presence of external disturbances (e.g., respiratory motion) and unmodeled motion of the catheter body. Finally, I will describe the GPU-accelerated image processing pipeline we used to generate 3D volumetric images of the heart in real time from the 2D images acquired by the ICE catheter.

Bio

Alperen Degirmenci is a PhD candidate in Engineering Sciences at the Harvard John A. Paulson School of Engineering and Applied Sciences. He has been working in the BioRobotics Laboratory since 2012 under the supervision of Prof. Robert D. Howe. Alperen earned his M.S. degree from Harvard University in 2015, and a B.S. degree in Mechanical Engineering from the Johns Hopkins University in 2012, with minors in mathematics, computer science, robotics, and computer-integrated surgery. Alperen’s research at Harvard focuses on real-time, high-performance algorithm development for medical ultrasound image processing and robotic procedure guidance in catheter-based cardiac interventions.

Abstract

Coming Soon

Bio

Coming Soon

Abstract

Patients with peripheral field loss complain of colliding with other pedestrians in open-space environments such as shopping malls. Field expansion devices (e.g., prisms) can create artificial peripheral islands of vision. We investigated the visual angle at which these islands can be most effective for avoiding pedestrian collisions by modeling the collision risk density as a function of the bearing angle of pedestrians relative to the patient. Pedestrians at all possible locations were assumed to be moving in all directions with equal probability, within a reasonable range of walking speeds. The risk density was found to be highly anisotropic, peaking at ≈45° eccentricity. Increasing the pedestrian speed range shifted the risk to higher eccentricities. The risk density is independent of time to collision. The model results were compared with the binocular residual peripheral island locations of 42 patients with forms of retinitis pigmentosa. The prevalence of natural residual islands also peaked at about 45° nasally, but at about 80° temporally. This asymmetry results in complementary coverage of the binocular field of view. Field expansion prism devices will be most effective if they can create artificial peripheral islands at about 45° eccentricity. The collision risk and residual island findings raise interesting questions about normal visual development.

Bio

Dr. Eli Peli earned a BSc in Electrical Engineering and an MSc in Biomedical Engineering from the Technion Israel Institute of Technology. He then came to Boston, where he received his OD degree from the New England College of Optometry. Currently, Dr. Peli is the Moakley Scholar in Aging Eye Research at Schepens Eye Research Institute, Massachusetts Eye and Ear, and Professor of Ophthalmology at Harvard Medical School. He also serves as Adjunct Professor of Ophthalmology at Tufts University School of Medicine. Since 1983 he has been caring for visually impaired patients as the director of the Vision Rehabilitation Service at the New England Medical Center Hospitals (now Tufts Medical Center). Dr. Peli is a Fellow of the American Academy of Optometry, the Optical Society of America, the SID (Society for Information Display), and the SPIE (The International Society of Optical Engineering). He received the 2001 Glenn A. Fry Lecture Award and the 2009 William Feinbloom Award from the American Academy of Optometry, the 2004 Alfred W. Bressler Prize in Vision Science (shared with Dr. R. Massof) from the Jewish Guild for the Blind, the 2006 Pisart Vision Award from Lighthouse International, the 2009 Alcon Research Institute award (shared with Dr. R. Massof), the 2010 Otto Schade Prize from the SID, and the 2010 Edwin H. Land Medal, awarded jointly by the Optical Society of America and the Society for Imaging Science and Technology. He was awarded an Honorary Degree of Master in Medicine by Harvard Medical School in 2002 and an Honorary Doctor of Science degree by the State University of New York (SUNY) in 2006. Dr. Peli’s principal research interests are image processing in relation to visual function, clinical psychophysics in low vision rehabilitation, image understanding, and the evaluation of display-vision interaction. He also maintains an interest in oculomotor control and binocular vision. Dr. Peli is a consultant to many companies in the ophthalmic instrumentation area and to manufacturers of head-mounted displays (HMDs). He has served as a consultant to many national committees, including those of the National Institutes of Health, the NASA Aviation Operations Systems (AOS) advisory committee, the US Air Force, the Department of Veterans Affairs, the US Navy Postdoctoral Fellowships Program, the US Army Research Labs, and the US Department of Transportation's Federal Motor Carrier Safety Administration. Dr. Peli has published more than 200 peer-reviewed scientific papers and has been awarded nine US patents. He edited a book entitled Visual Models for Target Detection, with special emphasis on military applications, and co-authored a book entitled Driving with Confidence: A Practical Guide to Driving with Low Vision.

Abstract

This talk proposes a low-cost balloon observation system for sustained (week-long), broadly distributed, in situ observation of hurricane development. The high-quality, high-density (in both space and time) measurements that such a system would make available should be instrumental in significantly improving our ability to forecast these extreme and dangerous atmospheric events. Scientific challenges in this overarching problem, which is of acute societal relevance, include:

Bio

Thomas R. Bewley (BS/MS, Caltech, 1989; diploma, von Karman Institute for Fluid Dynamics, 1990; PhD, Stanford, 1998) directs the UCSD Flow Control and Coordinated Robotics Labs, which collaborate closely on interdisciplinary projects. The Flow Control Lab investigates questions ranging from the theoretical to the applied, including the development of advanced analysis tools and numerical methods to better understand, optimize, estimate, forecast, and control fluid systems. The Coordinated Robotics Lab investigates the mobility and coordination of small multi-modal robotic vehicles, leveraging dynamic models and feedback control, with prototypes built using cellphone-grade electronics, custom PCBs, and 3D printing; the team has also worked with a number of commercial partners to design and bring successful consumer- and education-focused robotics products to market.

Abstract

Image-guided interventional systems rely on accurate device tracking technologies, such as optical or electromagnetic (EM) systems, to navigate tools using high-resolution diagnostic imaging modalities such as CT, MRI, and PET, and to facilitate the targeting of specific tissue within the body. However, for MR-guided procedures such as Magnetic Resonance Navigation (MRN), which exploits the high magnetic field of an MRI scanner to steer magnetic nanoparticles embedded in drug-eluting beads (DEB), traditional tracking methods are not suitable for visualizing catheters inside the patient’s vascular network. This talk will focus on the development of optical shape sensing devices that overcome the limitations of these earlier approaches and can be integrated into sub-millimeter tools. We present two MR-compatible solutions, one based on distributed fiber Bragg grating (FBG) sensors and one using ultraviolet curing for optical frequency domain reflectometry (OFDR), which measure the strain applied to a fiber triplet inserted in a tool and reconstruct its 3D shape during navigation. Recent phantom and ex vivo experiments compare their accuracy with that of EM tracking and demonstrate their insensitivity to external magnetic fields, illustrating the potential of these approaches for image guidance.

Bio

Samuel Kadoury is an associate professor in the Computer and Software Engineering Department at Polytechnique Montreal, a member of the Biomedical Engineering Institute at the University of Montreal, and a researcher at the CHUM Research Center. He currently holds the Canada Research Chair in Medical Imaging and Assisted Interventions at Polytechnique Montreal. He obtained his master's degree in Electrical Engineering from McGill University in 2005. After a year at Siemens Corporate Research in Princeton, NJ, he returned to Montreal to complete his Ph.D. in biomedical engineering, focusing on orthopaedic imaging. He completed a postdoctoral fellowship at Ecole Centrale de Paris and worked as a clinical research scientist for Philips Research North America at the National Institutes of Health in Bethesda, MD, from 2010 to 2012, developing image-guided systems for liver and prostate cancer. Prof. Kadoury has published over 100 peer-reviewed papers in leading journals and conferences in fields such as biomedical imaging, computer vision, radiology, and neuroimaging. He holds 5 US patents in the field of image-guided interventions, has participated in the technology transfer of multiple research projects to commercial products, and received the NIH merit award for his work on prostate cancer, as well as the Cum Laude Award from the RSNA for his work on artificial intelligence for liver cancer detection.

Abstract

Coming Soon

Bio

Coming Soon

Abstract

Rhythms guide our lives. Almost every biological process reflects a roughly 24-hour periodicity known as a circadian rhythm. Living against these body clocks can have severe consequences for physical and mental well-being, with increased risk for cardiovascular disease, cancer, obesity, and mental illness. However, circadian disruptions are becoming increasingly widespread in our modern world. As such, there is an urgent need for novel technological solutions to address these issues. In this talk, I will introduce the notion of “Circadian Computing”: technologies that support our innate biological rhythms. Specifically, I will describe a number of my recent projects in this area. First, I will present novel sensing and data-driven methods that can be used to assess sleep and related circadian disruptions. Next, I will explain how we can model and predict alertness, a key circadian process for cognitive performance. Third, I will describe a smartphone-based tool for maintaining circadian stability in patients with bipolar disorder. To conclude, I will discuss a vision for how Circadian Computing can radically transform healthcare, including by augmenting performance, enabling preemptive care for mental health patients, and complementing current precision medicine initiatives.

Bio

Saeed Abdullah is a Ph.D. candidate in Information Science at Cornell University, advised by Tanzeem Choudhury. Abdullah develops novel data-driven technologies to improve health and well-being. His research is inherently interdisciplinary, and he has collaborated with psychologists, psychiatrists, and behavioral scientists. His work has introduced assessment and intervention tools across a number of health-related domains, including sleep, cognitive performance, bipolar disorder, and schizophrenia. Saeed’s research has been recognized through several accolades, including winning the $100,000 Heritage Open mHealth Challenge, a best paper award, and an Agile Research Project award from the Robert Wood Johnson Foundation.

Abstract

Coming Soon

Bio

Coming Soon

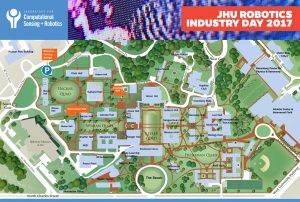

The Laboratory for Computational Sensing and Robotics will highlight its elite robotics students and showcase cutting-edge research projects in areas that include Medical Robotics, Extreme Environments Robotics, Human-Machine Systems for Manufacturing, BioRobotics and more. JHU Robotics Industry Day will take place from 8 a.m. to 3 p.m. in Hackerman Hall on the Homewood Campus at Johns Hopkins University.

Robotics Industry Day will provide top companies and organizations in the private and public sectors with access to the LCSR’s forward-thinking, solution-driven students. The event will also serve as an informal opportunity to explore university-industry partnerships.

You will experience dynamic presentations and discussions, observe live demonstrations, and participate in speed networking sessions that afford you the opportunity to meet Johns Hopkins' most talented robotics students before they graduate.

Please contact Rose Chase if you have any questions.

Schedule of Events

(times are subject to change)

LEVERING GREAT HALL

8:00 Registration and Continental Breakfast

8:30 Welcome: Larry Nagahara, Associate Dean for Research, JHU

8:35 Introduction to LCSR: Director Russell H. Taylor

8:55 Research and Commercialization Highlights

9:00 Louis Whitcomb, LCSR

9:10 Noah Cowan & Erin Sutton, LCSR

9:20 Marin Kobilarov, LCSR

9:30 Philipp Stolka, Clear Guide Medical

9:40 Mehran Armand, APL and LCSR

9:50 Stephen L. Hoffman, Sanaria, Inc.

10:00 COFFEE BREAK

10:10 Bernhard Fuerst, LCSR

10:20 Bruce Lichorowic, Galen Robotics

10:30 David Narrow, Sonavex, Inc.

10:40 Kelleher Guerin & Benjamin Gibbs, READY Robotics

10:50 Promit Roy, Max and Haley LLC

11:00 John Krakauer, Malone Center for Engineering in Healthcare Update, JHU

11:10 New Faculty Talks

11:10 – Muyinatu Bell

11:30 – Jeremy D. Brown

HACKERMAN HALL B17 LOBBY

12:00-1:15 LUNCH

HACKERMAN HALL ROBOTORIUM

12:00-1:15 Poster + Demo Sessions

HACKERMAN HALL B17

1:15-3:00 Student and Industry Speed Networking