Calendar

https://wse.zoom.us/j/348338196

Abstract:

In the absence of computer assistance, orthopaedic surgeons frequently rely on a challenging interpretation of fluoroscopy for intraoperative guidance. Existing computer-assisted navigation systems forgo this mental process and obtain accurate information about visually obstructed objects through the use of 3D imaging and additional intraoperative sensing hardware. This information is attained at the expense of increased invasiveness to patients and surgical workflows. Patients are exposed to large amounts of ionizing radiation during 3D imaging and undergo additional, and larger, incisions in order to accommodate navigational hardware. Non-standard equipment must be present in the operating room and time-consuming data collection must be conducted intraoperatively. Using periacetabular osteotomy (PAO) as the motivating clinical application, we introduce methods for computer-assisted fluoroscopic navigation of orthopaedic surgery, while remaining minimally invasive to both patients and surgical workflows.

Partial computed tomography (CT) of the pelvis is obtained preoperatively, and surface models of the entire pelvis are reconstructed using a combination of thin plate splines and a statistical model of pelvis anatomy. Intraoperative navigation is implemented through a 2D/3D registration pipeline, between 2D fluoroscopy and the 3D patient models. This pipeline recovers relative motion of the fluoroscopic imager using patient anatomy as a fiducial, without any introduction of external objects. PAO bone fragment poses are computed with respect to an anatomical coordinate frame and are used to intraoperatively assess acetabular coverage of the femoral head. Convolutional neural networks perform semantic segmentation and detect anatomical landmarks in fluoroscopy, allowing for automation of the registration pipeline. Real-time tracking of PAO fragments is enabled through the intraoperative injection of BBs into the pelvis; fragment poses are automatically estimated from a single view in less than one second. A combination of simulated and cadaveric surgeries was used to design and evaluate the proposed methods.

Bio:

Robert Grupp is a postdoctoral fellow at LCSR primarily working with Mehran Armand in the Biomechanical and Image-Guided Surgical Systems Lab. He recently completed his PhD in the Department of Computer Science at Johns Hopkins University, advised by Russell Taylor. His current research focuses on medical image registration and aims to enable computer-assisted navigation during minimally invasive orthopaedic surgery. Some of this work was highlighted as a feature article in the February 2020 issue of IEEE Transactions on Biomedical Engineering. Prior to starting his PhD studies, Robert worked on various Synthetic Aperture Radar exploitation algorithms as part of the Automatic Target Recognition group at Northrop Grumman Electronic Systems. He received a BS in Computer Science and Mathematics from the University of Maryland, College Park.

The goal of today’s talk is to understand the basic physiology of swallowing, using fluoroscopy as a guide. Fluoroscopy is considered an instrumental diagnostic tool for assessing swallowing safety and planning surgical intervention in the aerodigestive tract. Currently, fluoroscopy is interpreted through a frame-by-frame analysis of the study, and some high-quality centers use formal protocols to quantify physiologic deviations in the swallow.

Bio: Dr. Shumon Dhar, Assistant Professor in the Division of Laryngology, Dept. of Otolaryngology-Head & Neck Surgery, has expertise in the comprehensive management of voice, upper airway and swallowing disorders. Dr. Dhar is passionate about treating a variety of patients, including professional voice users, patients with upper airway stenosis, and those suffering from early cancer of the vocal cords. Dr. Dhar has unique training in bronchoesophagology, which positions him to treat patients with profound swallowing, reflux and motility problems from a holistic perspective. He uses the latest diagnostic modalities within a multidisciplinary care model. He also offers minimally invasive endoscopic options for the treatment of GERD and Barrett’s esophagus. Advanced interventions performed by Dr. Dhar include endoscopic and open treatment of cricopharyngeus muscle dysfunction and Zenker’s diverticulum, complete pharyngoesophageal stenosis, vocal fold paralysis, and severe dysphagia in head and neck cancer survivors and in patients with neuromuscular disease-related swallowing dysfunction.

Abstract:

In the first half of the talk, I will present image-guided (MRI) robotic interventions. Robotic assistance for the precise placement of needle-like devices could improve the outcome of diagnostic or therapeutic interventions. Such interventions are often performed under image guidance; an image-guided robotic system can not only improve tool placement accuracy but also eliminate the need for clinicians to mentally register the surgical scene. This talk will cover recent advances and challenges in developing MRI-guided robotic systems for percutaneous interventions. I will present the development journey of an integrated robotic system for MRI-guided ablation of brain tumors. I will also briefly present MRI-guided robotic systems for prostate biopsy and shoulder arthrography, with a particular focus on the clinical translation of these systems.

The second half of my talk will be about robot-assisted vitreoretinal surgery. Vitreoretinal surgeries are among the most challenging procedures, demanding skills at the limit of human capabilities and requiring precise manipulation of multiple surgical instruments in a constrained environment through small openings in the white part of the eye, the sclera, with limited perception of tool-tissue interaction. To alleviate some of these challenges, robotic assistance has been explored to provide steady and precise tool manipulation. However, in a cooperatively controlled robotic system, the stiff robotic structure removes the tactile perception between the surgeon’s hand holding the tool and the sclera, which could result in injury to the sclera. In this part of the talk, I will present recent advances in robot control strategies that protect the sclera from injury due to excessive forces, as well as the evaluation of the Steady Hand Eye Robot in in vivo studies.

Bio:

Nirav A. Patel received his B.E. in Computer Engineering from North Gujarat University in 2005, and his M.Tech in Computer Science and Engineering from Nirma University in 2007. After completing his M.Tech, he worked on industrial robots at ABB’s corporate research center in Bangalore until 2009. From 2009 to 2012, he worked as a faculty member, teaching at the undergraduate and graduate levels. In 2012, he joined the Robotics Engineering Ph.D. program at Worcester Polytechnic Institute and received his doctorate in 2017. Since 2017, he has been working as a postdoctoral fellow with the Laboratory for Computational Sensing and Robotics (LCSR) at the Johns Hopkins University. His research interests include the development of image-guided robotic systems for percutaneous intervention, minimally invasive robotic surgery, and the development of sensors and control strategies for safety in robot-assisted minimally invasive surgery. He is particularly interested in the clinical translation of these technologies.

If you aren’t able to join us live or want to review the presentation, here is the link. It should be up at least through the end of the Spring semester. OneDrive Link

Abstract:

Many airplanes can, or nearly can, glide stably without control. So, it seems natural that the first successful powered flight followed from mastery of gliding. Many bicycles can, or nearly can, balance themselves when in motion. Bicycle design seems to have evolved to gain this feature. Also, we can make toys and ‘robots’ that, like a stable glider or coasting bicycle, stably walk without motors or control in a remarkably human-like way. Again, it seems to make sense to use ‘passive-dynamics’ as a core for developing the control of walking robots and to gain understanding of the control of walking people. That’s what I used to think. But, so far, this passive approach has not led to robust walking robots. What about human evolution? We didn’t evolve dynamic bodies and then learn to control them. Rather, people had elaborate control systems way back when we were fish and even worms. However: if control is paramount, why is it that uncontrolled passive-dynamic walkers walk so much like humans? It seems that energy-optimal, yet robust, control, perhaps a proxy for evolutionary development, arrives at solutions that have some features in common with passive-dynamics. Instead of thinking of good powered walking as passive walking with a small amount of control added, I now think of good powered walking, human or robotic, as highly controlled, while optimized mostly for avoiding falls and, secondarily, for minimal actuator use. When well done, much of the motor effort, always at the ready, is usually titrated out. Thus, it looks, deceptively, “passive”.

Speaker:

Andy Ruina, Mechanical Engineering, Cornell University

My graduate education was mostly in solid mechanics. That morphed into biomechanics, dynamics and robotics. Recently, I have been primarily interested in the mechanics of underactuated motion and locomotion.

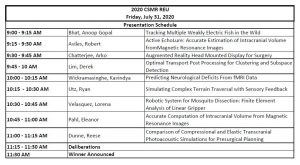

Please join us for the REU Closing Ceremony Presentations this Friday, July 31st, 9am-noon via Zoom https://wse.zoom.us/j/96982283136. Nine students from various institutions across the country have been working remotely with faculty mentors in the REU program this summer. The presentation schedule follows:

Link for Live Seminar

Link for Recorded seminars – 2020/2021 school year

Abstract:

This talk is an overview of our ongoing research in cataract surgery within the Language of Surgery project. Videos of the surgical field are a rich and easily accessible source of data on the extent and nature of care provided to patients in the operating room. This is a tremendous opportunity to demystify care in the surgical field, which is otherwise a black box in many ways. Methods to analyze videos of the surgical field have multiple applications, one of which is a co-pilot for surgeons that supports their learning in the operating room throughout their career. Using cataract surgery as the prototype, this talk covers research to enable a learning platform that provides objective skill assessments and personalized feedback for surgeons.

Bio:

Swaroop Vedula is a medical doctor with surgical training and an epidemiologist, with post-doctoral training in computer science. He is a research faculty member in the Malone Center for Engineering in Healthcare. Dr. Vedula’s research spans technology for objective skill assessment and personalized feedback for surgeons, surgical data science to analyze care in the operating room and its association with patient outcomes, robotic assistance for skill acquisition and surgical coaching, and explainable prediction models for clinical decision support.

Zoom Link for Seminar

Recorded seminars for the 2020/2021 school year

Abstract:

Human interaction with the physical world is increasingly mediated by automation: planes assist pilots, cars assist drivers, and robots assist surgeons. Such semi-autonomous machines will eventually pervade our world, doing dull and dirty work, assisting the elderly and disabled, and responding to disasters. Recent results (e.g. from the DARPA Robotics Challenge) demonstrate that, once a robot reaches a task area and grasps the necessary tool, handle, or wheel, it is able to plan and execute whole-body motions to accomplish complex goals. However, robots frequently lose their balance and fall en route to tasks, necessitating human supervision and intervention. Integrating legged machines into daily life will require safe and stable telelocomotion, that is, robot ambulation guided by humans. This talk presents our efforts to tackle the telelocomotion problem from the bottom up and from the top down: analyzing contact-rich robot dynamics to derive design principles for intrinsically stable terradynamics, and leveraging the theory of human sensorimotor learning and control to design provably safe interfaces for nonlinear control systems, including legged robots.

Bio:

Sam Burden earned his BS with Honors in Electrical Engineering from the University of Washington in Seattle in 2008. He earned his PhD in Electrical Engineering and Computer Sciences from the University of California, Berkeley in 2014, where he subsequently spent one year as a Postdoctoral Scholar. In 2015, he returned to UW EE (now ECE) as an Assistant Professor; in 2016, he received a Young Investigator Program award from the Army Research Office (ARO-YIP). Sam is broadly interested in discovering and formalizing principles of sensorimotor control. Specifically, he focuses on applications in dynamic and dexterous robotics, neuromechanical motor control, and human-cyber-physical systems. In his spare time, he teaches robotics to students of all ages in classrooms and at campus events.

Link for Live Seminar

Link for Recorded seminars – 2020/2021 school year

Peter A. Sheppard – Sr. Intellectual Property Manager, Johns Hopkins Technology Ventures

“Intellectual Property Primer For Conflict of Interest Training.”

Laura M. Evans – Senior Policy Associate, Director, Homewood IRB

“Conflicts of Interest: Identification, Review, and Management.”