Autonomous systems offer the promise of providing greater safety and access. However\, this positive impact will only be achieved if the underlying algorithms that control such systems can be certified to behave robustly. This talk will describe a pair of techniques grounded in infinite-dimensional optimization to address this challenge.

\nThe first technique\, called Reachability-based Trajectory Design\, constructs a parameterized representation of the forward reachable set\, which it then uses in concert with predictions to enable real-time\, certified collision checking. This approach\, which is guaranteed to generate not-at-fault behavior\, is demonstrated on a variety of real-world platforms\, including ground vehicles\, manipulators\, and walking robots. The second technique is a modeling method that represents a nonlinear system as a linear system in the infinite-dimensional space of real-valued functions. With this representation\, one can apply well-understood linear model predictive control techniques to robustly control nonlinear systems. The utility of this approach is verified on a soft robot control task.

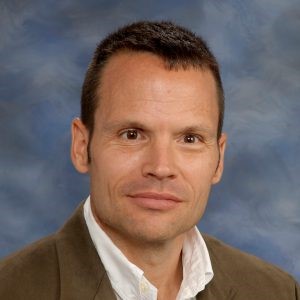

\nRam Vasudevan is an assistant professor in Mechanical Engineering and the Robotics Institute at the University of Michigan. He received a BS in Electrical Engineering and Computer Sciences\, an MS in Electrical Engineering\, and a PhD in Electrical Engineering\, all from the University of California\, Berkeley. He is a recipient of the NSF CAREER Award and the ONR Young Investigator Award. His work has received best paper awards at the IEEE Conference on Robotics and Automation\, the ASME Dynamic Systems and Control Conference\, and the IEEE OCEANS Conference\, and has been a finalist for best paper at Robotics: Science and Systems.

\nMark Savage is the Johns Hopkins Life Design Educator for Engineering Masters Students\, advising on all aspects of career development and the internship/job search\, with the Handshake Career Management System as a necessary tool. Look for weekly newsletters\, which will soon be emailed to Homewood WSE Masters Students on Sunday nights.

\n

END:VEVENT

BEGIN:VEVENT

UID:ai1ec-12289@lcsr.jhu.edu

DTSTAMP:20240427T081404Z

CATEGORIES:

CONTACT:Ashley Moriarty\; amoriar2@jhu.edu\; https://wse.zoom.us/s/94623801186

DESCRIPTION:Link for Live Seminar\nLink for Recorded seminars – 2021/2022 s

chool year\n \n \nAbstract:\nRobots currently have the capacity to help pe

ople in several fields\, including health care\, assisted living\, and man

ufacturing\, where the robots must share physical space and actively inter

act with people in teams. The performance of these teams depends upon how

fluently all team members can jointly perform their tasks. To be successfu

l within a group\, a robot requires the ability to perceive other members’

actions\, model interaction dynamics\, predict future actions\, and adapt

their plans accordingly in real-time. In the Collaborative Robotics Lab (

CRL)\, we develop novel perception\, prediction\, and planning algorithms

for robots to fluently coordinate and collaborate with people in complex h

uman environments. In this talk\, I will highlight various challenges of d

eploying robots in real-world settings and present our recent work to tack

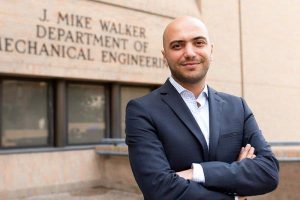

le several of these challenges.\n \nBiography:\nTariq Iqbal is an Assistan

t Professor of Systems Engineering and Computer Science (by courtesy) at t

he University of Virginia (UVA). Prior to joining UVA\, he was a Postdocto

ral Associate in the Computer Science and Artificial Intelligence Lab (CSA

IL) at MIT. He received his Ph.D. in CS from the University of California

San Diego (UCSD). Iqbal leads the Collaborative Robotics Lab (CRL)\, which

focuses on building robotic systems that work alongside people in complex

human environments\, such as factories\, hospitals\, and educational sett

ings. His research group develops artificial intelligence\, computer visio

n\, and machine learning algorithms to enable robots to solve problems in

these domains.

DTSTART;TZID=America/New_York:20210915T120000

DTEND;TZID=America/New_York:20210915T130000

LOCATION:https://wse.zoom.us/s/94623801186

SEQUENCE:0

SUMMARY:LCSR Seminar: Tariq Iqbal “Toward Fluent Collaboration in Human-Robot Teams”

URL:https://lcsr.jhu.edu/events/tariq-iqbal/

X-COST-TYPE:free

X-ALT-DESC;FMTTYPE=text/html:\\n\\n\\nRobots currently have the capacity to help people in several fields\, including health care\, assisted living\, and manufacturing\, where the robots must share physical space and actively interact with people in teams. The performance of these teams depends upon how fluently all team members can jointly perform their tasks. To be successful within a group\, a robot requires the ability to perceive other members’ actions\, model interaction dynamics\, predict future actions\, and adapt its plans accordingly in real time. In the Collaborative Robotics Lab (CRL)\, we develop novel perception\, prediction\, and planning algorithms for robots to fluently coordinate and collaborate with people in complex human environments. In this talk\, I will highlight various challenges of deploying robots in real-world settings and present our recent work to tackle several of these challenges.

\nTariq Iqbal is an Assistant Professor of Systems Engineering and Computer Science (by courtesy) at the University of Virginia (UVA). Prior to joining UVA\, he was a Postdoctoral Associate in the Computer Science and Artificial Intelligence Lab (CSAIL) at MIT. He received his Ph.D. in CS from the University of California\, San Diego (UCSD). Iqbal leads the Collaborative Robotics Lab (CRL)\, which focuses on building robotic systems that work alongside people in complex human environments\, such as factories\, hospitals\, and educational settings. His research group develops artificial intelligence\, computer vision\, and machine learning algorithms to enable robots to solve problems in these domains.

\n

END:VEVENT

BEGIN:VEVENT

UID:ai1ec-12292@lcsr.jhu.edu

DTSTAMP:20240427T081404Z

CATEGORIES:

CONTACT:Ashley Moriarty\; amoriar2@jhu.edu\; https://wse.zoom.us/s/94623801186

DESCRIPTION:Link for Live Seminar\nLink for Recorded seminars – 2021/2022 school year\n \nAbstract: We describe an approach for incorporating prior knowledge into machine learning algorithms. We target applications in physics and signal processing in which we know that certain operations must be embedded into the algorithm. Any operation that allows computation of a gradient or sub-gradient with respect to its inputs is suited for our framework. We derive a maximal error bound for deep nets which demonstrates that the inclusion of prior knowledge reduces this bound. Furthermore\, we show experimentally that known operators reduce the number of free parameters. We apply this approach to various tasks\, ranging from computed tomography image reconstruction and vessel segmentation to the derivation of previously unknown imaging algorithms. As such\, the concept is widely applicable for many researchers in physics\, imaging\, and signal processing. We expect that our analysis will support further investigation of known operators in other fields of physics\, imaging\, and signal processing.\nShort Bio: Prof. Dr. Andreas Maier was born on 26 November 1980 in Erlangen. He studied Computer Science\, graduated in 2005\, and received his PhD in 2009. From 2005 to 2009 he worked at the Pattern Recognition Lab at the Computer Science Department of the University of Erlangen-Nuremberg. His major research subject was medical signal processing in speech data. In this period\, he developed the first online speech intelligibility assessment tool – PEAKS – which has been used to analyze over 4\,000 patients and control subjects so far.\nFrom 2009 to 2010\, he worked on flat-panel C-arm CT as a postdoctoral fellow at the Radiological Sciences Laboratory in the Department of Radiology at Stanford University. From 2011 to 2012 he worked at Siemens Healthcare as an innovation project manager and was responsible for reconstruction topics in the Angiography and X-ray business unit.\nIn 2012\, he returned to the University of Erlangen-Nuremberg as head of the Medical Reconstruction Group at the Pattern Recognition Lab. In 2015 he became professor and head of the Pattern Recognition Lab. Since 2016\, he has been a member of the steering committee of the European Time Machine Consortium. In 2018\, he was awarded an ERC Synergy Grant “4D nanoscope”. His current research interests focus on medical imaging\, image and audio processing\, digital humanities\, interpretable machine learning\, and the use of known operators.\n \n \n

DTSTART;TZID=America/New_York:20210922T120000

DTEND;TZID=America/New_York:20210922T130000

LOCATION:https://wse.zoom.us/s/94623801186

SEQUENCE:0

SUMMARY:LCSR Seminar: Andreas Maier “Known Operator Learning – An Approach to Unite Machine Learning\, Signal Processing and Physics”

URL:https://lcsr.jhu.edu/events/andreas-maier/

X-COST-TYPE:free

X-ALT-DESC;FMTTYPE=text/html:\\n\\n\\n

The unprecedented prediction accuracy of modern machine learning invites its application in a wide range of real-world settings\, including autonomous robots\, fine-grained computer vision\, scientific experimental design\, and many others. To create trustworthy AI systems\, we must safeguard machine learning methods from catastrophic failures and provide calibrated uncertainty estimates. For example\, we must account for the uncertainty and guarantee the performance of safety-critical systems\, like autonomous driving and health care\, before deploying them in the real world. A key challenge in such real-world applications is that the test cases are not well represented by the pre-collected training data. To properly leverage learning in such domains\, especially safety-critical ones\, we must go beyond the conventional learning paradigm of maximizing average prediction accuracy with generalization guarantees that rely on strong distributional relationships between training and test examples.

\nIn this talk\, I will describe a distributionally robust learning framework that offers accurate uncertainty estimation and rigorous guarantees under data distribution shift. This framework yields appropriately conservative yet still accurate predictions to guide real-world decision-making and is easily integrated with modern deep learning. I will showcase the practicality of this framework in applications to agile robotic control and computer vision. I will also survey other real-world applications that could benefit from this framework in future work.

\nAnqi (Angie) Liu is an Assistant Professor in the Department of Computer Science at the Whiting School of Engineering of the Johns Hopkins University. She is broadly interested in developing principled machine learning algorithms for building more reliable\, trustworthy\, and human-compatible AI systems in the real world. Her research focuses on enabling machine learning algorithms to be robust to changing data and environments\, to provide accurate and honest uncertainty estimates\, and to consider human preferences and values in the interaction. She is particularly interested in high-stakes applications that concern the safety and societal impact of AI. Previously\, she completed her postdoc in the Department of Computing and Mathematical Sciences of the California Institute of Technology. She obtained her Ph.D. from the Department of Computer Science of the University of Illinois at Chicago. She was selected as one of the 2020 EECS Rising Stars. Her publications appear in top machine learning conferences such as NeurIPS\, ICML\, ICLR\, AAAI\, and AISTATS.

\n

END:VEVENT

BEGIN:VEVENT

UID:ai1ec-12300@lcsr.jhu.edu

DTSTAMP:20240427T081404Z

CATEGORIES:

CONTACT:Ashley Moriarty\; amoriar2@jhu.edu\; https://wse.zoom.us/s/94623801186

DESCRIPTION:Link for Live Seminar\nLink for Recorded seminars – 2021/2022 school year\n \nAbstract:\nDeployment of autonomous vehicles (AVs) on public roads promises increases in efficiency and safety\, and requires intelligent situation awareness. We wish to have autonomous vehicles that can learn to behave in safe and predictable ways\, and are capable of evaluating risk\, understanding the intent of human drivers\, and adapting to different road situations. This talk describes an approach to learning and integrating risk and behavior analysis in the control of autonomous vehicles. I will introduce Social Value Orientation (SVO)\, which captures how an agent’s social preferences and cooperation affect interactions with other agents by quantifying the degree of selfishness or altruism. SVO can be integrated into control and decision making for AVs. I will provide recent examples of self-driving vehicles capable of adaptation.\n \nBiography:\nDaniela Rus is the Andrew (1956) and Erna Viterbi Professor of Electrical Engineering and Computer Science\, Director of the Computer Science and Artificial Intelligence Laboratory (CSAIL) at MIT\, and Deputy Dean of Research in the Schwarzman College of Computing at MIT. Rus’ research interests are in robotics and artificial intelligence. The key focus of her research is to develop the science and engineering of autonomy. Rus is a Class of 2002 MacArthur Fellow\, a fellow of ACM\, AAAI\, and IEEE\, and a member of the National Academy of Engineering and of the American Academy of Arts and Sciences. She is a senior visiting fellow at MITRE Corporation. She is the recipient of the Engelberger Award for robotics. She earned her PhD in Computer Science from Cornell University.

DTSTART;TZID=America/New_York:20211006T120000

DTEND;TZID=America/New_York:20211006T130000

LOCATION:https://wse.zoom.us/s/94623801186

SEQUENCE:0

SUMMARY:LCSR Seminar: Daniela Rus “Learning Risk and Social Behavior in Mixed Human-Autonomous Vehicles Systems”

URL:https://lcsr.jhu.edu/events/daniela-rus/

X-COST-TYPE:free

X-ALT-DESC;FMTTYPE=text/html:\\n\\n\\n

Deployment of autonomous vehicles (AVs) on public roads promises increases in efficiency and safety\, and requires intelligent situation awareness. We wish to have autonomous vehicles that can learn to behave in safe and predictable ways\, and are capable of evaluating risk\, understanding the intent of human drivers\, and adapting to different road situations. This talk describes an approach to learning and integrating risk and behavior analysis in the control of autonomous vehicles. I will introduce Social Value Orientation (SVO)\, which captures how an agent’s social preferences and cooperation affect interactions with other agents by quantifying the degree of selfishness or altruism. SVO can be integrated into control and decision making for AVs. I will provide recent examples of self-driving vehicles capable of adaptation.

\nDaniela Rus is the Andrew (1956) and Erna Viterbi Professor of Electrical Engineering and Computer Science\, Director of the Computer Science and Artificial Intelligence Laboratory (CSAIL) at MIT\, and Deputy Dean of Research in the Schwarzman College of Computing at MIT. Rus’ research interests are in robotics and artificial intelligence. The key focus of her research is to develop the science and engineering of autonomy. Rus is a Class of 2002 MacArthur Fellow\, a fellow of ACM\, AAAI\, and IEEE\, and a member of the National Academy of Engineering and of the American Academy of Arts and Sciences. She is a senior visiting fellow at MITRE Corporation. She is the recipient of the Engelberger Award for robotics. She earned her PhD in Computer Science from Cornell University.

\n

END:VEVENT

BEGIN:VEVENT

UID:ai1ec-12307@lcsr.jhu.edu

DTSTAMP:20240427T081404Z

CATEGORIES:

CONTACT:Ashley Moriarty\; amoriar2@jhu.edu\; https://wse.zoom.us/s/94623801186

DESCRIPTION:Link for Live Seminar\nLink for Recorded seminars – 2021/2022 school year\n \n \nAbstract:\nDigital cameras have dramatically changed interventional and surgical procedures. Modern operating rooms use a range of cameras to minimize invasiveness or to provide vision beyond human capabilities in magnification\, spectra\, or sensitivity. Such surgical cameras provide the richest and most informative signal from the surgical site\, containing information about activity and events as well as physiology and tissue function. This talk will highlight some of the opportunities for computer vision in surgical applications and the challenges in translation to clinically usable systems.\n \nBio: \nDan Stoyanov is a Professor of Robot Vision in the Department of Computer Science at University College London\, Director of the Wellcome/EPSRC Centre for Interventional and Surgical Sciences (WEISS)\, a Royal Academy of Engineering Chair in Emerging Technologies\, and Chief Scientist at Digital Surgery Ltd. Dan first studied electronics and computer systems engineering at King’s College London before completing a PhD in Computer Science at Imperial College London\, where he specialized in medical image computing.\n

DTSTART;TZID=America/New_York:20211013T120000

DTEND;TZID=America/New_York:20211013T130000

LOCATION:https://wse.zoom.us/s/94623801186

SEQUENCE:0

SUMMARY:LCSR Seminar: Danail Stoyanov “Towards Understanding Surgical Scenes Using Computer Vision”

URL:https://lcsr.jhu.edu/events/danail-stoyanov/

X-COST-TYPE:free

X-ALT-DESC;FMTTYPE=text/html:\\n\\n\\nDigital cameras have dramatically changed interventional and surgical procedures. Modern operating rooms use a range of cameras to minimize invasiveness or to provide vision beyond human capabilities in magnification\, spectra\, or sensitivity. Such surgical cameras provide the richest and most informative signal from the surgical site\, containing information about activity and events as well as physiology and tissue function. This talk will highlight some of the opportunities for computer vision in surgical applications and the challenges in translation to clinically usable systems.

\nDan Stoyanov is a Professor of Robot Vision in the Department of Computer Science at University College London\, Director of the Wellcome/EPSRC Centre for Interventional and Surgical Sciences (WEISS)\, a Royal Academy of Engineering Chair in Emerging Technologies\, and Chief Scientist at Digital Surgery Ltd. Dan first studied electronics and computer systems engineering at King’s College London before completing a PhD in Computer Science at Imperial College London\, where he specialized in medical image computing.

\n

END:VEVENT

BEGIN:VEVENT

UID:ai1ec-12310@lcsr.jhu.edu

DTSTAMP:20240427T081404Z

CATEGORIES:

CONTACT:Ashley Moriarty\; amoriar2@jhu.edu\; https://wse.zoom.us/s/94623801186

DESCRIPTION:Link for Live Seminar\nLink for Recorded seminars – 2021/2022 school year\n \n \nAbstract:\nI will discuss recent efforts at CinfonIA in enhancing interpretability in deep neural networks through the use of adversarial robustness and multimodal information.\n \nBiography:\nPablo Arbeláez received his PhD with honors in Applied Mathematics from the Université Paris Dauphine in 2005. He was a Senior Research Scientist with the Computer Vision Group at UC Berkeley from 2007 to 2014. He currently holds a faculty position in the Department of Biomedical Engineering at Universidad de los Andes in Colombia. Since 2020\, he has led the Center for Research and Formation in Artificial Intelligence (CinfonIA) at UniAndes. His research interests are in computer vision and machine learning\, in which he has worked on several problems\, including perceptual grouping\, object recognition\, and the analysis of biomedical images.

DTSTART;TZID=America/New_York:20211020T120000

DTEND;TZID=America/New_York:20211020T130000

LOCATION:https://wse.zoom.us/s/94623801186

SEQUENCE:0

SUMMARY:LCSR Seminar: Pablo Arbelaez “Towards Robust Artificial Intelligence”

URL:https://lcsr.jhu.edu/events/pablo-arbelaez/

X-COST-TYPE:free

X-ALT-DESC;FMTTYPE=text/html:\\n\\n\\nI will discuss recent efforts at CinfonIA in enhancing interpretability in deep neural networks through the use of adversarial robustness and multimodal information.

\nPablo Arbeláez received his PhD with honors in Applied Mathematics from the Université Paris Dauphine in 2005. He was a Senior Research Scientist with the Computer Vision Group at UC Berkeley from 2007 to 2014. He currently holds a faculty position in the Department of Biomedical Engineering at Universidad de los Andes in Colombia. Since 2020\, he has led the Center for Research and Formation in Artificial Intelligence (CinfonIA) at UniAndes. His research interests are in computer vision and machine learning\, in which he has worked on several problems\, including perceptual grouping\, object recognition\, and the analysis of biomedical images.

\n

END:VEVENT

BEGIN:VEVENT

UID:ai1ec-12334@lcsr.jhu.edu

DTSTAMP:20240427T081404Z

CATEGORIES:

CONTACT:Ashley Moriarty\; amoriar2@jhu.edu\; https://wse.zoom.us/s/94623801186

DESCRIPTION:Link for Live Seminar\nLink for Recorded seminars – 2021/2022 school year\n \nLCSR Faculty “Interviewing for Jobs in Academia and Industry”\n \nSpeakers: Louis Whitcomb\, Marin Kobilarov\, and the LCSR Faculty\nAbstract:\nThis LCSR professional development seminar will review the process of interviewing for jobs in academia (e.g.\, faculty\, post-doc\, and scientist positions) and industry (e.g.\, engineering\, scientist\, and management positions)\, and will provide tips and best practices for successful interviewing.\n

DTSTART;TZID=America/New_York:20211027T120000

DTEND;TZID=America/New_York:20211027T130000

LOCATION:https://wse.zoom.us/s/94623801186

SEQUENCE:0

SUMMARY:LCSR Seminar: LCSR Faculty “Interviewing for Jobs in Academia and Industry”

URL:https://lcsr.jhu.edu/events/lcsr-faculty/

X-COST-TYPE:free

X-ALT-DESC;FMTTYPE=text/html:\\n\\n\\n

There are more than 2 million industrial robots in use worldwide every day\, and yet these devices represent one of the most fragmented technologies in the world. With more than 100 brands of industrial robots\, each with its own proprietary\, difficult-to-learn software and programming languages\, we are not seeing the exponential growth we expected from robots. The computer industry faced a similar challenge in the early 1980s with the advent of the PC\, and computers did not see explosive growth until a few key platforms emerged that focused on making computers accessible to end users and running on a common software platform. At READY Robotics\, we believe the same is true for robots\, and that is why we are building Forge/OS\, our “Windows” for the robotics space\, which lets every robot speak the same language and provide the same award-winning user experience to end users. We will talk about how this technology came about\, how we think it can change the future\, and the journey from the initial research performed at Johns Hopkins University up to today.

\nKel Guerin has been working in the robotics space for more than 10 years\, focusing on the design and usability of a wide variety of robots\, including systems for space exploration\, deep mining\, surgery\, and industrial manufacturing. While obtaining his Ph.D. from Johns Hopkins University (defended 2016)\, Kel worked specifically on the challenge of making industrial robots more flexible and easy to use. The result was his award-winning Forge Operating System and easy-to-use programming interface for industrial robots. Kel spun out his technology into READY Robotics\, an industrial robotics start-up he co-founded in 2016. His work has been featured in the Wall Street Journal and Forbes\, and READY’s products have been called “the Swiss Army knife of robots” by Inc. magazine.

\n

END:VEVENT

BEGIN:VEVENT

UID:ai1ec-12322@lcsr.jhu.edu

DTSTAMP:20240427T081404Z

CATEGORIES:

CONTACT:Ashley Moriarty\; amoriar2@jhu.edu\; https://wse.zoom.us/s/94623801186

DESCRIPTION:Link for Live Seminar\nLink for Recorded seminars – 2021/2022 school year\n \n \nAbstract:\nTBA\n \nBiography:\nTBA

DTSTART;TZID=America/New_York:20211110T120000

DTEND;TZID=America/New_York:20211110T130000

LOCATION:https://wse.zoom.us/s/94623801186

SEQUENCE:0

SUMMARY:LCSR Seminar: Maya Cakmak

URL:https://lcsr.jhu.edu/events/maya-cakmak/

X-COST-TYPE:free

X-ALT-DESC;FMTTYPE=text/html:\\n\\n\\n

Robot-assisted surgery (RAS) has gained momentum over the last few decades\, with nearly 1\,200\,000 RAS procedures performed in 2019 alone using the da Vinci Surgical System\, the most widely used surgical robotics platform. Current state-of-the-art surgical robotic systems use only a single endoscope to view the surgical field. In this talk\, we present a novel design of an additional “pickup” camera that can be integrated into the da Vinci Surgical System. We then explore the benefits of our design for human-robot interaction (HRI) and autonomy in RAS. On the HRI side\, we show how this “pickup” camera improves depth perception and how its additional view can lead to better surgical training. On the autonomy side\, we show how automating the motion of this camera provides better visualization of the surgical scene. Finally\, we show how this automation work inspires the design of novel execution models for automating surgical subtasks\, leading to superhuman performance.

\nAlaa Eldin Abdelaal is a PhD candidate at the Robotics and Control Laboratory at the University of British Columbia and a visiting graduate scholar at the Computational Interaction and Robotics Lab at Johns Hopkins University. He holds a B.Sc. in Computer and Systems Engineering from Mansoura University in Egypt and an M.Sc. in Computing Science from Simon Fraser University in Canada. His research interests are at the intersection of autonomy and human-robot interaction for human skill augmentation and decision support\, with application to surgical robotics. He is co-advised by Dr. Tim Salcudean and Dr. Gregory Hager. His research has been recognized with the Best Bench-to-Bedside Paper Award at the International Conference on Information Processing in Computer-Assisted Interventions (IPCAI) 2019. He is the recipient of the Vanier Canada Graduate Scholarship\, the most prestigious scholarship for PhD students in Canada.

\n

END:VEVENT

BEGIN:VEVENT

UID:ai1ec-12339@lcsr.jhu.edu

DTSTAMP:20240427T081404Z

CATEGORIES:

CONTACT:Ashley Moriarty\; amoriar2@jhu.edu\; https://wse.zoom.us/s/94623801186

DESCRIPTION:Link for Live Seminar\nLink for Recorded seminars – 2021/2022 school year\n \n \nAbstract:\nIn this seminar\, a panel of three LCSR faculty members\, Dr. Peter Kazanzides\, Dr. Marin Kobilarov\, and Dr. Axel Krieger\, will discuss their experience in commercializing robotics research through licensing and start-ups. The panel will include a question-and-answer session with the audience.\n

DTSTART;TZID=America/New_York:20211201T120000

DTEND;TZID=America/New_York:20211201T130000

LOCATION:https://wse.zoom.us/s/94623801186

SEQUENCE:0

SUMMARY:LCSR Seminar: LCSR Faculty “Panel on commercialization of robotics research”

URL:https://lcsr.jhu.edu/events/tba-3/

X-COST-TYPE:free

X-ALT-DESC;FMTTYPE=text/html:\\n\\n\\nIn this seminar\, a panel of three LCSR faculty members\, Dr. Peter Kazanzides\, Dr. Marin Kobilarov\, and Dr. Axel Krieger\, will discuss their experience in commercializing robotics research through licensing and start-ups. The panel will include a question-and-answer session with the audience.

\n

An enduring goal of AI and robotics has been to build a robot capable of robustly performing a wide variety of tasks in a wide variety of environments\; not by sequentially being programmed (or taught) to perform one task in one environment at a time\, but rather by intelligently choosing appropriate actions for whatever task and environment it is facing. This goal remains a challenge. In this talk I’ll describe recent work in our lab aimed at the goal of general-purpose robot manipulation by integrating task-and-motion planning with various forms of model learning. In particular\, I’ll describe approaches to manipulating objects without prior shape models\, to acquiring composable sensorimotor skills\, and to exploiting past experience for more efficient planning.

\nTomas Lozano-Perez is a professor in EECS at MIT and a member of CSAIL. He was a recipient of the 2011 IEEE Robotics Pioneer Award and a co-recipient of the 2021 IEEE Robotics and Automation Technical Field Award. He is a Fellow of the AAAI\, ACM\, and IEEE.

\n

END:VEVENT

BEGIN:VEVENT

UID:ai1ec-12603@lcsr.jhu.edu

DTSTAMP:20240427T081404Z

CATEGORIES:

CONTACT:Ashley Moriarty\; amoriar2@jhu.edu\; https://wse.zoom.us/s/94623801186

DESCRIPTION:Link for Live Seminar\nLink for Recorded seminars – 2021/2022 school year\n \nAbstract:\nWhile many robots are currently deployable in factories\, warehouses\, and homes\, their autonomous deployment requires either that the deployment environment be highly controlled or that the deployment entail executing only a single preprogrammed task. These deployable robots do not learn to address changes or to improve performance. For uncontrolled environments and for novel tasks\, current robots must seek help from highly skilled robot operators for teleoperated (not autonomous) deployment.\n \nIn this talk\, I will present two approaches to removing these limitations by learning to enable autonomous deployment in the context of mobile robot navigation\, a common core capability for deployable robots: (1) Adaptive Planner Parameter Learning utilizes existing motion planners\, fine-tunes these systems using simple interactions with non-expert users before autonomous deployment\, adapts to different deployment environments\, and produces robust autonomous navigation\; (2) Learning from Hallucination enables agile navigation in highly constrained deployment environments by exploring in a completely safe training environment and creating synthetic obstacle configurations to learn from. Building on robust autonomous navigation\, I will discuss my vision toward a hardened\, reliable\, and resilient robot fleet that is also task-efficient and whose robots continually learn from each other and from humans.\n \nBiography:\nXuesu Xiao is an incoming Assistant Professor in the Department of Computer Science at George Mason University starting Fall 2022. Currently\, he is a roboticist on The Everyday Robot Project at X\, The Moonshot Factory\, and a research affiliate in the Department of Computer Science at The University of Texas at Austin. Dr. Xiao’s research focuses on field robotics\, motion planning\, and machine learning. He develops highly capable and intelligent mobile robots that are robustly deployable in the real world with minimal human supervision. Dr. Xiao received his Ph.D. in Computer Science from Texas A&M University in 2019\, a Master of Science in Mechanical Engineering from Carnegie Mellon University in 2015\, and a dual Bachelor of Engineering in Mechatronics Engineering from Tongji University and FH Aachen University of Applied Sciences in 2013.

DTSTART;TZID=America/New_York:20220202T120000

DTEND;TZID=America/New_York:20220202T130000

LOCATION:https://wse.zoom.us/s/94623801186

SEQUENCE:0

SUMMARY:LCSR Seminar: Xuesu Xiao “Deployable Robots that Learn”

URL:https://lcsr.jhu.edu/events/xuesu-xiao/

X-COST-TYPE:free

X-ALT-DESC;FMTTYPE=text/html:\\n\\n\\n

While many robots are currently deployable in factories\, warehouses\, and

homes\, their autonomous deployment requires either the deployment enviro

nments to be highly controlled\, or the deployment to only entail executin

g one single preprogrammed task. These deployable robots do not learn to a

ddress changes and to improve performance. For uncontrolled environments a

nd for novel tasks\, current robots must seek help from highly skilled rob

ot operators for teleoperated (not autonomous) deployment.

\nIn this talk\, I will present two approaches to removing these limitati

ons by learning to enable autonomous deployment in the context of mobile r

obot navigation\, a common core capability for deployable robots: (1) Adap

tive Planner Parameter Learning utilizes existing motion planners\, fine-t

unes these systems using simple interactions with non-expert users before

autonomous deployment\, adapts to different deployment environments\, and

produces robust autonomous navigation\; (2) Learning from Hallucination en

ables agile navigation in highly-constrained deployment environments by ex

ploring in a completely safe training environment and creating synthetic o

bstacle configurations to learn from. Building on robust autonomous naviga

tion\, I will discuss my vision toward a hardened\, reliable\, and resilie

nt robot fleet which is also task-efficient and continually learns from ea

ch other and from humans.

\nXuesu Xiao is an incoming Assistant Professor in the Department of C

omputer Science at George Mason University starting Fall 2022. Currently\,

he is a roboticist on The Everyday Robot Project at X\, The Moonshot Fact

ory\, and a research affiliate in the Department of Computer Science at Th

e University of Texas at Austin. Dr. Xiao’s research focuses on field robo

tics\, motion planning\, and machine learning. He develops highly capable

and intelligent mobile robots that are robustly deployable in the real wor

ld with minimal human supervision. Dr. Xiao received his Ph.D. in Computer

Science from Texas A&M University in 2019\, Master of Science in Mechanic

al Engineering from Carnegie Mellon University in 2015\, and dual Bachelor

of Engineering in Mechatronics Engineering from Tongji University and FH

Aachen University of Applied Sciences in 2013.

\n

END:VEVENT

BEGIN:VEVENT

UID:ai1ec-12615@lcsr.jhu.edu

DTSTAMP:20240427T081404Z

CATEGORIES:

CONTACT:Ashley Moriarty\; amoriar2@jhu.edu\; https://wse.zoom.us/s/94623801

186

DESCRIPTION:Link for Live Seminar\nLink for Recorded seminars – 2021/2022 s

chool year\n \nAbstract:\nThis presentation overviews a number of the proj

ects related to image-guided intervention that have taken place in my lab

at the Robarts Research Institute at Western University in recent years. P

rojects cover applications in Image-guided Neurosurgery\, Cardiac surgery\

, as well as the role of simulation phantoms and technologies such as motion m

agnification and mixed reality in image-guided interventions.\n \nBiograp

hy:\nDr. Terry Peters is a Scientist in the Imaging Research Laboratories

at the Robarts Research Institute\, London\, ON\, Canada\, and is Professo

r Emeritus in the Departments of Medical Imaging and Medical Biophysics\,

and the School of Biomedical Engineering\, at Western University. He obtai

ned his PhD in Electrical Engineering at the University of Canterbury in C

hristchurch\, NZ\, in the field of image reconstruction for CT in 1974\, and f

ollowing some time as a Medical Physicist at the Christchurch Hospital\,

joined the Montreal Neurological Institute at McGill University as a rese

arch scientist in 1978. In 1997 he joined the Imaging Research Labs at th

e Robarts Research Institute at Western University in London\, Canada\, wher

e he expanded his research focus to encompass image-guided procedures in m

ultiple organ systems. He has authored over 350 peer-reviewed papers\, bo

oks and book chapters\, and has mentored over 100 trainees. Dr. Peters is

a Fellow of several academic and professional societies\, including the IEE

E\, the MICCAI Society\, and the Royal Society of Canada.

DTSTART;TZID=America/New_York:20220209T120000

DTEND;TZID=America/New_York:20220209T130000

LOCATION:https://wse.zoom.us/s/94623801186

SEQUENCE:0

SUMMARY:LCSR Seminar: Terry Peters “A journey in Image-guided Intervention”

URL:https://lcsr.jhu.edu/events/terry-peters/

X-COST-TYPE:free

X-ALT-DESC;FMTTYPE=text/html:\\n\\n\\n

This presentation overviews a number of the projects related to image-guid

ed intervention that have taken place in my lab at the Robarts Research In

stitute at Western University in recent years. Projects cover applications

in Image-guided Neurosurgery\, Cardiac surgery\, as well as the role of s

imulation phantoms and technologies such as motion magnification and mixed re

ality in image-guided interventions.

\nDr. Terry Peters is a Scientist in the Imaging Research L

aboratories at the Robarts Research Institute\, London\, ON\, Canada\, and

is Professor Emeritus in the Departments of Medical Imaging and Medical B

iophysics\, and the School of Biomedical Engineering\, at Western Universi

ty. He obtained his PhD in Electrical Engineering at the University of Can

terbury in Christchurch\, NZ\, in the field of image reconstruction for CT in

1974\, and following some time as a Medical Physicist at the Christchurch

Hospital\, joined the Montreal Neurological Institute at McGill Universi

ty as a research scientist in 1978. In 1997 he joined the Imaging Researc

h Labs at the Robarts Research Institute at Western University in London\, C

anada\, where he expanded his research focus to encompass image-guided pro

cedures in multiple organ systems. He has authored over 350 peer-reviewed

papers\, books and book chapters\, and has mentored over 100 trainees.

Dr. Peters is a Fellow of several academic and professional societies\, inclu

ding the IEEE\, the MICCAI Society\, and the Royal Society of Canada.

\n

END:VEVENT

BEGIN:VEVENT

UID:ai1ec-12619@lcsr.jhu.edu

DTSTAMP:20240427T081404Z

CATEGORIES:

CONTACT:Ashley Moriarty\; amoriar2@jhu.edu\; https://wse.zoom.us/s/94623801

186

DESCRIPTION:Link for Live Seminar\nLink for Recorded seminars – 2021/2022 s

chool year\n \nHow many skills do you think you have? Mark Savage\, Life Desi

gn Educator for Engineering Masters Students\, will explain how the truth ma

y far exceed your estimate. Knowing\, understanding\, and communicating

your major skills will prove useful as you pursue jobs and internships.\n

DTSTART;TZID=America/New_York:20220216T120000

DTEND;TZID=America/New_York:20220216T130000

LOCATION:https://wse.zoom.us/s/94623801186

SEQUENCE:0

SUMMARY:LCSR Seminar: Mark Savage (Life Design Educator) “Skills”

URL:https://lcsr.jhu.edu/events/tbd-2/

X-COST-TYPE:free

X-ALT-DESC;FMTTYPE=text/html:\\n\\n\\nHow many skills do you think you have?

Mark Savage\, Life Design Educator for Engineering Masters Students\, will

explain how the truth may far exceed your estimate. Knowing\, understand

ing\, and communicating your major skills will prove useful as you pursue

jobs and internships.

\nDr. Ghazi\, MD\, F

EBU\, MHPE\, received his medical education from Cairo University\, Egypt\,

in 2000\, where he also completed his Urology residency (2001-2005). He comp

leted a series of fellowships in minimally invasive urological surgery in

Paris and Austria (2009-2011)\, where he received accreditation from the E

uropean Board of Urology. He completed an Endourology and robotic surgery

fellowship at the University of Rochester Medical Center\, New York (2011-

2013)\, after which he was appointed Assistant Professor of Urology at the Un

iversity of Rochester (2013).

\nDr. Ghazi specializes in the diagnos

is and minimally invasive treatment of urological cancers as well as complex

stone disease. In addition\, he has pursued research grants in education\, simu

lation research\, and surgical training. To enhance his educational backgrou

nd\, he was awarded the George Corner Deans Teaching fellowship (2014-2016

)\, completed the Harvard Macy Institute program for Educators in Health P

rofessions in 2016\, and completed a Master’s in Health Professions Education program a

t the Warner School of Education\, University of Rochester (2016-2020). He

is currently enrolled in a 2-year Senior Leadership Education and Develop

ment Program at the University of Rochester.

\n

END:VEVENT

BEGIN:VEVENT

UID:ai1ec-12625@lcsr.jhu.edu

DTSTAMP:20240427T081404Z

CATEGORIES:

CONTACT:Ashley Moriarty\; amoriar2@jhu.edu\; https://wse.zoom.us/s/94623801

186

DESCRIPTION:Link for Live Seminar\nLink for Recorded seminars – 2021/2022 s

chool year\n \nAbstract:\nThe pandemic exacerbated inequities faced by peo

ple with disabilities and healthcare workers — both are at high risk of ad

verse physical and mental health outcomes. Robots alone are not going to f

ix these major societal problems\; however\, our work explores how we can

design technology to lessen the burden of systemic ableism and healthcare

system stress. I will discuss several of our recent projects in acute care

and community health contexts. In acute care\, we are building hospital-b

ased robots to support the clinical workforce\, to support item delivery\,

telemedicine\, and decision support. In community health\, we are creatin

g interactive and adaptive systems that aim to extend the reach of cogniti

ve neurorehabilitative therapies\, provide respite to overburdened caregiv

ers\, and explore how technology might serve as a means for mediating posi

tive interactions during hardship. We focus on building robots that can ad

aptively team with and longitudinally learn from people\, and personalize

and tailor their behavior.\n \nBiography:\nDr. Laurel Riek is a professor

in Computer Science and Engineering at the University of California\, San

Diego\, with a joint appointment in the Department of Emergency Medicine\,

and is affiliated with the Contextual Robotics Institute and Design Lab.

Dr. Riek directs the Healthcare Robotics Lab and leads research in human-r

obot teaming and health informatics\, with a focus on autonomous robots th

at work proximately with people. Riek’s current research interests include

long-term learning\, robot perception\, and personalization\, with applic

ations in acute care\, neurorehabilitation\, and home health. Dr. Riek rec

eived a Ph.D. in Computer Science from the University of Cambridge\, and B

.S. in Logic and Computation from Carnegie Mellon. Riek served as a Senior

Artificial Intelligence Engineer and Roboticist at The MITRE Corporation

from 2000-2008\, working on learning and vision systems for robots\, and h

eld the Clare Boothe Luce chair in Computer Science and Engineering at the

University of Notre Dame from 2011-2016. Dr. Riek has received the NSF CA

REER Award\, AFOSR Young Investigator Award\, Qualcomm Research Award\, an

d was named one of ASEE’s 20 Faculty Under 40. Dr. Riek is the HRI 2023 Ge

neral Co-Chair and served as the Program Co-Chair for HRI 2020\, and serve

s on the editorial boards of T-RO and THRI.

DTSTART;TZID=America/New_York:20220302T120000

DTEND;TZID=America/New_York:20220302T130000

LOCATION:https://wse.zoom.us/s/94623801186

SEQUENCE:0

SUMMARY:LCSR Seminar: Laurel Riek “Robots in Hospitals and in the Community

: Supporting Wellbeing and Furthering Health Equity”

URL:https://lcsr.jhu.edu/events/laurel-riek/

X-COST-TYPE:free

X-ALT-DESC;FMTTYPE=text/html:\\n\\n\\n

The pandemic exacerbated inequities faced by people with disabilities and

healthcare workers — both are at high risk of adverse physical and mental

health outcomes. Robots alone are not going to fix these major societal pr

oblems\; however\, our work explores how we can design technology to lesse

n the burden of systemic ableism and healthcare system stress. I will disc

uss several of our recent projects in acute care and community health cont

exts. In acute care\, we are building hospital-based robots to support the

clinical workforce\, to support item delivery\, telemedicine\, and decisi

on support. In community health\, we are creating interactive and adaptive

systems that aim to extend the reach of cognitive neurorehabilitative the

rapies\, provide respite to overburdened caregivers\, and explore how tech

nology might serve as a means for mediating positive interactions during h

ardship. We focus on building robots that can adaptively team with and lon

gitudinally learn from people\, and personalize and tailor their behavior.

\nDr. Laurel Riek is

a professor in Computer Science and Engineering at the University of Calif

ornia\, San Diego\, with a joint appointment in the Department of Emergenc

y Medicine\, and is affiliated with the Contextual Robotics Institute and

Design Lab. Dr. Riek directs the Healthcare Robotics Lab and leads researc

h in human-robot teaming and health informatics\, with a focus on autonomo

us robots that work proximately with people. Riek’s current research inter

ests include long-term learning\, robot perception\, and personalization\,

with applications in acute care\, neurorehabilitation\, and home health.

Dr. Riek received a Ph.D. in Computer Science from the University of Cambr

idge\, and B.S. in Logic and Computation from Carnegie Mellon. Riek served

as a Senior Artificial Intelligence Engineer and Roboticist at The MITRE

Corporation from 2000-2008\, working on learning and vision systems for ro

bots\, and held the Clare Boothe Luce chair in Computer Science and Engine

ering at the University of Notre Dame from 2011-2016. Dr. Riek has receive

d the NSF CAREER Award\, AFOSR Young Investigator Award\, Qualcomm Researc

h Award\, and was named one of ASEE’s 20 Faculty Under 40. Dr. Riek is the

HRI 2023 General Co-Chair and served as the Program Co-Chair for HRI 2020

\, and serves on the editorial boards of T-RO and THRI.

\n

END:VEVENT

BEGIN:VEVENT

UID:ai1ec-12635@lcsr.jhu.edu

DTSTAMP:20240427T081404Z

CATEGORIES:

CONTACT:Ashley Moriarty\; amoriar2@jhu.edu\; https://wse.zoom.us/s/94623801

186

DESCRIPTION:Link for Live Seminar\nLink for Recorded seminars – 2021/2022 s

chool year\n \nAbstract:\nWhile human interaction remains key to a caring

treatment\, medical robotics holds the potential to improve surgical proce

sses through enabling scaling of forces and actuation\, providing safe and

individual treatments to patients\, and allowing for efficient use of hea

lth care personnel and resources. Machine learning algorithms and standard

ization of processes can increase the quality of medical diagnosis and tre

atments\, particularly when analyzing large quantities of data. Technical

and robotic systems can thus support the medical staff in all steps of a m

edical process.\nThis talk introduces several assistive robotic systems fo

r minimally invasive surgical procedures being researched at the Health Ro

botics and Automation Lab at KIT\, Germany. On the one hand\, we will discuss

steerable flexible robotic tools for medical applications that require del

icate tissue handling. On the other hand\, cognitive robotic surgeons and

augmented reality support in the operating room are presented for applicat

ion in laparoscopy and neurosurgery.\n \nBiography:\nFranziska Mathis-Ullr

ich is Assistant Professor for Medical Robotics at the Karlsruhe Institute

of Technology (KIT) in Germany. Her primary research focus is on minimall

y invasive and cognition-controlled robotic systems and embedded machine l

earning with emphasis on applications in surgery. She received her B.Sc. a

nd M.Sc. degrees in mechanical engineering and robotics in 2009 and 2012\, respectively\, a

nd obtained her Ph.D. in Microrobotics from ETH Zurich in 2017.

Since 2019\, she has been an Assistant Professor with the Health Robo

tics and Automation Laboratory at KIT.\nProf. Mathis-Ullrich is vice-presi

dent of the German Society for Computer- and Robot-assisted Surgery (CURAC

) and has received the IEEE ICRA Best Paper Award in Medical Robotics (201

4) and the IEEE BioRob Best Student Paper Award (2016)\, and twice won\, with he

r team\, first prize in the ICRA Microassembly Challenge (2014 & 2015).

Furthermore\, she made it onto the prestigious Forbes “30 under 30” list (

2017).

DTSTART;TZID=America/New_York:20220309T120000

DTEND;TZID=America/New_York:20220309T130000

LOCATION:https://wse.zoom.us/s/94623801186

SEQUENCE:0

SUMMARY:LCSR Seminar: Franziska Mathis-Ullrich “Cognitive Robotics and Embe

dded AI for minimally invasive Surgery”

URL:https://lcsr.jhu.edu/events/franziska-mathis-ullrich/

X-COST-TYPE:free

X-ALT-DESC;FMTTYPE=text/html:\\n\\n\\n

While human interaction remains key to a caring treatment\, medical roboti

cs holds the potential to improve surgical processes through enabling scal

ing of forces and actuation\, providing safe and individual treatments to

patients\, and allowing for efficient use of health care personnel and res

ources. Machine learning algorithms and standardization of processes can i

ncrease the quality of medical diagnosis and treatments\, particularly whe

n analyzing large quantities of data. Technical and robotic systems can th

us support the medical staff in all steps of a medical process.

\nTh

is talk introduces several assistive robotic systems for minimally invasiv

e surgical procedures being researched at the Health Robotics and Automati

on Lab at KIT\, Germany. On the one hand\, we will discuss steerable flexible

robotic tools for medical applications that require delicate tissue handli

ng. On the other hand\, cognitive robotic surgeons and augmented reality s

upport in the operating room are presented for application in laparoscopy

and neurosurgery.

\nFr

anziska Mathis-Ullrich is Assistant Professor for Medical Robotics at the

Karlsruhe Institute of Technology (KIT) in Germany. Her primary research f

ocus is on minimally invasive and cognition-controlled robotic systems and

embedded machine learning with emphasis on applications in surgery. She r

eceived her B.Sc. and M.Sc. degrees in mechanical engineering and robotics

in 2009 and 2012\, respectively\, and obtained her Ph.D. in Microrobotics from ETH

Zurich in 2017. Since 2019\, she has been an Assistant Professor w

ith the Health Robotics and Automation Laboratory at KIT.

\nProf. Ma

this-Ullrich is vice-president of the German Society for Computer- and

Robot-assisted Surgery (CURAC) and has received the IEEE ICRA Best P

aper Award in Medical Robotics (2014) and the IEEE BioRob Best Student Paper

Award (2016)\, and twice won\, with her team\, first prize in the ICRA Micr

oassembly Challenge (2014 & 2015). Furthermore\, she made it onto the pres

tigious Forbes “30 under 30” list (2017).

\n

END:VEVENT

BEGIN:VEVENT

UID:ai1ec-12682@lcsr.jhu.edu

DTSTAMP:20240427T081404Z

CATEGORIES:

CONTACT:Jamie Seward\; 1-800-JHU-JHU1 (548-5481)\; alumevents@jhu.edu\; htt

ps://events.jhu.edu/form/roboticsinhealthcare

DESCRIPTION: \n\nSponsored by the Hopkins Robotics Alumni Network\, the Lab

oratory for Computational Sensing + Robotics\, and the Healthcare Affinity

\nJoin us as we hear from Dr. Ayushi Sinha\, Senior Scientist in the Preci

sion Diagnosis & Image Guided Therapy department at Philips Research North

America. Dr. Sinha will discuss her time at Hopkins\, her career journey\

, and her current role. We’ll have time for Q&A with our speaker and time

to network with one another. This program will be presented by Zoom. A lin

k will be shared with you in advance.\nDisclaimer: The perspectives and op

inions expressed by the speaker(s) during this program are those of the sp

eaker(s) and not\, necessarily\, those of Johns Hopkins University and the

scheduling of any speaker at an alumni event or program does not constitu

te the University’s endorsement of the speaker’s perspectives and opinions

.\n\n\n\n\n\n\nAyushi Sinha\nSenior Scientist\, Philips\n\n\nAyushi Sinha

is a Senior Scientist in the Precision Diagnosis & Image Guided Therapy d

epartment at Philips Research North America. She currently leads a project

focused on using machine learning to improve workflow during X-ray guided

minimally invasive procedures and has worked on improving guidance during

biopsy procedures in her previous roles at Philips. She also leads a grou

p focused on generating intellectual property around machine learning solu

tions for X-ray guided interventions.\nAyushi completed her Ph.D. at Johns

Hopkins University with Russ Taylor and Greg Hager in the Department of C

omputer Science with a focus on using statistical shape models to improve

guidance during endoscopic sinus procedures. She continued at Hopkins as a

postdoctoral fellow and research faculty to explore unsupervised learning

in image-based tool tracking. Before her Ph.D.\, Ayushi received a Master

of Science in Engineering degree in Computer Science at Hopkins working w

ith Misha Kazhdan\, and a Bachelor of Science degree in Computer Science a

nd a Bachelor of Arts degree in Mathematics at Providence College.\n\nFOLL

OW\nLinkedIn\nPersonal Website

DTSTART;TZID=America/New_York:20220311T120000

DTEND;TZID=America/New_York:20220311T130000

SEQUENCE:0

SUMMARY:Robotics in Healthcare: A Conversation with Ayushi Sinha\, PhD (Eng

ineering ’18)

URL:https://lcsr.jhu.edu/events/robotics-in-healthcare-a-conversation-with-

ayushi-sinha-phd-engineering-18/

X-COST-TYPE:free

X-WP-IMAGES-URL:thumbnail\;https://lcsr.jhu.edu/wp-content/uploads/2022/01/

Alumni.png\;600\;330\,medium\;https://lcsr.jhu.edu/wp-content/uploads/2022

/01/Alumni.png\;600\;330\,large\;https://lcsr.jhu.edu/wp-content/uploads/2

022/01/Alumni.png\;600\;330\,full\;https://lcsr.jhu.edu/wp-content/uploads

/2022/01/Alumni.png\;600\;330

X-ALT-DESC;FMTTYPE=text/html:\\n\\n\\n\n

\n

\n\n

\n

Ayushi Sinha is a Senior Scientist

in the Precision Diagnosis & Image Guided Therapy department at Philips Re

search North America. She currently leads a project focused on using machi

ne learning to improve workflow during X-ray guided minimally invasive pro

cedures and has worked on improving guidance during biopsy procedures in h

er previous roles at Philips. She also leads a group focused on generating

intellectual property around machine learning solutions for X-ray guided

interventions.

\n

Ayushi completed her Ph.D. at Johns Hopkins Univers

ity with Russ Taylor and Greg Hager in the Department of Computer Science

with a focus on using statistical shape models to improve guidance during

endoscopic sinus procedures. She continued at Hopkins as a postdoctoral fe

llow and research faculty to explore unsupervised learning in image-based

tool tracking. Before her Ph.D.\, Ayushi received a Master of Science in E

ngineering degree in Computer Science at Hopkins working with Misha Kazhda

n\, and a Bachelor of Science degree in Computer Science and a Bachelor of

Arts degree in Mathematics at Providence College.

\n

\n

\n

\n

<a class='speaker_sm_link m-r-10' href='https://www.cs.jhu.edu/~ayushis/#intro' target='_blank' rel='noopener'>Personal Website</a>

\n

\n\n

END:VEVENT

BEGIN:VEVENT

UID:ai1ec-12644@lcsr.jhu.edu

DTSTAMP:20240427T081404Z

CATEGORIES:

CONTACT:Ashley Moriarty\; amoriar2@jhu.edu\; https://wse.zoom.us/s/94623801

186

DESCRIPTION:Link for Live Seminar\nLink for Recorded seminars – 2021/2022 s

chool year\n \nMid-term Spring Semester can usher in the interview season

for many students seeking internship or full-time employment opportunities.

Mark Savage\, Life Design Educator for Engineering Masters Students\, wi

ll walk you through what to expect and how to ace the job interview. Time

permitting\, we may also discuss the Elevator Pitch in preparation for yo

ur upcoming Robotics Industry Day. Remember to convey some of those 800 s

kills that relate to some of the jobs you’ll be discussing.\n

DTSTART;TZID=America/New_York:20220316T120000

DTEND;TZID=America/New_York:20220316T130000

LOCATION:https://wse.zoom.us/s/94623801186

SEQUENCE:0

SUMMARY:LCSR Seminar: Mark Savage “Interviews”

URL:https://lcsr.jhu.edu/events/mark-savage-2/

X-COST-TYPE:free

X-ALT-DESC;FMTTYPE=text/html:\\n\\n\\n

\\n\\n

\n

\n

\n

Mid-term Spring Semester can usher

in the interview season for many students seeking internship or full-time e

mployment opportunities. Mark Savage\, Life Design Educator for Engineeri

ng Masters Students\, will walk you through what to expect and how to ace

the job interview. Time permitting\, we may also discuss the Elevator Pit

ch in preparation for your upcoming Robotics Industry Day. Remember to co

nvey some of those 800 skills that relate to some of the jobs you’ll be di

scussing.

\n

\n

END:VEVENT

BEGIN:VEVENT

UID:ai1ec-12449@lcsr.jhu.edu

DTSTAMP:20240427T081404Z

CATEGORIES:

CONTACT:Ashley Moriarty\; 410-516-6841\; ashleymoriarty@jhu.edu

DESCRIPTION:Update Jan 28: Industry Day will now be virtual\, as we cannot

predict the COVID climate. To reduce Zoom fatigue\, we are splitting the

event into two half days: Monday March 21\, 1-4pm and Tuesday March 22\, 1-4pm.\n2022 Industry Day Agenda/Program

\n\n\n\nMonday 3/21\nZoom\n\n\n1:00 pm\nWelcome WSE: Larry Nagahara\, Asso

ciate Dean for Research\n\n\n1:05 pm\nIntroduction to LCSR: Russell H. Tay

lor\, Director\n\n\n1:25 pm\nLCSR Education: Louis Whitcomb\, Deputy Direc

tor\n\n\n1:40 pm\nStudent Research Talk 1 – Max Li\n\n\n1:50 pm\nStudent R

esearch Talk 2 – Will Pryor\n\n\n2:00 pm\nStudent Research Talk 3 – Neha T

homas\n\n\n2:10 pm\nStudent Research Talk 4 – Filip Aronshtein and Peter W

eiss\n\n\n2:20 pm\nBreak\n\n\n2:30 pm\nJHTV – Seth Zonies\n\n\n2:45 pm\nIn

dustry Talk – Gouthami Chintalapani\, Siemens\n\n\n3:05 pm\nIndustry Talk

– Vinutha Kallem\, Waymo\n\n\n3:25 pm\nBreak\n\n\n3:35 pm\nNew Faculty Tal

k – Axel Krieger\n\n\n3:55 pm\nNew Faculty Talk – Mathias Unberath\n\n\n4:

15 pm\nClosing: Russell H. Taylor\, Director\n\n\n\n \n\n\n\nTuesday 3/22

\nGather Town:\n\n\n1:00-3:00pm\nPoster and Demo Session\n\n\n3:00-4:00pm

\nStudent and Industry Resume Review\n\n\n4:00-5:00pm\nNetworking Receptio

n\n\n\n\n \nThe Laboratory for Computational Sensing and Robotics will hig

hlight its elite robotics students and showcase cutting-edge research proj

ects in areas that include Medical Robotics\, Extreme Environments Robotic

s\, Human-Machine Systems for Manufacturing\, BioRobotics and more.\nRobot

ics Industry Day will provide top companies and organizations in the priva

te and public sectors with access to the LCSR’s forward-thinking\, solutio

n-driven students. The event will also serve as an informal opportunity to

explore university-industry partnerships.\nYou will experience dynamic pr

esentations and discussions\, observe live demonstrations\, and participat

e in speed networking sessions that afford you the opportunity to meet Joh

ns Hopkins’ most talented robotics students before they graduate.\nPlease c

ontact Ashley Moriarty if you have any questions.\n\nDownload our NEW 2022

Industry Day Book\n \nTickets: https://forms.gle/YUfHzMXBy6t6FdBn8.

DTSTART;TZID=America/New_York:20220321T130000

DTEND;TZID=America/New_York:20220321T160000

LOCATION:Zoom

SEQUENCE:0

SUMMARY:2022 JHU Robotics Industry Day

URL:https://lcsr.jhu.edu/events/jhu-robotics-industry-day-2022/

X-COST-TYPE:external

X-WP-IMAGES-URL:thumbnail\;https://lcsr.jhu.edu/wp-content/uploads/2017/11/

6722-LCSR-book-final-pdf.jpg\;553\;716\,medium\;https://lcsr.jhu.edu/wp-co

ntent/uploads/2017/11/6722-LCSR-book-final-pdf.jpg\;553\;716\,large\;https

://lcsr.jhu.edu/wp-content/uploads/2017/11/6722-LCSR-book-final-pdf.jpg\;5

53\;716\,full\;https://lcsr.jhu.edu/wp-content/uploads/2017/11/6722-LCSR-b

ook-final-pdf.jpg\;553\;716

X-ALT-DESC;FMTTYPE=text/html:\\n\\n\\n

\\n\\n

Update Jan 28

: Industry Day will now be virtual\, as we cannot predict the COVID climate.

To reduce Zoom fatigue\, we are splitting the event into two half days:

Monday March 21\, 1-4pm and Tuesday March 22\, 1-4pm.

\n

2022 Industry Day Agenda/Program

\n

| Monday 3/21 | Zoom |
| 1:00 pm | Welcome WSE: Larry Nagahara\, Associate Dean for Research |
| 1:05 pm | Introduction to LCSR: Russell H. Taylor\, Director |
| 1:25 pm | LCSR Education: Louis Whitcomb\, Deputy Director |
| 1:40 pm | Student Research Talk 1 – Max Li |
| 1:50 pm | Student Research Talk 2 – Will Pryor |
| 2:00 pm | Student Research Talk 3 – Neha Thomas |
| 2:10 pm | Student Research Talk 4 – Filip Aronshtein and Peter Weiss |
| 2:20 pm | Break |
| 2:30 pm | JHTV – Seth Zonies |
| 2:45 pm | Industry Talk – Gouthami Chintalapani\, Siemens |
| 3:05 pm | Industry Talk – Vinutha Kallem\, Waymo |
| 3:25 pm | Break |
| 3:35 pm | New Faculty Talk – Axel Krieger |
| 3:55 pm | New Faculty Talk – Mathias Unberath |
| 4:15 pm | Closing: Russell H. Taylor\, Director |

\n\n

\n

\n

| Tuesday 3/22 | Gather Town |
| 1:00-3:00pm | Poster and Demo Session |
| 3:00-4:00pm | Student and Industry Resume Review |
| 4:00-5:00pm | Networking Reception |

\n

\n

The Laboratory for Computa

tional Sensing and Robotics will highlight its elite robotics students and

showcase cutting-edge research projects in areas that include Med

ical Robotics\, Extreme Environments Robotics\, Human-Machine Systems for

Manufacturing\, BioRobotics and more.

\n

Robotics Industry D

ay will provide top companies and organizations in the private and public

sectors with access to the LCSR’s forward-thinking\, solution-driven stude

nts. The event will also serve as an informal opportunity to explore unive

rsity-industry partnerships.

\n

You will experience dynamic presentat

ions and discussions\, observe live demonstrations\, and participate in sp

eed networking sessions that afford you the opportunity to meet Johns Hopk

ins’ most talented robotics students before they graduate.

\n

Please c

ontact Ashley Moriarty if you have any questions.

\n

\n

\n

\n

\n

\n\n


\n

\n

Tickets: https://forms.gle/YUfHzMXBy6t6FdBn8

.

X-TICKETS-URL:https://forms.gle/YUfHzMXBy6t6FdBn8

END:VEVENT

BEGIN:VEVENT

UID:ai1ec-12632@lcsr.jhu.edu

DTSTAMP:20240427T081404Z

CATEGORIES:

CONTACT:Ashley Moriarty\; amoriar2@jhu.edu\; https://wse.zoom.us/s/94623801

186

DESCRIPTION:Link for Live Seminar\nLink for Recorded seminars – 2021/2022 s

chool year\n \nAbstract:\nLocomotion in living systems and bio-inspired ro

bots requires the generation and control of oscillatory motion. While a co

mmon method to generate motion is through modulation of time-dependent “cl

ock” signals\, in this talk we will motivate and study an alternative meth

od of oscillatory generation through autonomous limit-cycle systems. Limit

-cycle oscillators for robotics have many desirable properties including a

daptive behaviors\, entrainment between oscillators\, and potential simpli

fication of motion control. I will present several examples of the generat

ion and control of autonomous oscillatory motion in bio-inspired robotics.

First\, I will describe our recent work to study the dynamics of wingbeat

oscillations in “asynchronous” insects and how we can build these behavio

rs into micro-aerial vehicles. In the second part of this talk I will desc

ribe how limit-cycle gait generation in collective robots can enable swarm

s to synchronize their movement through contact and without communication.

More broadly in this talk I hope to motivate why we should look to autono

mous dynamical systems for designing and controlling emergent locomotor be

haviors in bio-inspired robotics.\n \nBiography:\nDr. Nick Gravish receive

d his PhD from Georgia Tech\, where he used robots as physical models to mot

ivate and study aspects of biological locomotion. During his postdoc\, Grav

ish worked in the microrobotics lab of Rob Wood at Harvard\, where he gain

ed expertise in designing and studying insect-scale robots. Gravish is cur

rently an assistant professor at UC San Diego in the Mechanical and Aerosp

ace Engineering department. His lab bridges the gap between bio-inspiratio

n\, biomechanics\, and robotics\, towards the development of new bio-inspi

red robotic technologies to improve the adaptability and resilience of mob

ile robots.

DTSTART;TZID=America/New_York:20220330T120000

DTEND;TZID=America/New_York:20220330T130000

LOCATION:https://wse.zoom.us/s/94623801186

SEQUENCE:0

SUMMARY:LCSR Seminar: Nick Gravish “Design and control of emergent oscillat

ions for flapping-wing flyers and synchronizing swarms”

URL:https://lcsr.jhu.edu/events/nick-gravish/

X-COST-TYPE:free

X-ALT-DESC;FMTTYPE=text/html:\\n\\n\\n

\\n\\n

\n

\n

\n

Abstract:

\n

Locomotion in living systems and bio-inspired robots requires the generati

on and control of oscillatory motion. While a common method to generate mo

tion is through modulation of time-dependent “clock” signals\, in this tal

k we will motivate and study an alternative method of oscillatory generati

on through autonomous limit-cycle systems. Limit-cycle oscillators for rob

otics have many desirable properties including adaptive behaviors\, entrai

nment between oscillators\, and potential simplification of motion control

. I will present several examples of the generation and control of autonom

ous oscillatory motion in bio-inspired robotics. First\, I will describe o

ur recent work to study the dynamics of wingbeat oscillations in “asynchro

nous” insects and how we can build these behaviors into micro-aerial vehic

les. In the second part of this talk I will describe how limit-cycle gait

generation in collective robots can enable swarms to synchronize their mov

ement through contact and without communication. More broadly in this talk

I hope to motivate why we should look to autonomous dynamical systems for

designing and controlling emergent locomotor behaviors in bio-inspired ro

botics.

\n

\n

Biography:

\n

Dr. Nick Gra

vish received his PhD from Georgia Tech\, where he used robots as physical m

odels to motivate and study aspects of biological locomotion. During his p

ostdoc\, Gravish worked in the microrobotics lab of Rob Wood at Harvard\, w

here he gained expertise in designing and studying insect-scale robots. Gr

avish is currently an assistant professor at UC San Diego in the Mechanica

l and Aerospace Engineering department. His lab bridges the gap between bi

o-inspiration\, biomechanics\, and robotics\, towards the development of n

ew bio-inspired robotic technologies to improve the adaptability and resil

ience of mobile robots.

\n

END:VEVENT

BEGIN:VEVENT

UID:ai1ec-12639@lcsr.jhu.edu

DTSTAMP:20240427T081404Z

CATEGORIES:

CONTACT:Ashley Moriarty\; amoriar2@jhu.edu\; https://wse.zoom.us/s/94623801

186

DESCRIPTION:Link for Live Seminar\nLink for Recorded seminars – 2021/2022 s

chool year\n \nAbstract:\nDesigning robots for human interaction is a mult

ifaceted challenge involving the robot’s intelligent behavior\, physical f

orm\, mechanical structure\, and interaction schema. Our lab develops and

studies human-centered robots using a combination of methods from AI\, Des

ign\, and Human-Computer Interaction. This talk focuses on three recent p

rojects\, two concerning the design of a new robot\, and one that tackles

designing robots that help human designers.\n \nBiography:\nGuy Hoffman is an Asso

ciate Professor and the Mills Family Faculty Fellow in the Sibley Sch

ool of Mechanical and Aerospace Engineering at Cornell University. Prior t

o that\, he was an Assistant Professor at IDC Herzliya and co-director of th

e IDC Media Innovation Lab. Hoffman holds a Ph.D. from MIT in the field of

human-robot interaction. He heads the Human-Robot Collaboration and Compan

ionship (HRC²) group\, studying the algorithms\, interaction schema\, and

designs enabling close interactions between people and personal robots in

the workplace and at home. Among other achievements\, Hoffman developed the world’s fi

rst human-robot joint theater performance\, and the first real-time improv

ising human-robot jazz duet. His research papers won several top academic

awards\, including Best Paper awards at robotics conferences in 2004\, 200

6\, 2008\, 2010\, 2013\, 2015\, 2018\, 2019\, 2020\, and 2021. His TEDx ta

lk is one of the most viewed online talks on robotics\, watched more than

3 million times.

DTSTART;TZID=America/New_York:20220406T120000

DTEND;TZID=America/New_York:20220406T130000

LOCATION:https://wse.zoom.us/s/94623801186

SEQUENCE:0

SUMMARY:LCSR Seminar: Guy Hoffman “Designing Robots and Designing with Robo

ts”

URL:https://lcsr.jhu.edu/events/guy-hoffman/

X-COST-TYPE:free

X-ALT-DESC;FMTTYPE=text/html:\\n\\n\\n

\\n\\n

\n

\n

\n

Abstract:

\n

Designing robots for human interaction is a multifaceted challenge involvi

ng the robot’s intelligent behavior\, physical form\, mechanical structure

\, and interaction schema. Our lab develops and studies human-centered rob

ots using a combination of methods from AI\, Design\, and Human-Computer I

nteraction. This talk focuses on three recent projects\, two concerning t

he design of a new robot\, and one that tackles designing robots that help

human designers.

\n

\n

Biography:

\n

Gu

y Hoffman is an Associate Professor and the Mills Family Faculty Fellow in th

e Sibley School of Mechanical and Aerospace Engineering at Cornell Univers

ity. Prior to that\, he was an Assistant Professor at IDC Herzliya and co-di

rector of the IDC Media Innovation Lab. Hoffman holds a Ph.D. from MIT in t

he field of human-robot interaction. He heads the Human-Robot Collaboratio

n and Companionship (HRC²) group\, studying the algorithms\, interaction s

chema\, and designs enabling close interactions between people and persona

l robots in the workplace and at home. Among other achievements\, Hoffman developed th

e world’s first human-robot joint theater performance\, and the first real

-time improvising human-robot jazz duet. His research papers won several t

op academic awards\, including Best Paper awards at robotics conferences i

n 2004\, 2006\, 2008\, 2010\, 2013\, 2015\, 2018\, 2019\, 2020\, and 2021.

His TEDx talk is one of the most viewed online talks on robotics\, watche

d more than 3 million times.

\n

END:VEVENT

BEGIN:VEVENT

UID:ai1ec-12649@lcsr.jhu.edu

DTSTAMP:20240427T081404Z

CATEGORIES:

CONTACT:Ashley Moriarty\; amoriar2@jhu.edu\; https://wse.zoom.us/s/94623801

186

DESCRIPTION:Link for Live Seminar\nLink for Recorded seminars – 2021/2022 s

chool year\n \nAbstract:\nMany successful approaches to robotic locomotion

and manipulation operate with high-quality simulation tools. Many such ap

proaches are “bottom-up” in a modeling sense\, accounting for all internal

forces and environmental interactions explicitly. These “bottom-up” model

s are used beforehand (as in reinforcement learning)\, in real time\, or b

oth. However\, various types of robots are getting smaller\, softer\, and m

ore complex (e.g.\, bio-hybrid actuators). Some robots lean on low-

precision manufacturing and fabrication techniques\, and many robots are n

ow being asked to operate in hard-to-characterize\, natural interfaces lik

e the human body. Such attributes can render “bottom-up” simulators imprac

tical for expected use cases on various research frontiers\, such as micro

-biomedical robots and soft robots deployed in uncharacterized environment

s. In this talk I will revisit the reconstruction equation\, a result from

the geometric mechanics literature that offers a “top-down” view of Lagra

ngian systems\, permitting insights into generalizable system behaviors al

ong a spectrum of friction-momentum dominance. I will show how these tools

can permit rapid modeling of high complexity robots in their operating en

vironment without the requirement to specify CAD models or any explicit fo

rces. I will also discuss a related strength and weakness of the approach

resulting from the use of symmetries. Surprisingly\, results in simulation

and hardware indicate that even with eight-jointed systems\, useful behav

ioral models can be computed from tens of cycles of data. This suggests th

at high-degree-of-freedom robots can adjust and excel in situations where

explicit force models are poorly understood. I will also briefly discuss a

framework for robot recovery that leans on these tools as well as a metri

c for a robot’s ability to cover the local space of motions\, computed on

the Lie algebra of the position space. The metric allows primitives to be

valued for their contribution to the space of composed motions rather than

just their individual qualities. Results here include a Dubins car that c

an learn how to turn left (with its steering wheel restricted to only turn

right) in less than a second as well as a robot made of tree branches tha