Dr. Dana R. Yoerger

\nIn the past two decades\, engineers and scientists h

ave used robots to study basic processes in the deep ocean including the M

id Ocean Ridge\, coral habitats\, volcanoes\, and the deepest trenches. We

have also used such vehicles to investigate the environmental impact of t

he Deepwater Horizon oil spill and to investigate ancient and modern shipw

recks. More recently\, we are expanding our efforts to include the mesopel

agic or “twilight zone” which extends vertically in the ocean from about 2

00 to 1000m where sunlight ceases to penetrate. This regime is particular

ly under-explored and poorly understood due in large part to the logistica

l and technological challenges in accessing it. However\, knowledge of th

is vast region is critical for many reasons\, including understanding the

global carbon cycle – and Earth’s climate – and for managing biological re

sources. This talk will show results from our past expeditions and look to

future challenges.

\nDr. Dana Yoerger is a S

enior Scientist at the Woods Hole Oceanographic Institution and a research

er in robotics and autonomous vehicles. He supervises the research and ac

ademic program of graduate students studying oceanographic engineering thr

ough the MIT/WHOI Joint Program in the areas of control\, robotics\, and d

esign. Dr. Yoerger has been a key contributor to the remotely-operated ve

hicle Jason\; to the Autonomous Benthic Explorer known as ABE\; to

the autonomous underwater vehicle Sentry\; to the hybrid remotely operated

vehicle Nereus\, which reached the bottom of the Mariana Trench in 2009\;

and\, most recently\, to Mesobot\, a hybrid robot for midwater exploration.

Dr. Yoerger has gone to sea on ov

er 90 oceanographic expeditions exploring the Mid-Ocean Ridge\, mapping un

derwater seamounts and volcanoes\, surveying ancient and modern shipwrecks

\, studying the environmental effects of the Deepwater Horizon oil spill\,

and the recent effort that located the Voyage Data Recorder from the merc

hant vessel El Faro. His current research focuses on robots for exploring

the midwater regions of the world’s ocean. Dr. Yoerger has served on sever

al National Academies committees and is a member of the Research Board of

the Gulf of Mexico Research Initiative. He has a PhD in mechanical engine

ering from the Massachusetts Institute of Technology and is a Fellow of th

e IEEE.

\nAll models

are wrong\, and too many are directed inward. The Internal Model Principle

of control engineering directs our attention (and modeling proficiency) t

o what makes the world around us patterned and predictable. It says that

driving a model of that patterned or predictable behavior in a feedback lo

op is the only way to achieve perfect tracking or disturbance rejection. I

n the spirit of “some models are useful”\, I will present a control system

model of humans tracking moving targets on a screen using a mouse and cur

sor. Simple analyses reveal this controller’s robustness to visual blankin

g and experiments (even experiments conducted remotely during the pandemic

) provide ample support. Extensions that combine feedforward and feedback

control complete the picture and complement existing literature in human m

otor behavior\, most of which is focused on modeling the system under cont

rol rather than the environment.

\nBrent Gillespie is a

Professor of Mechanical Engineering and Robotics at the University of Mic

higan. He received a Bachelor of Science in Mechanical Engineering from th

e University of California Davis in 1986\, a Master of Music from the San

Francisco Conservatory of Music in 1989\, and a Ph.D. in Mechanical Engine

ering from Stanford University in 1996. His research interests include hap

tic interface\, human motor behavior\, haptic shared control\, and robot-a

ssisted rehabilitation after neurological injury. Prof. Gillespie’s awards

include the Popular Science Invention Award (2016)\, the University of Mi

chigan Provost’s Teaching Innovation Prize (2012)\, and the Presidential E

arly Career Award for Scientists and Engineers (2001).

\nOver 70% of

our world is underwater\, but less than 1% of the world’s oceans have bee

n mapped at resolutions greater than 100m per pixel. Regular inspection\,

mapping\, and data collection in marine environments are essential for a wh

ole host of reasons including gaining a scientific understanding of our pl

anet\, civil infrastructure maintenance\, and safe navigation. However\, m

anual inspection/data collection using divers is expensive\, dangerous\, t

ime-consuming\, and tedious work.

\nIn this talk\, I will

discuss the use of autonomous underwater vehicles (AUVs) and autonomous su

rface vessels (ASVs) to automatically and intelligently map\, inspect\, an

d collect information in unstructured marine environments. In particular\,

we will discuss the problems present in this space as well as the contrib

utions my lab is making towards addressing these problems\, including i) t

he development of a general-purpose marine robotics testbed at BYU\, ii) t

he development of a marine robotics simulator called HoloOcean (https://holoocean.readthedoc

s.io/en/stable/)\, iii) advancements in marine robotic localization us

ing Lie groups\, and iv) preliminary results towards expert-guided topic m

odeling and intelligent data collection.

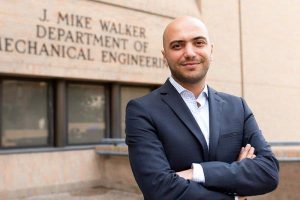

\nDr

. Joshua Mangelson holds PhD and Masters degrees in Robotics from the Univ

ersity of Michigan. After completing his degree\, he served as a post-docto

ral fellow at Carnegie Mellon University before joining the Electrical and

Computer Engineering faculty at Brigham Young University in 2020. His qua

lifications include demonstrated expertise in robotic perception\, mapping

\, and localization with a particular focus on marine robotics. He has ext

ensive experience leading marine robotic field trials in various locations

around the world including San Diego\, Hawaii\, Boston\, northern Michiga

n\, and Utah. In 2018\, his work on multi-robot mapping received the Best

Multi-Robot Paper Award at the IEEE ICRA conference and 1st-Place in the I

EEE OCEANS Student Poster Competition. He is currently serving as an assoc

iate editor for The International Journal of Robotics Research (IJRR) and

the IEEE/RSJ IROS Conference.

\nAbstract: Robotic

systems designed to work alongside people are susceptible to technical an

d unexpected errors. Prior work has investigated a variety of strategies a

imed at repairing people’s trust in the robot after its erroneous operatio

ns. In this work\, we explore the effect of post-error trust repair strate

gies (promise and explanation) on people’s trust in the robot under varyin

g power dynamics (supervisor and subordinate robot). Our results show that

\, regardless of the power dynamics\, promise is more effective at repairi

ng user trust than explanation. Moreover\, people found a supervisor robot

with verbal trust repair to be more trustworthy than a subordinate robot

with verbal trust repair. Our results further reveal that people are prone

to complying with the supervisor robot even if it is wrong. We discuss th

e ethical concerns in the use of supervisor robots and potential interventi

ons to prevent improper compliance in users for more productive human-robo

t collaboration.

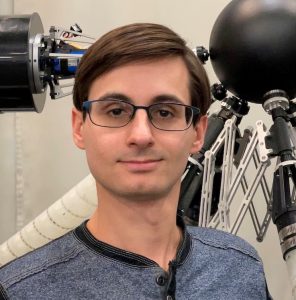

\nBio: Ulas Berk Karli is an MSE student i

n Robotics LCSR\, Johns Hopkins University. He received the Bachelor of Sc

ience degree in Mechanical Engineering and Double Majored in Computer Engi

neering from Koc University\, Istanbul in 2021. His research interests are

Human-Robot Collaboration and Robot Learning for HRI.

\nShiye Cao i

s a first-year Ph.D. student in the Department of Computer Science\, co-ad

vised by Dr. Chien-Ming Huang and Dr. Anqi Liu. She received the Bachelor

of Science degree in Computer Science with a second major in Applied Math

ematics and Statistics from Johns Hopkins University in 2021\, and the Mas

ter of Science in Engineering in Computer Science from Johns Hopkins Univ

ersity in 2022. Her work focuses on user trust and reliance in human-machi

ne collaborative tasks.

\nAbstract: Orb-weaving spider

s are functionally blind and detect prey-generated web vibrations through

vibration sensors at their leg joints to locate and identify prey caught i

n their (near) planar webs. Previous studies focused on how spiders use we

b geometry\, silk properties\, and web pre-tension to modulate vibration s

ensing. Spiders can also dynamically adjust their posture while sensing pr

ey\, which may be a form of active sensing (Hung\, Corver\, Gordus\, 2022\

, APS March Meeting). However\, whether this is true and how it works is p

oorly understood\, due to the difficulty of measuring the dynamics of the enti

re prey-web-spider interaction system all at once. Here\, we developed a r

obophysical model of the system to test this hypothesis of active sensing

and discover its principles. Our model consists of a vibrating prey robot

and a spider robot that can adjust its posture\, with torsional springs at

leg joints and accelerometers to measure joint vibration. Both robots are

attached to a physical web made of cords with qualitatively similar prope

rties to real spider web threads. Load cells measure web pre-tension and a

high-speed camera system measures web vibrations and robot movement. Preli

minary results showed vibration attenuation through the web from the prey

robot. We are currently studying the complex effects of the spider robot’s dyn

amic posture change on vibration propagation across the web and leg joints

\, by systematically varying the parameters of prey robot vibration\, spid

er robot leg posture\, and web pre-tension.

\nBio: Eugene

Lin is a third year PhD student in Dr. Chen Li’s lab (Terradynamics lab).

His work focuses on understanding environmental sensing on suspended\, spa

rse terrain. He received a B.S. in Mechanical Engineering at the Universit

y of California\, San Diego. He recently presented this work at the annual

SICB conference and will present it again at the annual March APS confere

nce.

\nAbstract: The development of untethered soft craw

ling robots programmed to respond to environmental stimuli and precisely m

aneuverable across size scales has been paramount to the fields of soft ro

botics\, drug delivery\, and autonomous smart devices. Of particular relev

ance are reversible thermoresponsive hydrogels\, which swell and shrink in

the temperature range of 30-60 °C for operating such untethered soft r

obots in human physiological and ambient conditions. While crawling has be

en demonstrated by thermoresponsive hydrogels\, they need surface modifica

tions in the form of ratchets\, asymmetric patterning\, or constraints to a

chieve unidirectional motion.

\nHere we demonstrate and validate a n

ew mechanism for untethered\, unidirectional crawling for multisegmented g

el crawlers built from an active thermoresponsive poly (N-isopropyl acryla

mide) (pNIPAM) and passive polyacrylamide (pAAM) on flat unpatterned surfa

ces. By connecting bilayers of different geometries and thicknesses using

a centrally suspended gel linker\, we create a morphological gradient alon

g the fore-aft axis\, which leads to an asymmetry in the contact forces du

ring the swelling and deswelling of our crawler. We thoroughly explain our

mechanism using experiments and finite element simulations and\, using ex

periments\, demonstrate that we can tune the generated asymmetry and\, in

turn\, increase the displacement of the crawler by varying linker stiffnes

s\, morphology\, and the number of bilayer segments. We believe this mecha

nism can be widely applied across fields of study to create the next gener

ation of autonomous shape-changing and smart locomotors.

\nBio: Aish

warya is a 4th year Ph.D. candidate in the lab of Dr. David Gracias at Joh

ns Hopkins University\, USA. Her research focuses on exploring smart mater

ials like stimuli-responsive hydrogels\, combining them with novel pattern

ing methods like 3D/4D printing\, imprint molding\, lithography\, etc.\, a

nd using different mechanical design strategies to create untethered biomi

metic actuators and locomotors across size scales for soft robotics and bi

omedical devices.

\nAbs

tract: Prior error management techniques often do not possess the versatil

ity to appropriately address robot errors across tasks and scenarios. Thei

r fundamental framework involves explicit\, manual error management and im

plicit domain-specific information driven error management\, tailoring the

ir response for specific interaction contexts. We present a framework for

approaching error-aware systems by adding implicit social signals as anoth

er information channel to create more flexibility in application. To suppo

rt this notion\, we introduce a novel dataset (composed of three data coll

ections) with a focus on understanding natural facial action unit (AU) res

ponses to robot errors during physical-based human-robot interactions—vary

ing across task\, error\, people\, and scenario. Analysis of the dataset r

eveals that\, through the lens of error detection\, using AUs as input int

o error management affords flexibility to the system and has the potential

to improve error detection response rate. In addition\, we provide an exa

mple real-time interactive robot error management system using the error-a

ware framework.

\nBio: Maia Stiber is a 4th year Ph.D. can

didate in the Department of Computer Science\, co-advised by Dr. Chien-Min

g Huang and Dr. Russell Taylor. She received a B.S. in Computer Science fr

om Caltech in 2019 and a M.S.E. in Computer Science from Johns Hopkins Uni

versity in 2021. Her work focuses on leveraging natural human responses to

robot errors in an effort to develop flexible error management techniques

in support of effective human-robot interaction.

\nAbstract

: Lack of physical activity has severe negative health consequences for ol

der adults and limits their ability to live independently. Robots have bee

n proposed to help engage older adults in physical activity (PA)\, albeit

with limited success. There is a lack of robust understanding of older adu

lts’ needs and wants from robots designed to engage them in PA. In this pa

per\, we report on the findings of a co-design process where older adults\

, physical therapy experts\, and engineers designed robots to promote PA i

n older adults. We found a variety of motivators for and barriers against

PA in older adults\; we\, then\, conceptualized a broad spectrum of possib

le robotic support and found that robots can play various roles to help ol

der adults engage in PA. This exploratory study elucidated several overarc

hing themes and emphasized the need for personalization and adaptability.

This work highlights key design features that researchers and engineers sh

ould consider when developing robots to engage older adults in PA\, and un

derscores the importance of involving various stakeholders in the design a

nd development of assistive robots.

\nBio: Victor Antony i

s a second-year Ph.D. student in the Department of Computer Science\, advi

sed by Dr. Chien-Ming Huang. He received the Bachelor of Science degree in

Computer Science from the University of Rochester in 2021. His work focus

es on Social Robots for well-being.

\nHaptic devices allow touc

h-based information transfer between humans and intelligent systems\, enab

ling communication in a salient but private manner that frees other sensor

y channels. For such devices to become ubiquitous\, their physical and com

putational aspects must be intuitive and unobtrusive. The amount of inform

ation that can be transmitted through touch is limited in large part by th

e location\, distribution\, and sensitivity of human mechanoreceptors. Not

surprisingly\, many haptic devices are designed to be held or worn at the

highly sensitive fingertips\, yet stimulation using a device attached to

the fingertips precludes natural use of the hands. Thus\, we explore the d

esign of a wide array of haptic feedback mechanisms\, ranging from devices

that can be actively touched by the fingertips to multi-modal haptic actu

ation mounted on the arm. We demonstrate how these devices are effective i

n virtual reality\, human-machine communication\, and human-human communic

ation.

\nAllison Okamura received the BS degr

ee from the University of California at Berkeley\, and the MS and PhD degr

ees from Stanford University. She is the Richard W. Weiland Professor of E

ngineering at Stanford University in the mechanical engineering department

\, with a courtesy appointment in computer science. She is an IEEE Fellow

and is the co-general chair of the 2022 IEEE/RSJ International Conference

on Intelligent Robots and Systems and a deputy director of the Wu Tsai Sta

nford Neurosciences Institute. Her awards include the IEEE Engineering in

Medicine and Biology Society Technical Achievement Award\, IEEE Robotics a

nd Automation Society Distinguished Service Award\, and Duca Family Univer

sity Fellow in Undergraduate Education. Her academic interests include hap

tics\, teleoperation\, virtual reality\, medical robotics\, soft robotics\

, rehabilitation\, and education. For more information\, please see the CHARM Lab website.

DTSTART;TZID=America/New_York:20230308T120000

DTEND;TZID=America/New_York:20230308T130000

LOCATION:Hackerman B17

SEQUENCE:0

SUMMARY:LCSR Seminar: Allison Okamura “Wearable Haptic Devices for Ubiquito

us Communication”

URL:https://lcsr.jhu.edu/events/allison-okamura/

X-COST-TYPE:free

END:VEVENT

BEGIN:VEVENT

UID:ai1ec-13542@lcsr.jhu.edu

DTSTAMP:20240427T103210Z

CATEGORIES:

CONTACT:Christy Brooks

DESCRIPTION:Dr. Mathews earned her PhD in gen

etics from Case Western Reserve University\, as well as a concurrent Maste

r’s in bioethics. She completed a Post-Doctoral Fellowship in genetics at

Johns Hopkins\, and the Greenwall Fellowship in Bioethics and Health Polic

y at Johns Hopkins and Georgetown Universities.

\n\n\n\n| Friday 3/24 | \nLocation: Glass Pavilion – Levering

Hall | \n

\n\n| 8:30 AM | \nRegistration Open a

nd Breakfast | \n

\n\n| 9:00 AM | \nWelcome | \n

\n\n| 9:05 AM | \nIntroduction to LCSR – Russell H. Taylor\, D

irector | \n

\n\n| 9:20 AM | \nLCSR Education – Louis Wh

itcomb\, Deputy Director | \n

\n\n| 9:25 AM | \nIAA – Ja

mes Bellingham and Anton Dahbura | \n

\n\n| 9:30 AM | \n

Student Research Talk – Max Li | \n

\n\n| 9:42 AM | \nSt

udent Research Talk – Divya Ramesh | \n

\n\n| 9:55 AM | \nStudent Research Talk – Michael Kam | \n

\n\n| 10:07 AM |

\nStudent Research Talk – Di Cao | \n

\n\n| 10:20 AM |

\nCoffee Break | \n

\n\n| 10:40 AM | \nJohns Hopkins

Tech Ventures – Seth Zonies | \n

\n\n| 10:55 AM | \nInd

ustry Talk – Ankur Kapoor\, Siemens | \n

\n\n| 11:15 AM | \n

Industry Talk – William Tan\, GE | \n

\n\n| 11:35 AM |

\nNew LCSR Faculty – Alejandro Martin-Gomez\, | \n

\n\n| 1

1:55 AM | \nClosing – Russell H. Taylor\, Director | \n

\n

\n| 12:00 PM | \nLunch – Resume Roundtables | \n

\n\n | \n | \n

\n\n| 1:30-4:00 PM | \nPoster and Demo

Session (Hackerman Hall) | \n

\n\n| 1:45-3:45 PM | \n

Guided Krieger Hall Tours (meet outside Hackerman 134)\n\n

\n | \n | \n

\n\n| 4:00-5:00 PM | \nA

lumni Reception (Shriver Hall – Clipper Room) | \n

\n\n\n

\nThe Laboratory for Computational Sensing and Robotics wi

ll highlight its elite robotics students and showcase cutting-edge researc

h projects in areas that include Medical Robotics\, Extreme Enviro

nments Robotics\, Human-Machine Systems\, BioRobotics and more.\n

Robotics Industry Day will provide top companies and organizations

in the private and public sectors with access to the LCSR’s forward-thinki

ng\, solution-driven students. The event will also serve as an informal op

portunity to explore university-industry partnerships.

\nYou will ex

perience dynamic presentations and discussions\, observe live demonstratio

ns\, and participate in speed networking sessions that afford you the oppo

rtunity to meet Johns Hopkins’ most talented robotics students before they

graduate.

\nPlease contact Ashley Moriarty if you have any que

stions.

\n

\n\n\n\n


\n

\nT

ickets: https://forms.gle/c8DwoVkfnPTsSbcY7.

DTSTART;TZID=America/New_York:20230324T090000

DTEND;TZID=America/New_York:20230324T160000

LOCATION:Levering Hall - Glass Pavilion @ 3400 N Charles St\, Baltimore MD

21218

SEQUENCE:0

SUMMARY:2023 JHU Robotics Industry Day

URL:https://lcsr.jhu.edu/events/jhu-robotics-industry-day-2023/

X-COST-TYPE:external

X-WP-IMAGES-URL:thumbnail\;https://lcsr.jhu.edu/wp-content/uploads/2017/11/

header.png\;872\;130\,medium\;https://lcsr.jhu.edu/wp-content/uploads/2017

/11/header.png\;872\;130\,large\;https://lcsr.jhu.edu/wp-content/uploads/2

017/11/header.png\;872\;130\,full\;https://lcsr.jhu.edu/wp-content/uploads

/2017/11/header.png\;872\;130

X-TICKETS-URL:https://forms.gle/c8DwoVkfnPTsSbcY7

END:VEVENT

BEGIN:VEVENT

UID:ai1ec-13544@lcsr.jhu.edu

DTSTAMP:20240427T103210Z

CATEGORIES:

CONTACT:Christy Brooks\; cbrook53@jhu.edu

DESCRIPTION:\n\n

\nAbstract:

\nThe open ocean is a m

assive 3D ecosystem responsible for absorbing much of Earth’s excess heat

and CO2 emissions produced by humans. A portion of the ocean’s carbon pum

p sequesters atmospheric carbon into the sediments of the deep sea. Quanti

fying the amount of this carbon exported to the deep and identifying the v

ariables driving that export is vital to understanding how we might better

mitigate the deleterious effects of climate change. The Monterey Bay Aqua

rium Research Institute (MBARI) has developed high-endurance mobile robots to invest

igate ocean carbon transport. One of its vehicles\, the Benthic Rover\, has

been working continuously on the seafloor at 4000 m for 6 years\, measuring

the spatial and temporal variability of carbon export from the surface. Th

is long-term dataset has revealed that carbon enters the deep sea in large

pulses of sinking detritus. MBARI is now focused on connecting these carb

on pulses to processes in the upper layers of the ocean. Exploring\, mappi

ng and sampling the upper water column to uncover ocean productivity hotsp

ots (HS) is a key initiative requiring the collaboration of M

BARI’s Long Range Autonomous Underwater Vehicles (LRAUVs) as well as other

complementary vehicles that are able to measure the full ecology of the h

otspots from the microbes to the whales.

\nBio:

\nBrett W. Hob

son received a BS in Mechanical Engineering from San Francisco State Unive

rsity in 1989. He began his ocean engineering career at Deep Ocean Engine

ering in San Leandro California\, developing remotely operated vehicles (R

OVs) and manned submarines. In 1992\, he helped start and run Deep Sea Dis

coveries where he helped develop and operate deep towed sonar and camera s

ystems offshore the US\, Venezuela\, Spain and the Philippines. In 1998\, he joined Nekton Research in Nor

th Carolina to develop bio-inspired underwater vehicles for Navy applicati

ons. After the sale of Nekton Research to iRobot in 2005\, Hobson joined t

he Monterey Bay Aquarium Research Institute (MBARI) where he leads the Lon

g Range Autonomous Underwater Vehicle (AUV) program overseeing the develop

ment and science operations of a fleet of AUVs. He also helped develop MB

ARI’s long-endurance seafloor crawling Benthic Rover. Hobson holds a paten

t on the design of a biomimetic underwater vehicle and has been the Co-PI

on large projects funded by NSF\, NASA\, and DHS aimed at develop

ing novel underwater vehicles for ocean science.

DTSTART;TZID=America/New_York:20230329T120000

DTEND;TZID=America/New_York:20230329T130000

LOCATION:Hackerman B17

SEQUENCE:0

SUMMARY:LCSR Seminar: Brett Hobson “The development of robots for open ocea

n ecology”

URL:https://lcsr.jhu.edu/events/brett-hobson/

X-COST-TYPE:free

END:VEVENT

BEGIN:VEVENT

UID:ai1ec-13546@lcsr.jhu.edu

DTSTAMP:20240427T103210Z

CATEGORIES:

CONTACT:LCSR\; lcsr-admin@jhu.edu

DESCRIPTION:\n\n

\nBenjamin D. Killeen “An Autonom

ous X-ray Image Acquisition and Interpretation System for Assisting Percut

aneous Pelvic Fracture Fixation”

\nAbstract: Percutaneous f

racture fixation involves multiple X-ray acquisitions to determine adequat

e tool trajectories in bony anatomy. In order to reduce time spent adjusti

ng the X-ray imager’s gantry\, avoid excess acquisitions\, and anticipate

inadequate trajectories before penetrating bone\, we propose an autonomous

system for intra-operative feedback that combines robotic X-ray imaging a

nd machine learning for automated image acquisition and interpretation\, r

espectively. Our approach reconstructs an appropriate trajectory in a two-

image sequence\, where the optimal second viewpoint is determined based on

analysis of the first image. The reconstructed corridor and K-wire pose a

re compared to determine likelihood of cortical breach\, and both are visu

alized for the clinician in a mixed reality environment that is spatially

registered to the patient and delivered by an optical see-through head-mou

nted display. We assess the upper bounds on system performance through in

silico evaluation across 11 CTs with fractures present\, in which the corr

idor and K-wire are adequately reconstructed. In post-hoc analysis of radi

ographs across 3 cadaveric specimens\, our system determines the appropria

te trajectory to within 2.8 ± 1.3 mm and 2.7 ± 1.8°. An expert user study

with an anthropomorphic phantom demonstrates how our autonomous\, integrat

ed system requires fewer images and lower movement to guide and confirm ad

equate placement compared to current clinical practice.

\nBio: A 4th

year Ph.D. candidate at Johns Hopkins University\, Benjamin D. Killeen is interested in intelligent s

urgical systems that improve patient outcomes. His recent work involves re

alistic simulation of interventional X-ray imaging for the purpose of deve

loping AI-integrated surgical systems. Benjamin is a member of the Advance

d Robotics and Computationally Augmented Environments (ARCADE) research gr

oup\, led by Mathias Unberath\, as well as the President of the LCSR Gradu

ate Student Association (GSA) and Sports Officer for the MICCAI Student Bo

ard. In 2019\, he earned a B.A. in Computer Science with Honors from the U

niversity of Chicago\, with a minor in Physics\, and he has completed inte

rnships at IBM Research – Almaden\, Epic Systems\, and Intuitive Surgical.

In his spare time\, he enjoys bouldering and creative writing.

\n

\n

Divya Ramesh “Studying terrestrial fish locomotion on wet

deformable substrates”

\nAbstract: Many amphibious fishes c

an make forays onto land. The water-land interface often has wet deformabl

e substrates like mud and sand\, whose strength changes as they get dryer

or wetter\, challenging locomotion. Most previous terrestrial locomotion s

tudies of fishes focused on quantifying kinematics\, muscle control\, and

functional morphology. Yet\, without quantifying how the complex mechanics

of wet deformable substrates affect ground reaction forces during locomot

ion\, we cannot fully understand how these locomotor features interact wit

h the environment to permit performance. Here\, we used controlled mud as

a model wet deformable substrate and developed methods to prepare mud into

spatially uniform and temporally stable states and tools to characterize

its strength. As a first step to understand how mud strength impacts locomo

tion\, we studied the Atlantic mudskipper (Periophthalmus barbarus) moving on a thicker and a thinner mud\, which differ in strength by a

factor of two. The animal performed similar “crutching” walks on mud of bo

th strengths\, with only a slight reduction in speed on the thinner mud (f

rom 0.39 ± 0.12 to 0.32 ± 0.14 body length/s\, P < 0.05\, ANOVA).

However\, it jumped more frequently on the thinner mud (from 1.2 ± 0.7 to

3.2 ± 1.6 times per minute\, P < 0.05\, ANOVA)\, likely because the

thinner mud stuck more to the belly and fins and hindered walking.

\nBio:

Divya Ramesh is a fourth year PhD student in Dr. Chen Li’s lab (Terradynam

ics lab). Her current work focuses on studying and understanding amphibiou

s fish locomotion on wet deformable substrates. Her previous work focused

on using contact sensing to study and understand limbless locomotion of sn

akes and snake robots on 3-D terrains. She received a BTech in Electronics

and Communication Engineering from VIT University (Vellore\, India) and MS

E in Electrical Engineering from University of Pennsylvania. She has publi

shed in IEEE RA-L (presented at ICRA 2020) and presented at ICRA 2022. Thi

s work was presented at SICB 2023 where she was a finalist for Best Studen

t Presentation in the Division of Comparative Biomechanics.

\n

\nGargi Sadalgekar “Template-level robophysical models for stud

ying sustained terrestrial locomotion of amphibious fish”

\nAbstract: Studying terrestrial locomotion of amphibious fishes informs ho

w early tetrapods may have invaded land. The water-land interface often ha

s wet\, deformable substrates like mud and sand that challenge locomotion.

Recent progress has been made on understanding limbed and limbless tetrap

od locomotion by studying robots as active physical models of model organi

sms. Robophysical models complement animals with their high controllabilit

y and repeatability for systematic experiments. They also complement theor

etical and computational models because they enact physical laws in the re

al world\, which is especially useful for studying locomotion in complex t

errain. Here\, we created the first robophysical models for studying susta

ined terrestrial locomotion of amphibious fishes on controlled mud as a mo

del wet deformable substrate. Our three robots are on the template level (

lowest degree-of-freedom to generate a target locomotor behavior) and repr

esent mudskippers\, ropefish\, and bichirs that use appendicular\, axial\,

and axial-appendicular strategies\, respectively. The mudskipper robot ro

tated two fins in phase to raise the body and “crutch” forward on mud. The

ropefish robot used body lateral undulation to “surface-swim” on mud. The

bichir robot combined body undulation and out-of-phase fin rotations to “

army-crawl” forward on mud. Each robot generated qualitatively similar loc

omotion on mud as its model organism. We are currently refining the robots

and performing systematic experiments on mud of a wide range of strengths

.

\nBio: Gargi Sadalgekar is a 2nd year master’s student

in the Terradynamics Lab at Johns Hopkins University and is interested in

developing bio-inspired robots to investigate locomotion in extreme enviro

nments. Her current work focuses on developing low-order robophysical mode

ls of amphibious fish to uncover general principles of locomotion over wet

deformable substrates\, and this work was presented at SICB 2023 where sh

e was a finalist for Best Student Presentation in the Division of Comparat

ive Biomechanics. Gargi received a BSE in Mechanical and Aerospace Enginee

ring from Princeton University with a minor in Robotics and Information Sy

stems.

\n

\nYaqing Wang “Force sensing can help robot

s reconstruct potential energy landscape and guide locomotor transitions t

o traverse large obstacles”

\nAbstract: Legged robots alrea

dy excel at maintaining stability during upright walking and running to st

ep over small obstacles. However\, they must further traverse large obstac

les comparable to body size to enable a broader range of applications like

search and rescue in rubble and sample collection in rocky Martian hills.

Our lab’s recent research demonstrated that legged robots can traverse la

rge obstacles if they can be destabilized to transition across various loc

omotor modes. When viewed on a potential energy landscape of the system\,

which results from physical interaction with obstacles\, these locomotor t

ransitions are strenuous barrier-crossing transitions between landscape ba

sins. Because potential energy landscape gradients are closely related to

terrain reaction forces and torques\, we hypothesize that sensing obstacle

interaction forces allows landscape reconstruction\, which can guide robo

ts to cross barriers at the saddle to make transitions more easily (analog

ous to crossing a mountain ridge at its saddle). Here\, we created a robop

hysical model with custom 3-axis force sensors and surface contact sensors

to measure forces and contacts during interaction with large obstacles. W

e found that the measured forces indeed captured potential energy landscape

gradients well\, and that the locally measured gradients could be used to rough

ly reconstruct the potential energy landscape. Future work will investigat

e how to enable robots to make locomotor transitions at the landscape

saddle based on local landscape reconstruction.

\nBio: Yaqing Wang i

s a fourth-year PhD student in Dr. Chen Li’s lab (Terradynamics lab). His

work focuses on understanding locomotor transitions in bio and bio-inspire

d terrestrial locomotion. He received a B.S. in Mechanical Engineering at

Tsinghua University in China. He recently presented this work at the annua

l APS March meeting.

DTSTART;TZID=America/New_York:20230405T120000

DTEND;TZID=America/New_York:20230405T130000

LOCATION:Hackerman B17

SEQUENCE:0

SUMMARY:LCSR Seminar: Student Seminar

URL:https://lcsr.jhu.edu/events/lcsr-seminar-student-2/

X-COST-TYPE:free

END:VEVENT

BEGIN:VEVENT

UID:ai1ec-13548@lcsr.jhu.edu

DTSTAMP:20240427T103210Z

CATEGORIES:

CONTACT:LCSR\; lcsr-admin@jhu.edu

DESCRIPTION:\n\n

\nAbstract:

\nEmot

ional intelligence for artificial systems is not a luxury but a necessity.

It is paramount for many applications that require both short and long–te

rm engaging human–technology interactions\, including entertainment\, hosp

itality\, education\, and healthcare. However\, creating artificially inte

lligent systems and interfaces with social and emotional skills is a chall

enging task. Progress in industry and developments in academia provide us

with a positive outlook\; however\, the artificial emotional intelligence of th

e current technology is still quite limited. Creating technology with arti

ficial emotional intelligence requires the development of perception\, lea

rning\, action and adaptation capabilities\, and the ability to execute th

ese pipelines in real-time in human-AI interactions. Truly addressing thes

e challenges relies on cross-fertilization of multiple research fields\, i

ncluding psychology\, nonverbal behaviour understanding\, psychiatry\, vis

ion\, social signal processing\, affective computing\, and human-computer

and human-robot interaction. My lab’s research has been pushing the state

of the art in a wide spectrum of research topics in this area\, including

the design and creation of new datasets\; novel feature representations a

nd learning algorithms for sensing and understanding human nonverbal behav

iours in solo\, dyadic and group settings\; designing short/long-term huma

n-robot adaptive interactions for wellbeing\; and creating algorithmic sol

utions to mitigate the bias that creeps into these systems.

\nIn thi

s talk\, I will present the recent explorations of the Cambridge Affective

Intelligence and Robotics Lab in these areas with insights for human embo

died-AI interaction research.

\nBio:

\nHatice

Gunes is a Professor of Affective Intelligence and Robotics (AFAR) and le

ads the AFAR Lab at the University of Cambridge’s Department of Computer S

cience and Technology. Her expertise is in the areas of affective computin

g and social signal processing cross-fertilising research in multimodal in

teraction\, computer vision\, signal processing\, machine learning and soc

ial robotics. She has published over 155 papers in these areas (H-index=36

\, citations > 7\,300)\, with most recent works on lifelong learning

for facial expression recognition\, fairness\, and affective robotics\;

and longitudinal HRI for wellbeing. She has served as an Associate Edi

tor for IEEE Transactions on Affective Computing\, IEEE Transactions on Mu

ltimedia\, and Image and Vision Computing Journal\, and has guest edited m

any Special Issues\, the latest ones being 2022 Int’l Journal of Social Ro

botics Special Issue on Embodied Agents for Wellbeing\, 2022 Frontiers in

Robotics and AI Special Issue on Lifelong Learning and Long-Term Human-Rob

ot Interaction\, and 2021 IEEE Transactions on Affective Computing Specia

l Issue on Automated Perception of Human Affect from Longitudinal Behav

ioural Data. Other research highlights include Outstanding PC Award at A

CM/IEEE HRI’23\, RSJ/KROS Distinguished Interdisciplinary Research Award F

inalist at IEEE RO-MAN’21\, Distinguished PC Award at IJCAI’21\, Best P

aper Award Finalist at IEEE RO-MAN’20\, Finalist for the 2018 Frontiers Sp

otlight Award\, Outstanding Paper Award at IEEE FG’11\, and Best Demo Awar

d at IEEE ACII’09. Prof Gunes is a former President of the Association for

the Advancement of Affective Computing (2017-2019)\, is/was the General C

o-Chair of ACM ICMI’24 and ACII’19\, and the Program Co-Chair of ACM/IEEE

HRI’20 and IEEE FG’17. She was the Chair of the Steering Board of IEEE Tra

nsactions on Affective Computing (2017-2019) and was a member of the Human

-Robot Interaction Steering Committee (2018-2021). Her research has been su

pported by various competitive grants\, with funding from Google\, the Eng

ineering and Physical Sciences Research Council UK (EPSRC)\, Innovate UK\,

British Council\, Alan Turing Institute and EU Horizon 2020. In 2019 she

was awarded a prestigious EPSRC Fellowship to investigate adaptive robotic

emotional intelligence for wellbeing (2019-2024) and has been named a Fac

ulty Fellow of the Alan Turing Institute – UK’s national centre for data s

cience and artificial intelligence (2019-2021). Prof Gunes is currently a

Staff Fellow of Trinity Hall\, a Senior Member of the IEEE\, and a member

of the AAAC.

DTSTART;TZID=America/New_York:20230412T120000

DTEND;TZID=America/New_York:20230412T130000

LOCATION:Hackerman B17

SEQUENCE:0

SUMMARY:LCSR Seminar: Hatice Gunes “Emotional Intelligence for Human-Embodi

ed AI Interaction”

URL:https://lcsr.jhu.edu/events/hatice-gunes/

X-COST-TYPE:free

END:VEVENT

BEGIN:VEVENT

UID:ai1ec-13698@lcsr.jhu.edu

DTSTAMP:20240427T103210Z

CATEGORIES:

CONTACT:

DESCRIPTION:Zoom Link for Seminar Recorded seminars for the 2022/

2023 school year

\n

\nAbstract:

\nOld monkeys may have stories. Some could be lessons learned to help overc

ome obstacles. The first part of this seminar discusses classical robotic

applications in industry and critical factors in the development and appli

cations. The second part discusses intelligent manufacturing with the use

of data and easy-to-use analytics\, necessary in modern-day manufacturing.

Moving forward\, some opportunities in robotics in intelligent manufactur

ing are discussed.

\n

\n \n

Bio:

\nDr. Day was previously a Senior VP of

Foxconn Automation Technology. Dr. Day began his career in 1970 as a co-op

equipment development engineer at IBM Burlington Vt. and later continued

to plow in the manufacturing automation field with General Motors\, Fanuc\

, Rockwell Automation\, Stoneridge and Foxconn. Dr. Day was the founder of Fox

bot\, with 80\,000 units deployed in various applications. In June 2016\,

Dr. Day received the Joseph F. Engelberger award from the Robot Industries

Association for a lifetime career contribution in the automotive and elec

tronic industries.

\n

DTSTART;TZID=America/New_York:20230418T110000

DTEND;TZID=America/New_York:20230418T120000

LOCATION:106 Latrobe Hall

SEQUENCE:0

SUMMARY:LCSR Seminar: Chia Day “Robotics in Intelligent Manufacturing”

URL:https://lcsr.jhu.edu/events/lcsr-seminar-chia-day-robotics-in-intellige

nt-manufacturing/

X-COST-TYPE:free

END:VEVENT

BEGIN:VEVENT

UID:ai1ec-13550@lcsr.jhu.edu

DTSTAMP:20240427T103210Z

CATEGORIES:

CONTACT:LCSR\; lcsr-admin@jhu.edu

DESCRIPTION:

\n\n\n

\n“Games Without Fr

ontiers: Beating Super Mario Bros. 1-1 with a 3D-Printed Soft Robotic

Hand”

\n Ryan D. Sochol\

, Ph.D.

\n

\nAssociate P

rofessor\, Department of Mechanical Engineering

\nAffiliate

Faculty\, Fischell Department of Bioengineering

\nExecutive

Committee Member\, Maryland Robotics Center

\nFischell Insti

tute Fellow\, Robert E. Fischell Institute for Biomedical Devices

\nAffiliate Faculty\, Institute for Systems Research

\nJam

es Clark School of Engineering

\nUniversity of Maryland\, College Par

k

\n

\nAbstract:

\n

\nOver the pa

st decade\, the field of “soft robotics” has established itself as uniquel

y suited for applications that would be difficult or impossible to realize

using traditional\, rigid-bodied robots. The reliance on compliant mater

ials that are often actuated by fluidic (e.g.\, hydraulic or pneu

matic) means presents a number of inherent benefits for soft robots\, part

icularly in terms of safety for human-robot interactions and adaptability

for manipulating complex and/or delicate objects. Unfortunately\, progres

s has been impeded by broad challenges associated with controlling the und

erlying fluidics of such systems. In this seminar\, Prof. Ryan D. Sochol

will discuss how his Bioinspired Advanc

ed Manufacturing (BAM) Laboratory is leveraging the capabilities

of two alternative types of additive manufacturing (or “three-dimensional

(3D) printing”) technologies to address these critical barriers. Specific

ally\, Prof. Sochol will describe his lab’s recent strategies for using th

e 3D nanoprinting approach\, “Two-Photon Direct Laser Writing”\, and the i

nkjet 3D printing technique\, “PolyJet 3D Printing”\, to engineer soft rob

otic systems that comprise integrated fluidic circuitry… including a soft

robotic “hand” that plays Nintendo.

\n

\nBiography:

\nProf. Ryan D. Sochol is an Associate Professor of Mechanic

al Engineering within the A. James Clark School of Engineering at the Univ

ersity of Maryland\, College Park. Prof. Sochol received his B.S. in Mech

anical Engineering from Northwestern University in 2006\, and both his M.S

. and Ph.D. in Mechanical Engineering from the University of California\,

Berkeley\, in 2009 and 2011\, respectively\, with Doctoral Minors in Bioen

gineering and Public Health. Prior to joining the faculty at UMD\, Prof.

Sochol served two primary academic roles: (i) as an NIH Postdocto

ral Trainee within the Harvard-MIT Division of Health Sciences & Technolog

y\, Harvard Medical School\, and Brigham & Women’s Hospital\, and (ii

) as the Director of the Micro Mechanical Methods for Biology (M3

B) Laboratory Program within the Berkeley Sensor & Actuator Center a

t UC Berkeley. Prof. Sochol also served as a Visiting Postdoctoral Fellow

at the University of Tokyo. In 2019\, Prof. Sochol was elected Co-Presid

ent of the Mid-Atlantic Micro/Nano Alliance. His group received IEEE

MEMS Outstanding Student Paper Awards in both 2019 and 2021 and the Spring

er Nature Best Paper Award (Runner-Up) in 2022. P

rof. Sochol received the NSF CAREER Award in 2020 and the Ear

ly Career Award from the IOP Journal of Micromechanics and Microengin

eering in 2021\, and was recently honored as an inaugural Rising Sta

r by the journal\, Advanced Materials Technologies\, in 2023.

\n

DTSTART;TZID=America/New_York:20230419T120000

DTEND;TZID=America/New_York:20230419T130000

LOCATION:Hackerman B17

SEQUENCE:0

SUMMARY:LCSR Seminar: Ryan Sochol “Games without Frontiers: Beating Super M

ario Bros. 1-1 with a 3D printed Soft Robotic Hand”

URL:https://lcsr.jhu.edu/events/ryan-sochol/

X-COST-TYPE:free

END:VEVENT

BEGIN:VEVENT

UID:ai1ec-13552@lcsr.jhu.edu

DTSTAMP:20240427T103210Z

CATEGORIES:

CONTACT:LCSR\; lcsr-admin@jhu.edu

DESCRIPTION:

\n\n\n

\nAyushi Sinha Ph.D.

\nJob title and affiliation: Senior Scient

ist\, Philips

\nJHU degrees and year(s) of degree(s): Ph.D. Computer Science 2018\, MSE Computer Science 2014

\nShort bio: Ayushi Sinha is a Senior Scientist at Philips working

on image guided therapy systems including C-arm X-ray imaging systems. Ay

ushi received a BS in Computer Science and a BA in Mathematics from Provid

ence College\, RI\, and a MSE and Ph.D. in Computer Science from Johns Hop

kins University\, MD. She remained at Hopkins as a Provost’s Postdoctoral

Fellow followed by a Research Scientist before joining Philips in late 201

9. Her primary research interest is in image analysis to enable automation

of medical imaging systems and integration of multiple systems.

\n

\nCan Kocabalk

anli M.S.E.

\nJob title and affiliation:

Computer Vision Research Scientist at PediaMetrix

\nJHU degr

ees and year(s) of degree(s): BS Mechanical Engineering 2019\, MS

E Robotics 2020

\nShort bio: Originally from Istanb

ul\, Turkey\, Can came to JHU for his undergraduate degree and early on ex

plored an interest in robotics through coursework and the robotics minor.

He completed his master’s research and thesis under Prof. Taylor in 2020 o

n an autonomous endoscope safety system. Since graduation\, Can has been w

orking as a Computer Vision Research Scientist at PediaMetrix\, a medical

imaging startup focused on infant healthcare. There he has worked on devel

oping\, deploying\, and validating image processing and vision algorithms\

, machine and deep learning models\, as well as acquiring 510(k) clearance

. Since September 2022\, he has taken a leadership role in their R&D depar

tment. Can is interested in making healthcare more robust and accessible t

hrough innovation and technology and is the co-inventor of 2 US patents.\n

\n

\nMich

ael Kutzer Ph.D.

\nJob title and affiliation: Associate Professor\, United States Naval Academy Department of Weapo

ns\, Robotics\, and Control Engineering/Instructor\, JHU-EP Mechanical Eng

ineering Program

\nJHU degrees and year(s) of degree(s): M.S.E. Mechanical Engineering 2007\, Ph.D. Mechanical Engineering 20

12

\nShort bio: Mike Kutzer received his Ph.D. in m

echanical engineering from the Johns Hopkins University\, Baltimore\, MD\,

USA in 2012. He is currently an Associate Professor in the Weapons\, Robo

tics\, and Control Engineering Department (WRCE) at the United States Nava

l Academy (USNA). Prior to joining USNA\, he worked as a senior researcher

in the Research and Exploratory Development Department of the Johns Hopki

ns University Applied Physics Laboratory (JHU/APL). His research interests

include robotic manipulation\, computer vision and motion capture\, appli

cations of and extensions to additive manufacturing\, mechanism design and

characterization\, continuum manipulators\, redundant mechanisms\, and mo

dular systems.

\n

\n

\n

DTSTART;TZID=America/New_York:20230426T120000

DTEND;TZID=America/New_York:20230426T130000

LOCATION:Hackerman B17

SEQUENCE:0

SUMMARY:LCSR Seminar: Careers in Robotics: A Panel Discussion With Experts

From Industry and Academia

URL:https://lcsr.jhu.edu/events/robotics/

X-COST-TYPE:free

END:VEVENT

BEGIN:VEVENT

UID:ai1ec-13826@lcsr.jhu.edu

DTSTAMP:20240427T103210Z

CATEGORIES:

CONTACT:

DESCRIPTION:Click here to see recording.

DTSTART;TZID=America/New_York:20230830T120000

DTEND;TZID=America/New_York:20230830T130000

LOCATION:Hackerman B17

SEQUENCE:0

SUMMARY:LCSR Seminar: Welcome Townhall “Review of LCSR”

URL:https://lcsr.jhu.edu/events/lcsr-seminar-welcome-townhall-review-of-lcs

r-hackerman-b17/

X-COST-TYPE:free

END:VEVENT

BEGIN:VEVENT

UID:ai1ec-13828@lcsr.jhu.edu

DTSTAMP:20240427T103210Z

CATEGORIES:

CONTACT:

DESCRIPTION: Dr. Juan Wachs is a Professor and Faculty Scholar

in the Industrial Engineering School at Purdue University\, Professor of

Biomedical Engineering (by courtesy) and an Adjunct Associate Professor of

Surgery at IU School of Medicine. He is currently serving at NSF as a Pro

gram Director for robotics and AI programs at CISE. He is also the directo

r of the Intelligent Systems and Assistive Technologies (ISAT) Lab at Purd

ue\, and he is affiliated with the Regenstrief Center for Healthcare Engin

eering. He completed postdoctoral training at the Naval Postgraduate Schoo

l’s MOVES Institute under a National Research Council Fellowship from the

National Academies of Sciences. Dr. Wachs received his B.Ed.Tech in Electr

ical Education from ORT Academic College\, at the Hebrew University of Jer

usalem campus\, and his M.Sc and Ph.D in Industrial Engineering and Manage

ment from the Ben-Gurion University of the Negev\, Israel. He is the recip

ient of the 2013 Air Force Young Investigator Award and was named a 2015 H

elmsley Senior Scientist Fellow\, a 2016 Fulbright U.S. Scholar\, the 2017 Ja

mes A. and Sharon M. Tompkins Rising Star Associate Professor\, and a 2018 A

CM Distinguished Speaker. He is also an Associate Editor of IEEE Transacti

ons on Human-Machine Systems and Frontiers in Robotics and AI.

\n

\n

Click here for the recording of Dr. Wac

hs’s Seminar.

DTSTART;TZID=America/New_York:20230906T120000

DTEND;TZID=America/New_York:20230906T130000

LOCATION:Hackerman B17

SEQUENCE:0

SUMMARY:LCSR Seminar: Juan P. Wachs “Gore Robots: From Blood and Guts to Bi

ts and Bytes”

URL:https://lcsr.jhu.edu/events/lcsr-seminar-juan-p-wachs-title-tbd-hackerm

an-b17/

X-COST-TYPE:free

X-WP-IMAGES-URL:thumbnail\;https://lcsr.jhu.edu/wp-content/uploads/2023/07/

Juan-Wachs-300x275.jpg\;216\;198\,medium\;https://lcsr.jhu.edu/wp-content/

uploads/2023/07/Juan-Wachs-300x275.jpg\;216\;198\,large\;https://lcsr.jhu.

edu/wp-content/uploads/2023/07/Juan-Wachs-300x275.jpg\;216\;198\,full\;htt

ps://lcsr.jhu.edu/wp-content/uploads/2023/07/Juan-Wachs-300x275.jpg\;216\;

198

END:VEVENT

BEGIN:VEVENT

UID:ai1ec-13874@lcsr.jhu.edu

DTSTAMP:20240427T103210Z

CATEGORIES:

CONTACT:Michele Greatti\; 4105166841\; mgreatt1@jhu.edu

DESCRIPTION:

DTSTART;TZID=America/New_York:20230908T130000

DTEND;TZID=America/New_York:20230908T150000

LOCATION:Taharka Brothers Ice Cream truck @ Outdoors\, between Shriver & Ma

lone

SEQUENCE:0

SUMMARY:LCSR Welcome (Back) Ice Cream Social

URL:https://lcsr.jhu.edu/events/lcsr-welcome-back-ice-cream-social/

X-COST-TYPE:free

X-WP-IMAGES-URL:thumbnail\;https://lcsr.jhu.edu/wp-content/uploads/2023/09/

2023-Welcome-Back-Ice-Cream--223x300.jpg\;223\;300\,medium\;https://lcsr.j

hu.edu/wp-content/uploads/2023/09/2023-Welcome-Back-Ice-Cream--223x300.jpg

\;223\;300\,large\;https://lcsr.jhu.edu/wp-content/uploads/2023/09/2023-We

lcome-Back-Ice-Cream--223x300.jpg\;223\;300\,full\;https://lcsr.jhu.edu/wp

-content/uploads/2023/09/2023-Welcome-Back-Ice-Cream--223x300.jpg\;223\;30

0

END:VEVENT

BEGIN:VEVENT

UID:ai1ec-13878@lcsr.jhu.edu

DTSTAMP:20240427T103210Z

CATEGORIES:

CONTACT:The LCSR Graduate Student Association (LCSR-GSA)\; lcsr-gsa@jhu.edu

DESCRIPTION:

\nDearest LCSR Community!

\n

\nWE ARE BACK WITH OUR MONDAY BAGELS TRADI

TION!!

\n

\nPlease join us this coming Monday 09/11 at 10.30

am at the students’ office space in Hackerman 136/137 for some fresh morni

ng bagels!! We will provide various cream cheese spreads\, and there will

be a coffee machine\, water boiler and K-cups for you to enjoy as well (br

ing your own mugs though).

\n

\nLooking forward to seeing you

all there!

\nCHEERIOS!

\n

\nLydia & Benjamin

\n

\nThe LCSR Graduate Student Association (LCSR-GSA)

\nLaborato

ry for Computational Sensing and Robotics

\nJohns Hopkins University

\nlcsr-gsa@jhu.edu

DTSTART;TZID=America/New_York:20230911T103000

DTEND;TZID=America/New_York:20230911T113000

LOCATION:Hackerman 136/137

SEQUENCE:0

SUMMARY:GSA Monday Bagels

URL:https://lcsr.jhu.edu/events/gsa-monday-bagels/

X-COST-TYPE:free

X-WP-IMAGES-URL:thumbnail\;https://lcsr.jhu.edu/wp-content/uploads/2023/09/

bagel-2023-300x300.png\;300\;300\,medium\;https://lcsr.jhu.edu/wp-content/

uploads/2023/09/bagel-2023-300x300.png\;300\;300\,large\;https://lcsr.jhu.

edu/wp-content/uploads/2023/09/bagel-2023-300x300.png\;300\;300\,full\;htt

ps://lcsr.jhu.edu/wp-content/uploads/2023/09/bagel-2023-300x300.png\;300\;

300

END:VEVENT

BEGIN:VEVENT

UID:ai1ec-13830@lcsr.jhu.edu

DTSTAMP:20240427T103210Z

CATEGORIES:

CONTACT:

DESCRIPTION:Mark S. Savage is Associate Director\, Life Design Lab & Lif

e Design Educator for Engineering master’s Students at Johns Hopkins Unive

rsity.

\nClick here for a link to Ma

rk’s presentation.

DTSTART;TZID=America/New_York:20230913T120000

DTEND;TZID=America/New_York:20230913T130000

LOCATION:Hackerman B17

SEQUENCE:0

SUMMARY:LCSR Seminar: Mark Savage “COMPOSING AN EFFECTIVE RESUME FOR JOB &

INTERNSHIP APPLICATIONS”

URL:https://lcsr.jhu.edu/events/lcsr-seminar-mark-savage-tbd-hackerman-b17/

X-COST-TYPE:free

END:VEVENT

BEGIN:VEVENT

UID:ai1ec-13832@lcsr.jhu.edu

DTSTAMP:20240427T103210Z

CATEGORIES:

CONTACT:

DESCRIPTION:Abstract: The haptic (touch) sensations fel

t when interacting with the physical world create a rich and varied impres

sion of objects and their environment. Humans can discover a significant a

mount of information through touch with their environment\, allowing them

to assess object properties and qualities\, dexterously handle objects\, a

nd communicate social cues and emotions. Humans are spending significantly

more time in the digital world\, however\, and are increasingly interacti

ng with people and objects through a digital medium. Unfortunately\, digit

al interactions remain unsatisfying and limited\, representing the human a

s having only two sensory inputs: visual and auditory.

\n

\nT

his talk will focus on methods for building haptic and multimodal models t

hat can be used to create realistic virtual interactions in mobile applica

tions and in VR. I will discuss data-driven modeling methods that involve

recording force\, vibration\, and sound data from direct interactions wit

h the physical objects. I will compare this to new methods using machine l

earning to generate and tune haptic models using human preferences.

\nBio: Heather Cul

bertson is a Gabilan Assistant Professor of Computer Science at the Univer

sity of Southern California. Her research focuses on the design and contro

l of haptic devices and rendering systems\, human-robot interaction\, and

virtual reality. In particular\, she is interested in creating haptic interac

tions that are natural and realistically mimic the touch sensations experi

enced during interactions with the physical world. Previously\, she was a

research scientist in the Department of Mechanical Engineering at Stanford

University. She received her PhD in the Department of Mechanical Engineer

ing and Applied Mechanics (MEAM) at the University of Pennsylvania. She is

currently serving as Publications Chair for IEEE Haptics Symposium. Her a

wards include the NSF CAREER Award\, IEEE Technical Committee on Haptics E

arly Career Award\, and the Okawa Research Foundation Award.

\n

\nClick here to watch a video recording

of this presentation.

DTSTART;TZID=America/New_York:20230920T120000

DTEND;TZID=America/New_York:20230920T130000

LOCATION:Hackerman B17

SEQUENCE:0

SUMMARY:LCSR Seminar: Heather Culbertson “Using Data for Increased Realism

with Haptic Modeling and Devices”

URL:https://lcsr.jhu.edu/events/lcsr-seminar-heather-culbertson-tbd/

X-COST-TYPE:free

X-WP-IMAGES-URL:thumbnail\;https://lcsr.jhu.edu/wp-content/uploads/2023/07/

HCulbertson-225x300.jpg\;186\;248\,medium\;https://lcsr.jhu.edu/wp-content

/uploads/2023/07/HCulbertson-225x300.jpg\;186\;248\,large\;https://lcsr.jh

u.edu/wp-content/uploads/2023/07/HCulbertson-225x300.jpg\;186\;248\,full\;

https://lcsr.jhu.edu/wp-content/uploads/2023/07/HCulbertson-225x300.jpg\;1

86\;248

END:VEVENT

BEGIN:VEVENT

UID:ai1ec-13844@lcsr.jhu.edu

DTSTAMP:20240427T103210Z

CATEGORIES:

CONTACT:

DESCRIPTION:IROS practice talks by the students followed by a 5-minute Q&A

session after each paper.

\n\n- Hisashi Ishida

\n- Juan Ba

rragan

\n- Michael Kam

\n- Jiawei Liu

\n- Jim Wang.

\n

DTSTART;TZID=America/New_York:20230927T120000

DTEND;TZID=America/New_York:20230927T130000

LOCATION:Hackerman B17

SEQUENCE:0

SUMMARY:LCSR Seminar: IROS paper presentations

URL:https://lcsr.jhu.edu/events/lcsr-seminar-student-seminars/

X-COST-TYPE:free

END:VEVENT

BEGIN:VEVENT

UID:ai1ec-13836@lcsr.jhu.edu

DTSTAMP:20240427T103210Z

CATEGORIES:

CONTACT:

DESCRIPTION:Abstract:

\nSuccess in medical d

evice development depends on alignment among the needs of patients\, provide

rs\, and hospitals. In this talk\, I will cover 20 years of my journey in d

efining clinical needs and business objectives and developing products in

the space of medical devices and robotics. I will discuss products across ima

ge guidance\, navigation\, ultrasound\, and robotics technologies\, starti

ng with products in electrophysiology mapping and lung interventions\, cov

ering breakthroughs in quantitative imaging for ultrasound and AI-based ul

trasound exams. We will talk about projects that worked and those that fai

led\, addressing key issues in the development cycle. In the final section\,

I will cover surgical and interventional robotic developments at Johnson

& Johnson.

\n

\nBio:

\nAs VP o

f Robotic Strategy at Johnson and Johnson MedTech\, Aleksandra is leading

Johnson & Johnson efforts in defining the future of surgical robotics. Joh

nson & Johnson MedTech is present in almost every operating room in the wo

rld with more than 75 million procedures each year. Aleksandra has over 20

years of experience in medical devices and robotics. Starting her career i

n Germany\, at RWTH Aachen University\, Helmholtz Institute and University

Hospital\, Aleksandra obtained a PhD (Dr. Ing.) with a specialization in surg

ical robotics. After graduate school\, Aleksandra spent 15 years at Philip

s in New York and Boston\, starting as a scientist developing products acr

oss different clinical areas (e.g.\, electrophysiology\, vascular interven

tional\, lung interventions\, cardiology) with technical focus on image gu

idance\, navigation\, and robotics. In the later years\, she became innova

tion lead for Ultrasound and subsequently Image Guided Therapy at Philips.

Today\, she heads strategy for the leading surgical robotics company Johnson

& Johnson. Aleksandra grew up in the former Yugoslavia (Montenegro and Serbia)

. She obtained her master’s degree (Dipl.-Ing.) in Electrical Engineering

from Belgrade University in Serbia and her PhD in Engineering from RWTH Aachen

University in Germany. A strong believer in formal education\, Aleksandra a

lso has an executive degree from MIT Sloan School of Management and a certifica

te in Industrial Design from Massachusetts College of Art and Design.

DTSTART;TZID=America/New_York:20231004T120000

DTEND;TZID=America/New_York:20231004T130000

LOCATION:Hackerman B17

SEQUENCE:0

SUMMARY:LCSR Seminar: Aleksandra Popovic “My Journey in Medical Devices and

Robotics”

URL:https://lcsr.jhu.edu/events/lcsr-seminar-aleksandra-popovic-tbd/

X-COST-TYPE:free

X-WP-IMAGES-URL:thumbnail\;https://lcsr.jhu.edu/wp-content/uploads/2023/07/

Popovic-300x300.jpeg\;241\;241\,medium\;https://lcsr.jhu.edu/wp-content/up

loads/2023/07/Popovic-300x300.jpeg\;241\;241\,large\;https://lcsr.jhu.edu/

wp-content/uploads/2023/07/Popovic-300x300.jpeg\;241\;241\,full\;https://l

csr.jhu.edu/wp-content/uploads/2023/07/Popovic-300x300.jpeg\;241\;241

END:VEVENT

BEGIN:VEVENT

UID:ai1ec-13840@lcsr.jhu.edu

DTSTAMP:20240427T103210Z

CATEGORIES:

CONTACT:

DESCRIPTION: Continuum robots change their shape with elast

ic deformations rather than mechanical joints and often elastically de

form under typical forces for their applications. They have advantages in

some environments where geometry may be complex and not well-known in adva

nce of operations\, which is a common feature of many applications outside

of factory settings. Continuum robots leverage contact and deformation to

complete tasks\, relying on passive mechanical behaviors in addition to s

oftware-based intelligence and traditional control systems. For example\,

robots with slender\, snake-like\, elastic bodies can navigate the tortuou

s human anatomy like the colon or the esophagus to perform surgery\, or th

ey can navigate through challenging industrial environments like pipelines

and machinery to perform “minimally invasive” inspection and maintenance.

However\, slender bodies and mechanical softness come with distinct engi

neering challenges. Many slender-bodied soft robots have adopted remote ac

tuation approaches that suffer from exponentially worsening friction as th

ey bend. Additionally\, many approaches to actuation result in an undesira

ble coupling between actuators. In this seminar\, I will describe our rece

nt research that has focused on improving the understanding of continuum m

echanism manipulator designs\, models\, and applications. Ongoing studies

are aimed at (i) improving the design of electromechanically driven contin

uum robots\; (ii) investigating methods to mitigate friction in long\, sle

nder devices\; and (iii) improving modeling approaches for continuum robot

s.

\n

\nBio:

\nHunter B. Gilbert recei

ved the B.S. degree in mechanical engineering in 2010 from Rice University

(Houston\, Texas)\, and the Ph.D. degree in mechanical engineering in 201

6 from Vanderbilt University (Nashville\, Tennessee). He conducted a postd

octoral fellowship in the Physical Intelligence Department of the Max Plan

ck Institute for Intelligent Systems (Stuttgart\, Germany)\, supported by

an Alexander von Humboldt Stiftung postdoctoral fellowship from 2016-2019.

He is currently an Associate Professor of Mechanical Engineering at Louis

iana State University\, where he is co-director of the Innovation in Contr

ol and Robotics Engineering (iCORE) research laboratory. He is an Associat

e Editor for the IEEE Robotics and Automation Letters and for Frontiers in

Robotics and AI. His research interests are centered on several themes wi

thin applied mechanics and dynamic systems: mechanically “soft” or deforma

ble robots\, systems and technologies focused on human health and safety\,

and modeling of complex dynamic systems.

DTSTART;TZID=America/New_York:20231025T120000

DTEND;TZID=America/New_York:20231025T130000

LOCATION:Hackerman B17

SEQUENCE:0

SUMMARY:LCSR Seminar: Hunter Gilbert “Continuum Robots: Addressing Challeng

es through Modeling\, Design\, and Control”

URL:https://lcsr.jhu.edu/events/lcsr-seminar-hunter-gilbert-tbd/

X-COST-TYPE:free

X-WP-IMAGES-URL:thumbnail\;https://lcsr.jhu.edu/wp-content/uploads/2023/07/

HGilbert-236x300.jpg\;236\;300\,medium\;https://lcsr.jhu.edu/wp-content/up

loads/2023/07/HGilbert-236x300.jpg\;236\;300\,large\;https://lcsr.jhu.edu/

wp-content/uploads/2023/07/HGilbert-236x300.jpg\;236\;300\,full\;https://l

csr.jhu.edu/wp-content/uploads/2023/07/HGilbert-236x300.jpg\;236\;300

END:VEVENT

BEGIN:VEVENT

UID:ai1ec-13842@lcsr.jhu.edu

DTSTAMP:20240427T103210Z

CATEGORIES:

CONTACT:Michele Greatti\; 4105166841\; lcsr-admin@jh.edu

DESCRIPTION: Eric Diller\, Associate Professor\, Department of

Mechanical and Industrial Engineering\, Robotics Institute\, Institute of

Biomedical Engineering (cross-appointed)\; University of Toronto

\n

\nAbstract: Micro-scale mobile robots can physically access small

spaces in a versatile and non-invasive manner. Such microrobots under seve

ral mm in size have potential unique applications for surgery\, sensing an

d drug delivery in healthcare\, microfactories and as scientific tools. Th

ese devices are powered and controlled remotely using externally-applied m

agnetic fields for motion in 3D. This talk will introduce how we design an

d produce these tiny machines\, as well as how we create magnetic fields t

hat can move them as functional robots inside the body. Moving microrobots

for swimming\, crawling and grasping powered by these magnetic fields wil

l be shown\, along with our progress towards medical applications for diag

nosis in the gut\, and in neurosurgery.

\nEric Diller received the B.S. a

nd M.S. degrees in mechanical engineering from Case Western Reserve Un

iversity in 2010 and the Ph.D. degree in mechanical engineering from Carne

gie Mellon University in 2013. He is currently Associate Professor in the

Department of Mechanical and Industrial Engineering and the Robotics Insti

tute at the University of Toronto\, where he is director of the Microrobot

ics Laboratory. His research interests include micro-scale robotics\, with

a focus on fabrication and control for remote actuation of micro-sca

le devices using magnetic fields\, micro-scale robotic manipulation\, and

smart materials. He is an Associate Editor of the Journal of Micro-Bio Rob

otics\, and received the IEEE Robotics & Automation Society 2020 Early Car

eer Award. He has also received the 2018 Ontario Early Researcher Award\,

the University of Toronto Innovation Award\, and the Canadian Society of M

echanical Engineering’s 2018 I.W. Smith Award for research contributions i

n medical microrobotics. He envisions an accessible future of medicine fre

e of invasive colonoscopies\, open surgery and long recoveries.

\nLab website: http://microrobotics.mie.utoronto.ca/

DTSTART;TZID=America/New_York:20231101T120000

DTEND;TZID=America/New_York:20231101T130000

LOCATION:Hackerman B17

SEQUENCE:0

SUMMARY:LCSR Seminar: Eric Diller “Micro-Scale Surgery: Using Magnetic Fiel

ds to Control Tiny Robots in the Gut and Brain”

URL:https://lcsr.jhu.edu/events/lcsr-seminar-eric-diller-tbd/

X-COST-TYPE:free

X-WP-IMAGES-URL:thumbnail\;https://lcsr.jhu.edu/wp-content/uploads/2023/07/

Diller-300x290.jpg\;300\;290\,medium\;https://lcsr.jhu.edu/wp-content/uplo

ads/2023/07/Diller-300x290.jpg\;300\;290\,large\;https://lcsr.jhu.edu/wp-c

ontent/uploads/2023/07/Diller-300x290.jpg\;300\;290\,full\;https://lcsr.jh

u.edu/wp-content/uploads/2023/07/Diller-300x290.jpg\;300\;290

END:VEVENT

BEGIN:VEVENT

UID:ai1ec-13834@lcsr.jhu.edu

DTSTAMP:20240427T103210Z

CATEGORIES:

CONTACT:

DESCRIPTION:Abstract:

\nAs robotics increasingly integ

rates into our social and professional spheres\, the question of how human

s perceive and trust robots has gained prominence. Are robots regarded as

utilitarian tools\, designed to fulfill tasks efficiently\, or are they em

braced as teammates\, eliciting human-like trust? Some argue that humans i

nteract with robots in a way that resembles social interactions with other

humans\, a viewpoint aligned with the ‘computers are social actors’ (CASA

) concept. Conversely\, proponents of the robot-as-a-tool view contend tha

t humans perceive robots as non-human tools\, promoting the use of human-t

o-automation theories and trust measures. In this presentation\, we delve

into these arguments and propose an empirical study aimed at shedding ligh

t on this debate.

\nBio:

\nLionel Robert holds the position of Professor in the School

of Information at the University of Michigan and boasts a number of distin

guished memberships\, including AIS Distinguished Member Cum Laude and IEE

E Senior Member. Dr. Robert obtained his Ph.D. in Information Systems from

Indiana University\, where he was a BAT Fellow and KPMG Scholar. Currentl

y\, he is the director of the Michigan Autonomous Vehicle Research Intergr

oup Collaboration (MAVRIC) and affiliated with various institutions\, incl

uding the University of Michigan Robotics Institute\, the National Center

for Institutional Diversity at the University of Michigan\, and the Center

for Computer-Mediated Communication at Indiana University. Additionally\,

he is a member of the AAAS Community Advisory Board. Dr. Robert’s researc

h interests revolve around human collaboration with technology\, which is

reflected in his published works in leading information systems and inform

ation science journals as well as notable computer and robotics conference

s. His research has garnered numerous accolades\, including best paper awa

rds/nominations from the Journal of the Association of Information Systems

\, the ACM Conference on Computer-Supported Cooperative Work\, SAE Interna

tional\, and the ACM/IEEE International Conference on Human–Robot Interact

ion. Dr. Robert has received research funding from various sources\, such

as the AAA Foundation\, Automotive Research Center/U.S. Army\, Army Resear

ch Laboratory\, Toyota Research Institute\, MCity\, Lieberthal-Rogel Cente

r for Chinese Studies\, and the National Science Foundation. He has also b

een featured in print\, radio\, and television for major media outlets lik

e ABC\, CBS\, CNN\, CNBC\, Michigan Radio\, Inc.\, New York Times\, and th

e Associated Press.

\n

\n

DTSTART;TZID=America/New_York:20231108T120000

DTEND;TZID=America/New_York:20231108T130000

LOCATION:Hackerman B17

SEQUENCE:0

SUMMARY:LCSR Seminar: Lionel Robert “Human Trust in Robots: Teammate or Too

l? Does it Matter?”

URL:https://lcsr.jhu.edu/events/lcsr-seminar-lionel-robert-tbd/

X-COST-TYPE:free

X-WP-IMAGES-URL:thumbnail\;https://lcsr.jhu.edu/wp-content/uploads/2023/07/

robert_lionel_0-214x300.jpg\;214\;300\,medium\;https://lcsr.jhu.edu/wp-con

tent/uploads/2023/07/robert_lionel_0-214x300.jpg\;214\;300\,large\;https:/

/lcsr.jhu.edu/wp-content/uploads/2023/07/robert_lionel_0-214x300.jpg\;214\